
10 Essential Data Transformation Tools for Data Engineers in 2026

Discover the top data transformation tools shaping the future for data engineers in 2026.

Introduction

As the landscape of data engineering evolves, demand for effective data transformation tools continues to surge, driving innovation and efficiency across industries. In 2026, data engineers can choose from a diverse array of platforms designed to streamline workflows, improve data quality, and deliver real-time insights. With so many options available, however, organizations must determine which tools are essential for their specific needs. This article explores ten pivotal data transformation tools that are reshaping how data engineers work, highlighting their distinctive features and the value they bring to modern data management.

Decube: Comprehensive Data Trust Platform for Transformation

Decube emerges as a leading trust platform designed for the AI era, offering a cohesive solution for observability, discovery, and governance. Its core offerings encompass:

- Cataloging

- Pipeline visibility

- Governance

- Information products

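Notably, advanced features such as automated column-level lineage mapping and machine learning-powered anomaly detection significantly enhance information observability.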
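Decube describes its anomaly detection as machine learning-powered, and its actual models are not documented here. To illustrate what volume anomaly detection does in principle, the sketch below flags a day whose row count deviates sharply from a trailing window using a plain z-score. This is a simple statistical stand-in, not Decube's method; the function name, window, and threshold are hypothetical.

```python
from statistics import mean, stdev


def flag_volume_anomalies(row_counts, window=7, threshold=3.0):
    """Return indices of days whose row count deviates from the trailing window.

    A day is flagged when its z-score against the previous `window` days
    exceeds `threshold` in absolute value. (Hypothetical helper for illustration.)
    """
    anomalies = []
    for i in range(window, len(row_counts)):
        history = row_counts[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # Perfectly flat history: any change at all is treated as anomalous.
            if row_counts[i] != mu:
                anomalies.append(i)
            continue
        z = (row_counts[i] - mu) / sigma
        if abs(z) > threshold:
            anomalies.append(i)
    return anomalies


# Daily row counts for a table; day 8 shows a sudden drop (e.g. a partial load).
daily_rows = [1000, 1020, 990, 1010, 1005, 995, 1015, 1008, 120, 1002]
print(flag_volume_anomalies(daily_rows))  # [8]
```

Note that day 9 is not flagged even though it follows the outlier: the drop inflates the window's standard deviation, which is one reason production systems tend to use more robust models than a raw z-score.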

The system's automated crawling capability ensures efficient metadata management, allowing for real-time updates without manual intervention. This feature is particularly advantageous for engineers working in AI and machine learning. As enterprises increasingly aim to improve their information management, Decube distinguishes itself as a preferred choice, especially within regulated industries where trust and compliance are critical.

Recently, Decube secured USD 3 million in its latest funding round, raising its total funding to USD 5 million, which underscores its growth and solid market position. Jatin Solanki, the founder and CEO, emphasizes that "enterprises can't scale AI without a trusted context layer across their information," a statement that captures the platform's mission.

Furthermore, the information observability market is projected to expand significantly by 2026, reflecting the increasing demand for robust information management solutions that support informed decision-making and operational efficiency. For instance, PT Superbank has used Decube to ensure its information is ready for production use, demonstrating the system's efficiency in practical applications.

Matillion: Cloud-Native Data Transformation Powerhouse

Matillion stands out as a cloud-native platform designed for data transformation, significantly streamlining the ETL process. It integrates with various data sources and offers a user-friendly interface for constructing and managing data pipelines. Because these changes are implemented directly in the cloud, organizations can improve both performance and scalability. Consequently, Matillion is particularly advantageous for businesses seeking to simplify their ETL processes.

The platform's advanced features, such as automated data loading and processing, let teams concentrate on deriving valuable insights rather than managing infrastructure. By 2026, the cloud-native market is projected to grow substantially, reflecting rising demand for efficient integration solutions. Organizations that use Matillion for their ETL processes have reported notable improvements in operational efficiency and data quality, underscoring the platform's effectiveness in addressing the challenges data engineers face.
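Running transformations "directly in the cloud" usually means an ELT pattern: raw records are loaded first, and the cleanup runs as SQL inside the warehouse. The sketch below illustrates that pattern with an in-memory SQLite database standing in for a cloud warehouse; it does not use Matillion's API, and the table names and sample rows are hypothetical.

```python
import sqlite3

# Hypothetical source extract: every field arrives as text, with one incomplete row.
raw_orders = [
    ("1001", "2026-01-05", "49.90", "eu"),
    ("1002", "2026-01-05", "15.00", "US"),
    ("1003", "2026-01-06", "", "us"),
]

conn = sqlite3.connect(":memory:")  # in-memory SQLite standing in for a cloud warehouse

# Load: land the records untouched in a raw table.
conn.execute("CREATE TABLE raw_orders (id TEXT, order_date TEXT, amount TEXT, region TEXT)")
conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?, ?)", raw_orders)

# Transform: the cleanup runs as SQL inside the warehouse, not in the client.
conn.execute("""
    CREATE TABLE orders AS
    SELECT CAST(id AS INTEGER)  AS order_id,
           DATE(order_date)     AS order_date,
           CAST(amount AS REAL) AS amount,
           UPPER(region)        AS region
    FROM raw_orders
    WHERE raw_orders.amount <> ''
""")

# Downstream reporting reads only the cleaned table.
daily = conn.execute(
    "SELECT order_date, SUM(amount) FROM orders GROUP BY order_date ORDER BY order_date"
).fetchall()
print(daily)
```

Keeping the raw table intact means the transformation can be changed and rerun later without re-extracting from the source.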

Informatica: Reliable Data Integration and Transformation Tool

Informatica is recognized as a leading integration tool, providing a comprehensive solution for managing information and utilizing data transformation tools across various environments. Its Intelligent Data Management Cloud (IDMC) allows organizations to seamlessly connect, manage, and consolidate their data, ensuring reliability and credibility in decision-making processes.

Key features of IDMC encompass:

- Advanced data quality management

- Robust data governance

These features are crucial for organizations aiming to maintain high data quality, especially as 57% of leaders identify data reliability as a significant barrier to advancing AI initiatives from pilot phases to full production.
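Data quality management generally comes down to declaring rules and measuring how many records satisfy them. As a rough, tool-agnostic illustration (not the IDMC API), the sketch below scores a small dataset against three hypothetical rules for completeness, format validity, and plausible ranges.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass
class Rule:
    """A hypothetical data quality rule applied to one column."""
    name: str
    column: str
    check: Callable[[object], bool]


EMAIL = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

RULES = [
    Rule("customer_id is present", "customer_id", lambda v: v not in (None, "")),
    Rule("email is well-formed", "email", lambda v: isinstance(v, str) and bool(EMAIL.match(v))),
    Rule("age is plausible", "age", lambda v: isinstance(v, int) and 0 <= v <= 120),
]


def score(records, rules=RULES):
    """Return the pass rate (0.0 to 1.0) of each rule across all records."""
    results = {}
    for rule in rules:
        passed = sum(1 for r in records if rule.check(r.get(rule.column)))
        # An empty dataset trivially passes every rule.
        results[rule.name] = passed / len(records) if records else 1.0
    return results


customers = [
    {"customer_id": "C1", "email": "ana@example.com", "age": 34},
    {"customer_id": "",   "email": "bob@example",     "age": 29},
    {"customer_id": "C3", "email": "cy@example.org",  "age": 240},
    {"customer_id": "C4", "email": None,              "age": 51},
]

for name, rate in score(customers).items():
    print(f"{name}: {rate:.0%}")
# customer_id is present: 75%
# email is well-formed: 50%
# age is plausible: 75%
```

Pass rates like these can then be tracked over time or used as gates before data moves from pilot to production use.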

Furthermore, Informatica's scalable and adaptable architecture caters to organizations of all sizes, particularly those navigating complex data environments. As organizations increasingly emphasize data quality, IDMC enhancements such as real-time integration capabilities and automated governance are vital for effective transformation and informed decision-making.

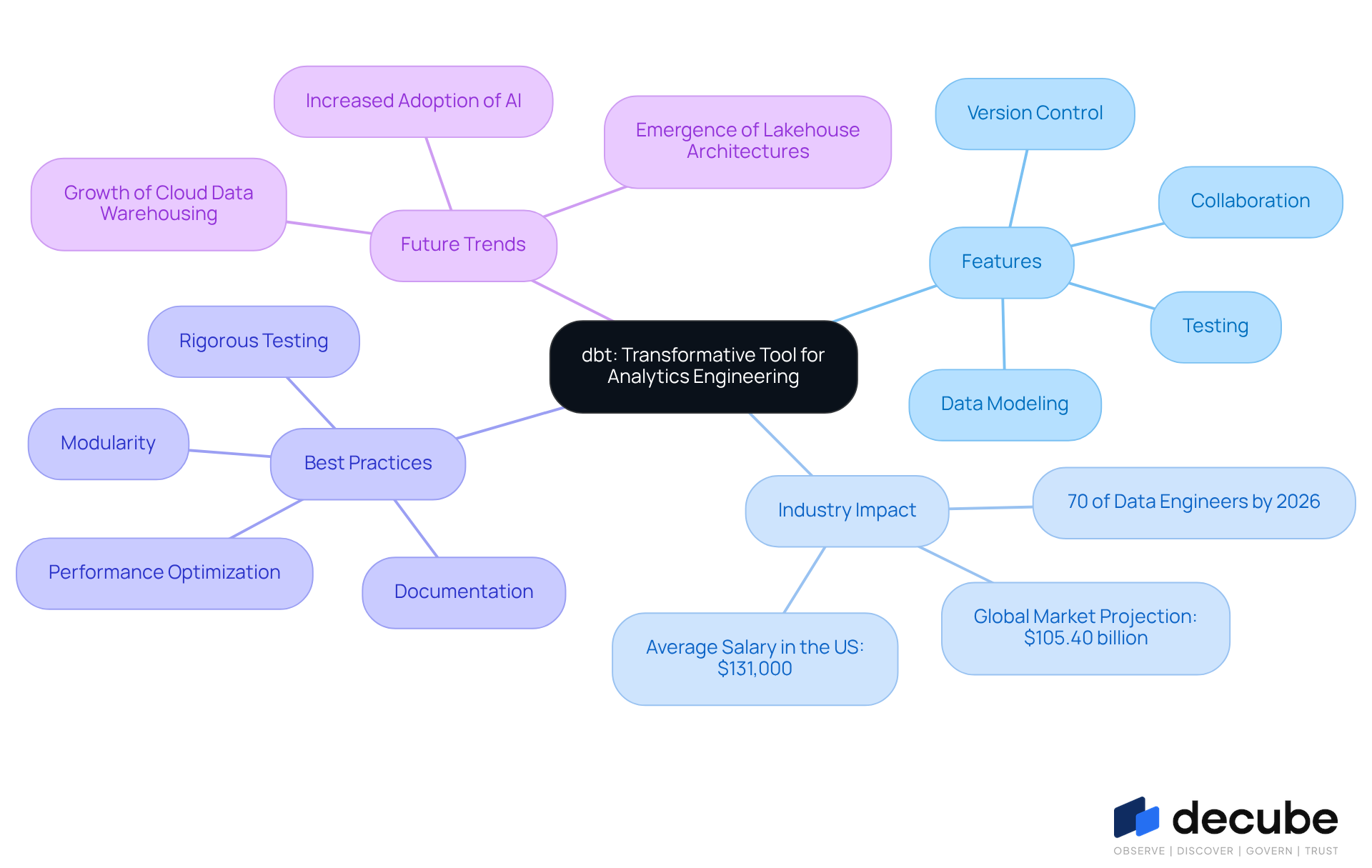

dbt: Transformative Tool for Analytics Engineering

dbt (data build tool) is a pivotal transformation tool that enables teams to turn raw data into actionable insights. It promotes best practices in analytics engineering by supporting the development of modular SQL models inside data warehouses. By 2026, over 70 percent of data engineers are expected to rely on tools like dbt, underscoring its critical role in the industry. Its emphasis on software engineering practices such as testing and version control enhances the reliability of data transformations and fosters a culture of shared ownership among teams. Furthermore, dbt's integration with various data platforms allows organizations to uphold high-quality standards while streamlining their workflows. As analytics engineering continues to evolve, dbt remains a preferred choice for engineers navigating the complexities of modern data environments.
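In dbt, models reference each other through `{{ ref('...') }}` rather than hard-coded table names, which lets the tool infer a dependency graph and build models in the right order, and its data tests are queries that return failing rows. The sketch below imitates those two ideas in plain Python with SQLite and the standard-library `graphlib` (Python 3.9+). It is not dbt's engine (dbt compiles models with Jinja), and the model names and data are hypothetical.

```python
import re
import sqlite3
from graphlib import TopologicalSorter

# Hypothetical models written dbt-style: each is a SELECT that may reference
# other models via {{ ref('...') }} instead of hard-coded table names.
MODELS = {
    "stg_orders": "SELECT id AS order_id, customer_id, amount FROM raw_orders",
    "stg_customers": "SELECT id AS customer_id, name FROM raw_customers",
    "customer_revenue": """
        SELECT c.name, SUM(o.amount) AS revenue
        FROM {{ ref('stg_orders') }} AS o
        JOIN {{ ref('stg_customers') }} AS c USING (customer_id)
        GROUP BY c.name
    """,
}

REF = re.compile(r"\{\{\s*ref\('(\w+)'\)\s*\}\}")


def build(conn, models):
    """Materialize models as tables in dependency order and return that order."""
    # Each model's predecessors are the models it ref()s.
    graph = {name: set(REF.findall(sql)) for name, sql in models.items()}
    order = list(TopologicalSorter(graph).static_order())
    for name in order:
        # Resolve each ref() to the table name of the referenced model.
        sql = REF.sub(lambda m: m.group(1), models[name])
        conn.execute(f"CREATE TABLE {name} AS {sql}")
    return order


conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE raw_orders (id INTEGER, customer_id INTEGER, amount REAL);
    CREATE TABLE raw_customers (id INTEGER, name TEXT);
    INSERT INTO raw_orders VALUES (1, 10, 20.0), (2, 10, 5.0), (3, 11, 7.5);
    INSERT INTO raw_customers VALUES (10, 'Acme'), (11, 'Globex');
""")

order = build(conn, MODELS)
print(order)  # staging models first, customer_revenue last

# A dbt-style "not_null" test: the query returns failing rows, so zero rows means pass.
failures = conn.execute("SELECT * FROM customer_revenue WHERE revenue IS NULL").fetchall()
assert not failures, f"not_null test failed: {failures}"
print(conn.execute("SELECT name, revenue FROM customer_revenue ORDER BY name").fetchall())
```

Because dependencies are declared rather than scripted, adding a new model only requires writing its SELECT; the build order follows automatically.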

Alteryx: User-Friendly Data Preparation and Transformation

Alteryx is recognized as one of the premier data transformation tools for data preparation, engineered to automate complex workflows. Its intuitive drag-and-drop interface accommodates both technical and non-technical users, supporting data cleansing and analysis. In 2026, organizations using Alteryx reported notable productivity gains.

Alongside Alteryx's data preparation capabilities, Decube's unified information trust platform adds governance and enhanced observability. Together, they help organizations derive deeper insights from their data while ensuring its quality. The introduction of generative AI capabilities in Alteryx lets users interact with data using natural language, simplifying the analytics process. As organizations increasingly prioritize automation, the combined strengths of Alteryx and Decube make them essential tools for teams that aim to make informed decisions and maximize the value of their information assets.

Apache Airflow: Orchestrating Data Transformation Workflows

Apache Airflow is a robust open-source platform that enables engineers to programmatically author, schedule, and monitor workflows. By defining complex information pipelines as Directed Acyclic Graphs (DAGs), Airflow efficiently orchestrates data transformation workflows. Its scalability and flexibility make it an ideal choice for managing workflows across diverse information environments.
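The core idea behind a DAG is that each task runs only after all of its upstream tasks succeed. The toy model below reproduces that idea in plain Python, borrowing Airflow's `a >> b` syntax for declaring dependencies and adding simple retries. It is not Airflow's API (real pipelines declare tasks with operators inside a `DAG` object); the `Task` class and `run_dag` function are hypothetical.

```python
from collections import deque


class Task:
    """A toy task node; `a >> b` records that b depends on a, mimicking Airflow's syntax."""

    def __init__(self, task_id, fn, retries=0):
        self.task_id, self.fn, self.retries = task_id, fn, retries
        self.downstream = []
        self.upstream_count = 0

    def __rshift__(self, other):
        self.downstream.append(other)
        other.upstream_count += 1
        return other  # returning the right-hand task allows chaining: a >> b >> c


def run_dag(tasks):
    """Run tasks in dependency order (Kahn's algorithm), retrying failed tasks."""
    remaining = {t.task_id: t.upstream_count for t in tasks}
    ready = deque(t for t in tasks if t.upstream_count == 0)
    completed = []
    while ready:
        task = ready.popleft()
        for attempt in range(task.retries + 1):
            try:
                task.fn()
                break
            except Exception:
                if attempt == task.retries:
                    raise  # out of retries: fail the whole run
        completed.append(task.task_id)
        # A downstream task becomes ready once all of its upstream tasks are done.
        for child in task.downstream:
            remaining[child.task_id] -= 1
            if remaining[child.task_id] == 0:
                ready.append(child)
    if len(completed) != len(tasks):
        raise ValueError("cycle detected: not a DAG")
    return completed


attempts = {"load": 0}


def flaky_load():
    """Fails on the first call to simulate a transient error."""
    attempts["load"] += 1
    if attempts["load"] < 2:
        raise ConnectionError("transient warehouse error")


extract = Task("extract", lambda: None)
transform = Task("transform", lambda: None)
load = Task("load", flaky_load, retries=2)
extract >> transform >> load

print(run_dag([extract, transform, load]))  # ['extract', 'transform', 'load']
```

The acyclic requirement is what makes this ordering possible: if tasks depended on each other in a loop, no task would ever become ready, which the final check reports as an error.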

Airflow is backed by a large open-source community, with contributions from more than 3,600 developers that continually expand its capabilities and integration options. Organizations such as Autodesk use Airflow to modernize and stabilize their data workflows, and Nick Wilson from Autodesk has praised Astronomer, a managed Airflow platform, for its stability and advanced capabilities.

Furthermore, 89% of users expect to use Airflow for more revenue-generating solutions by 2027. As information operations grow more complex, orchestration tools such as Airflow become vital for coordinating pipelines and ensuring timely delivery. Over half of respondents from large enterprises also highlighted Airflow's importance to their operations, underscoring its relevance in enterprise settings.

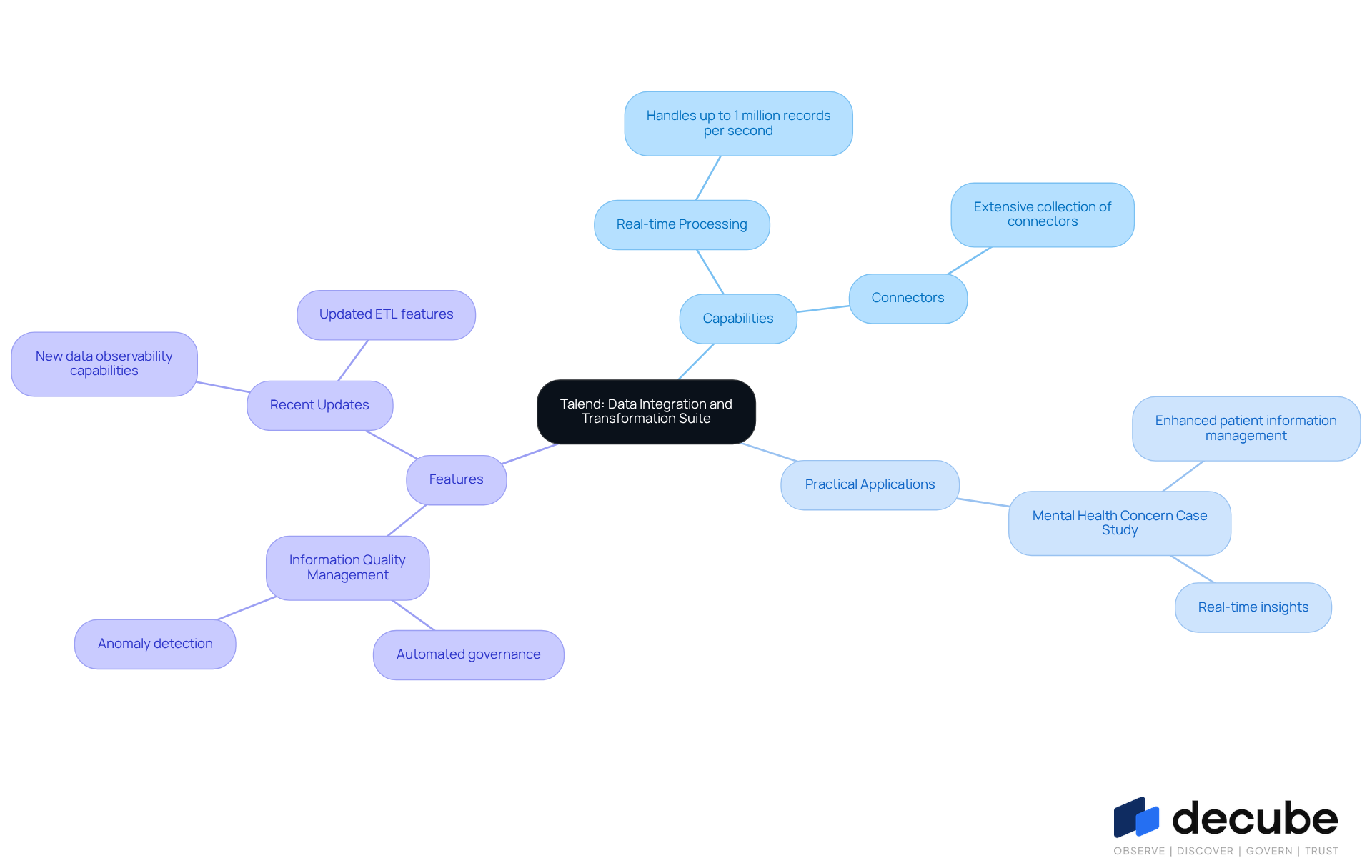

Talend: Versatile Data Integration and Transformation Suite

Talend is recognized as a powerful suite of data integration and transformation tools, designed to connect, transform, and manage information from diverse sources. Its intuitive interface and extensive collection of connectors simplify the creation of robust information pipelines. Notably, in 2026, Talend's real-time processing capabilities are particularly impressive, with the system able to handle up to one million records per second. This capability lets organizations respond swiftly to changing information environments, which is crucial for maintaining high data quality and operational efficiency.

Organizations such as Mental Health Concern have used Talend to enhance patient care through real-time insights, demonstrating the platform's efficiency in practical applications. Talend's data quality features empower teams to maintain data integrity while minimizing errors. Recent updates to the Talend transformation suite further enhance its functionality, offering advanced features that streamline workflows and promote collaboration among teams. With its comprehensive approach to data integration, Talend remains one of the leading data transformation tools for engineers seeking to optimize their processing workflows.
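High-throughput pipelines typically process records incrementally in small batches and split them into a main output and a reject output so that bad records are isolated rather than failing the whole job. The sketch below shows that pattern in plain Python; it is not Talend's API, and the validation rules, field names, and batch size are hypothetical.

```python
from itertools import islice
from typing import Iterable, Iterator, Optional


def batches(records: Iterable[dict], size: int) -> Iterator[list]:
    """Yield successive lists of at most `size` records without loading the whole stream."""
    it = iter(records)
    while batch := list(islice(it, size)):
        yield batch


def validate(record: dict) -> Optional[str]:
    """Return an error message, or None when the record passes the (hypothetical) rules."""
    if not record.get("id"):
        return "missing id"
    if record.get("amount") is None:
        return "missing amount"
    if record["amount"] < 0:
        return "negative amount"
    return None


def process(stream: Iterable[dict], batch_size: int = 500):
    """Route each record to the main output (cleaned) or the reject output (with a reason)."""
    accepted, rejected = [], []
    for batch in batches(stream, batch_size):
        for rec in batch:
            error = validate(rec)
            if error:
                rejected.append({**rec, "error": error})
            else:
                accepted.append({**rec, "id": rec["id"].strip().upper()})
    return accepted, rejected


# A generator stands in for an unbounded source such as a message queue.
events = ({"id": f" a{i} ", "amount": amt} for i, amt in enumerate([10, -5, None, 7]))
good, bad = process(events, batch_size=2)
print([r["id"] for r in good])    # ['A0', 'A3']
print([r["error"] for r in bad])  # ['negative amount', 'missing amount']
```

For brevity the sketch collects results in lists; a real streaming job would write each batch downstream as soon as it is processed, so memory use stays constant regardless of volume.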

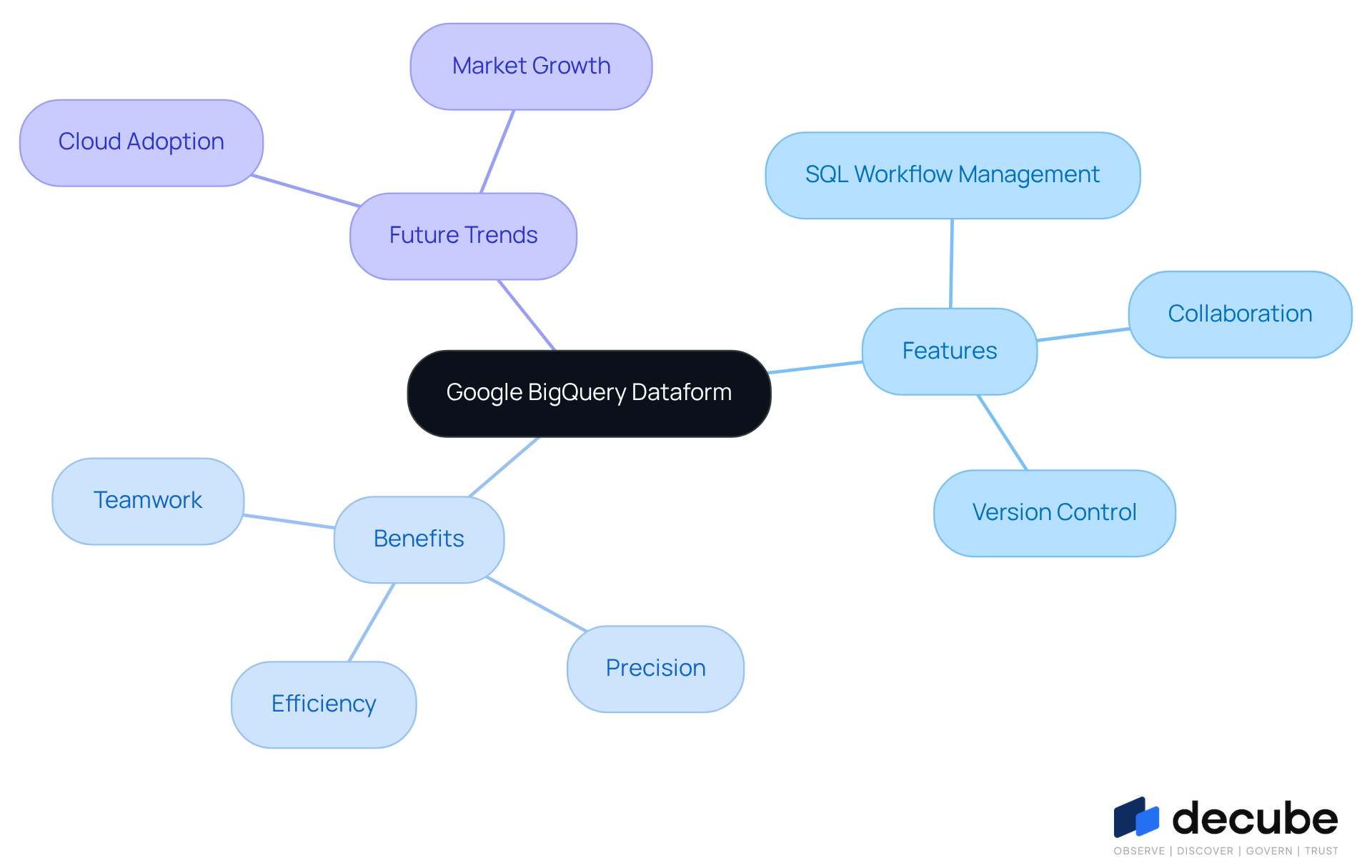

Google BigQuery Dataform: Cloud-Based Transformation Management

Google BigQuery Dataform is a cloud-native platform designed to streamline the management of SQL-based transformations within BigQuery. It lets teams define, test, and schedule complex SQL workflows in one place. Thanks to its tight integration with BigQuery, businesses can take full advantage of BigQuery for their information processing needs.
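Testing in Dataform is built around assertions: queries that look for rows violating a condition, where the assertion passes only if the query returns no rows. The sketch below generates two such assertion queries (a unique key and a non-null check) and runs them against SQLite as a stand-in for BigQuery. The helper functions and the `daily_sales` table are hypothetical and do not reproduce Dataform's own syntax.

```python
import sqlite3


def unique_key_assertion(table, columns):
    """SQL returning every key combination that appears more than once."""
    cols = ", ".join(columns)
    return f"SELECT {cols}, COUNT(*) AS n FROM {table} GROUP BY {cols} HAVING COUNT(*) > 1"


def non_null_assertion(table, columns):
    """SQL returning every row where any of the given columns is NULL."""
    cond = " OR ".join(f"{c} IS NULL" for c in columns)
    return f"SELECT * FROM {table} WHERE {cond}"


def run_assertions(conn, assertions):
    """Run each assertion; an assertion passes only if its query returns zero rows."""
    return {name: conn.execute(sql).fetchall() for name, sql in assertions.items()}


conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE daily_sales (sale_date TEXT, store_id INTEGER, revenue REAL);
    INSERT INTO daily_sales VALUES
        ('2026-03-01', 1, 120.0),
        ('2026-03-01', 1, 95.0),
        ('2026-03-01', 2, NULL);
""")

results = run_assertions(conn, {
    "unique_sale_date_store": unique_key_assertion("daily_sales", ["sale_date", "store_id"]),
    "revenue_not_null": non_null_assertion("daily_sales", ["revenue"]),
})
for name, violations in results.items():
    status = "PASS" if not violations else f"FAIL ({len(violations)} row(s))"
    print(name, status)
```

Returning the offending rows, rather than just a boolean, makes failures easier to debug because the query output shows exactly which records broke the rule.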

By 2026, the cloud computing market is projected to expand significantly, with over 85% of companies adopting cloud technologies. Dataform's support for version control makes it an indispensable tool for engineers working in cloud environments, enhancing project efficiency.

Notably, organizations using Dataform have reported positive results, underscoring its effectiveness in modern information workflows.

Fivetran: Automated Data Integration for Seamless Transformation

Fivetran is an automated integration platform that streamlines moving information from various sources into storage systems such as data warehouses. With an extensive library of prebuilt connectors, Fivetran allows organizations to start integrating data quickly, compressing source onboarding timelines from months to days. By automating the integration process, Fivetran keeps information current and reliable, enabling teams to focus on analysis rather than on pipeline management.
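Keeping a destination current without re-copying everything is commonly done with incremental syncs: each run copies only rows changed since a saved cursor and upserts them by primary key, so reruns are safe. The sketch below shows that general technique with SQLite; it is not a description of Fivetran's internals, and the function, table, and field names are hypothetical.

```python
import sqlite3


def incremental_sync(source_rows, dest, state):
    """Copy rows changed since the last cursor into `dest`, upserting by primary key.

    `state` holds the high-water mark (`updated_at`) reached by the previous run.
    Timestamps are ISO-8601 strings, so string comparison matches time order.
    """
    cursor = state.get("cursor", "")
    changed = [r for r in source_rows if r["updated_at"] > cursor]
    dest.executemany(
        """INSERT INTO customers (id, email, updated_at)
           VALUES (:id, :email, :updated_at)
           ON CONFLICT(id) DO UPDATE SET
               email = excluded.email,
               updated_at = excluded.updated_at""",
        changed,
    )
    if changed:
        state["cursor"] = max(r["updated_at"] for r in changed)
    return len(changed)


dest = sqlite3.connect(":memory:")
dest.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT, updated_at TEXT)")
state = {}
source = [
    {"id": 1, "email": "a@example.com", "updated_at": "2026-03-01T10:00"},
    {"id": 2, "email": "b@example.com", "updated_at": "2026-03-01T11:00"},
]
print(incremental_sync(source, dest, state))  # 2 (initial full load)

source[0] = {"id": 1, "email": "a.new@example.com", "updated_at": "2026-03-02T09:00"}
print(incremental_sync(source, dest, state))  # 1 (only the changed row)
print(incremental_sync(source, dest, state))  # 0 (nothing new)
```

One design caveat: a strict `>` comparison can miss a row that arrives later with exactly the same timestamp as the saved cursor, so real connectors often add overlap windows or use database change logs instead.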

Companies using Fivetran report that automated pipelines let them deliver integrations three to five times faster than traditional methods, underscoring the platform's efficiency in facilitating seamless information transformation. Furthermore, enforcing data contracts within this framework fosters collaboration among stakeholders, ensuring that the transformed information is not only reliable but also aligned with organizational standards. This alignment enhances overall data quality and trust.

As George Fraser, CEO of Fivetran, puts it, "AI only delivers value when the underlying information is [...] when it's needed." This statement highlights the critical importance of dependable integration platforms like Fivetran, especially considering that pipeline failures can cost enterprises an average of $3 million per month.

WhereScape: Automating Data Warehouse Development and Transformation

WhereScape stands out as a premier data warehouse automation tool, transforming how data warehouses are developed through sophisticated automation. With WhereScape, organizations can automate up to 95% of coding, significantly reducing the time and resources needed for warehouse projects. This approach not only streamlines these projects but also enhances their reliability.
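Warehouse automation tools generally work from metadata: a table is described once, and the repetitive DDL and load code is generated from that description. The sketch below illustrates the idea by generating a dimension table and an idempotent upsert load from a small metadata dictionary, then running both in SQLite. It does not reproduce WhereScape's metadata model or generated code; the metadata keys and table names are hypothetical.

```python
import sqlite3

# Hypothetical metadata describing one dimension table and its staging source.
DIM_CUSTOMER = {
    "name": "dim_customer",
    "source": "stg_customer",
    "business_key": "customer_code",
    "columns": {"customer_code": "TEXT", "name": "TEXT", "segment": "TEXT"},
}


def generate_ddl(meta):
    """Generate CREATE TABLE with a surrogate key and a unique business key."""
    cols = ",\n    ".join(f"{c} {t}" for c, t in meta["columns"].items())
    return (
        f"CREATE TABLE {meta['name']} (\n"
        f"    {meta['name']}_key INTEGER PRIMARY KEY,\n"
        f"    {cols},\n"
        f"    UNIQUE ({meta['business_key']})\n)"
    )


def generate_load(meta):
    """Generate an upsert that inserts new business keys and updates existing ones."""
    cols = ", ".join(meta["columns"])
    updates = ", ".join(
        f"{c} = excluded.{c}" for c in meta["columns"] if c != meta["business_key"]
    )
    # SQLite needs a WHERE clause on INSERT ... SELECT before an ON CONFLICT upsert.
    return (
        f"INSERT INTO {meta['name']} ({cols}) SELECT {cols} FROM {meta['source']} WHERE true\n"
        f"ON CONFLICT({meta['business_key']}) DO UPDATE SET {updates}"
    )


conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE stg_customer (customer_code TEXT, name TEXT, segment TEXT);
    INSERT INTO stg_customer VALUES ('C-1', 'Acme', 'SMB'), ('C-2', 'Globex', 'Enterprise');
""")
conn.execute(generate_ddl(DIM_CUSTOMER))
conn.execute(generate_load(DIM_CUSTOMER))

# A change in the source: rerunning the same generated load updates instead of duplicating.
conn.execute("UPDATE stg_customer SET segment = 'Enterprise' WHERE customer_code = 'C-1'")
conn.execute(generate_load(DIM_CUSTOMER))
print(conn.execute("SELECT customer_code, segment FROM dim_customer ORDER BY customer_code").fetchall())
```

Adding a column then means editing the metadata once and regenerating both statements, rather than hand-editing every script that touches the table.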

In 2026, WhereScape's robust automation features are crucial for organizations aiming to strengthen their information infrastructure and boost workflow efficiency. Companies using WhereScape have reported notable improvements in their warehouse development, underscoring the system's pivotal role in modern information transformation efforts.

As Paul Watson-Gover, a senior solutions architect at WhereScape, puts it, "[Data warehouse automation] is much more than simply automating the development process. It encompasses all of the core processes of [...], deployment, operations, impact analysis, and change management."

Conclusion

In conclusion, the landscape of data transformation tools is evolving rapidly, with several platforms emerging as essential assets for data engineers in 2026. Tools such as Decube, Matillion, Informatica, dbt, Alteryx, Apache Airflow, Talend, Google BigQuery Dataform, Fivetran, and WhereScape each offer unique features that cater to the diverse needs of organizations striving for efficient data management and transformation. By emphasizing automation, scalability, and data integrity, these tools collectively form a robust ecosystem that empowers data professionals to navigate the complexities of modern data environments.

Key insights highlight the strengths of each tool.

- Decube stands out for its comprehensive governance and observability features.

- Matillion excels in cloud-native ETL processes.

- Informatica offers reliable data integration capabilities.

- dbt promotes best practices in analytics engineering.

- Alteryx enhances data preparation through user-friendly interfaces.

- Apache Airflow orchestrates workflows effectively.

- Talend delivers real-time processing.

- Google BigQuery Dataform brings SQL transformation management to the cloud.

- Fivetran automates data integration.

- WhereScape automates data warehouse development.

Together, these tools illustrate the breadth of options available to data engineers.

As organizations continue to prioritize data-driven decision-making, the significance of adopting these transformative tools cannot be overstated. Investing in the right data transformation solutions will streamline workflows and enhance data quality and accessibility, ultimately driving better business outcomes. Data engineers are encouraged to explore these essential tools and leverage their capabilities to foster innovation and efficiency within their teams, ensuring they remain at the forefront of the data revolution.

Frequently Asked Questions

What is Decube and what are its main features?

Decube is a comprehensive data trust platform designed for the AI era, offering solutions for information observability, cataloging, pipeline visibility, governance, and information products. It includes advanced features like automated column-level lineage mapping and machine learning-powered anomaly detection.

How does Decube enhance information management?

Decube enhances information management through its automated crawling capability, which ensures efficient metadata management with real-time updates without manual intervention. This is particularly beneficial for engineers in AI and machine learning.

What recent funding has Decube received?

Decube recently secured USD 3 million in its latest funding round, raising its total funding to USD 5 million, indicating its growth and solid market position.

How does Decube support enterprises in scaling AI?

According to Jatin Solanki, the founder and CEO, Decube provides a trusted context layer across information, which is essential for enterprises to scale their AI initiatives effectively.

What is the projected growth of the information observability market?

The information observability market is projected to expand significantly by 2026, reflecting the increasing demand for robust information management solutions that support informed decision-making and operational efficiency.

How has PT Superbank utilized Decube?

PT Superbank has effectively used Decube to ensure its information is ready for production use, demonstrating the platform's efficiency in practical applications.

What is Matillion and what does it offer?

Matillion is a cloud-native platform designed for data transformation, streamlining the ETL process and integrating seamlessly with various data sources. It offers a user-friendly interface for managing data pipelines directly in the cloud.

What are the benefits of using Matillion for ETL processes?

Matillion allows organizations to enhance performance and scalability, automate data loading and processing, and focus on deriving valuable insights instead of managing infrastructure, leading to improvements in operational efficiency and data quality.

What is Informatica known for?

Informatica is recognized as a leading integration tool that provides a comprehensive solution for managing information and utilizing data transformation tools across various environments through its Intelligent Data Management Cloud (IDMC).

What key features does Informatica's IDMC offer?

IDMC includes advanced data quality management, robust data governance, and real-time integration capabilities, which are crucial for maintaining high data quality and supporting effective transformation.

Why is data reliability important for organizations?

Data reliability is significant because 57% of leaders identify it as a major barrier to advancing AI initiatives from pilot phases to full production, highlighting the need for trustworthy data management solutions.

List of Sources

- Decube: Comprehensive Data Trust Platform for Transformation

- Decube: $3 Million Raised To Build An Enterprise AI Data Context Layer (https://pulse2.com/decube-3-million-funding)

- Decube Raises USD 3 Million to Build Context Layer Powering Enterprise AI (https://finance.yahoo.com/news/decube-raises-usd-3-million-025100574.html)

- Decube raises US$3 mil to build context layer powering enterprise AI (https://digitalnewsasia.com/startups/decube-raises-us3-mil-build-context-layer-powering-enterprise-ai)

- Matillion: Cloud-Native Data Transformation Powerhouse

- Matillion Launches Data Integration Platform Natively on Snowflake Marketplace - BigDATAwire (https://hpcwire.com/bigdatawire/this-just-in/matillion-launches-data-integration-platform-natively-on-snowflake-marketplace)

- Data Quality Improvement Stats from ETL – 50+ Key Facts Every Data Leader Should Know in 2026 (https://integrate.io/blog/data-quality-improvement-stats-from-etl)

- Precisely and Matillion Collaborate to Hasten Data Modernization and Agentic AI Readiness (https://dbta.com/Editorial/News-Flashes/Precisely-and-Matillion-Collaborate-to-Hasten-Data-Modernization-and-Agentic-AI-Readiness-173822.aspx)

- Matillion recognized as a Challenger by the Gartner® Magic Quadrant™ (https://matillion.com/news/gartner-magic-quadrant-2025-3)

- Precisely and Matillion Partner to Accelerate Data Modernization and Agentic AI Readiness (https://finance.yahoo.com/news/precisely-matillion-partner-accelerate-data-130000103.html)

- Informatica: Reliable Data Integration and Transformation Tool

- Informatica builds deeper Microsoft integration to aid service providers (https://iteuropa.com/news/informatica-builds-deeper-microsoft-integration-aid-service-providers)

- Salesforce (Informatica) Named a Leader in the 2026 Gartner® Magic Quadrant™ for Augmented Data Quality Solutions for the 18th Time (https://informatica.com/about-us/news/news-releases/2026/02/20260217-salesforce-informatica-named-a-leader-in-the-2026-gartner-magic-quadrant-for-augmented-data-quality-solutions-for-the-18th-time.html)

- Informatica Named a Leader in 2026 Gartner® Magic Quadrant™ for Data & Analytics Governance Platforms (https://informatica.com/about-us/news/news-releases/2026/01/20260109-informatica-named-a-leader-in-2026-gartner-magic-quadrant-for-data-and-analytics-governance-platforms.html)

- Informatica and Microsoft Boost AI and Analytics with Enhanced Data Integration Capabilities - TechAfrica News (https://techafricanews.com/2026/03/24/informatica-and-microsoft-boost-ai-and-analytics-with-enhanced-data-integration-capabilities)

- New Global CDO Report Reveals Data Governance and AI Literacy as Key Accelerators in AI Adoption (https://informatica.com/about-us/news/news-releases/2026/01/20260127-new-global-cdo-report-reveals-data-governance-and-ai-literacy-as-key-accelerators-in-ai-adoption.html)

- dbt: Transformative Tool for Analytics Engineering

- 9 Must-read Inspirational Quotes on Data Analytics From the Experts (https://nisum.com/nisum-knows/must-read-inspirational-quotes-data-analytics-experts)

- dbt Best Practices Guide: Mastering Data Transformation in 2026 (https://successknocks.com/dbt-best-practices-guide-mastering-data)

- Data Engineering Stats 2026: Latest Market Insights & Trends (https://data.folio3.com/blog/data-engineering-stats)

- Latest product news and updates from dbt Labs (https://getdbt.com/blog/category/product-news)

- Alteryx: User-Friendly Data Preparation and Transformation

- Alteryx Accelerates its Next Phase of Growth with AI-Ready Data and Automation at Enterprise Scale (https://prnewswire.com/news-releases/alteryx-accelerates-its-next-phase-of-growth-with-ai-ready-data-and-automation-at-enterprise-scale-302707688.html)

- New Alteryx Research Highlights Trust and Data as Keys to Scaling AI Pilots - Alteryx (https://alteryx.com/about-us/newsroom/press-release/new-alteryx-research-highlights-trust-and-data-as-keys-to-scaling-ai-pilots)

- Alteryx Accelerates its Next Phase of Growth with AI-Ready Data and Automation at Enterprise Scale (https://finance.yahoo.com/news/alteryx-accelerates-next-phase-growth-130000112.html)

- Alteryx Accelerates its Next Phase of Growth with AI-Ready Data and Automation at Enterprise Scale - Alteryx (https://alteryx.com/about-us/newsroom/press-release/alteryx-accelerates-its-next-phase-of-growth-with-ai-ready-data-and-automation-at-enterprise-scale)

- Apache Airflow: Orchestrating Data Transformation Workflows

- State of Airflow 2026: The Orchestration Layer is Uniting Data, AI, and Enterprise Growth (https://astronomer.io/blog/state-of-airflow-2026)

- Astronomer Releases State of Apache Airflow® 2026 Report (https://finance.yahoo.com/news/astronomer-releases-state-apache-airflow-140000349.html)

- Astronomer Releases State of Apache Airflow® 2026 Report (https://astronomer.io/press-releases/astronomer-releases-state-of-apache-airflow-2026-report)

- Astronomer Releases State of Apache Airflow® 2026 Report (https://prnewswire.com/news-releases/astronomer-releases-state-of-apache-airflow-2026-report-302667480.html)

- Talend: Versatile Data Integration and Transformation Suite

- Talend in the News: Get the Latest on Talend and Data (https://talend.com/about-us/news)

- Talend Takes on High-Volume Data Integration | InformationWeek (https://informationweek.com/it-sectors/talend-takes-on-high-volume-data-integration)

- Talend News Articles (https://talend.com/about-us/articles)

- Talend Press Releases (https://talend.com/about-us/press-releases)

- Google BigQuery Dataform: Cloud-Based Transformation Management

- Dataform News & Updates for March 2026 (https://web.swipeinsight.app/topics/dataform)

- Latest Updates on Google Data Analytics (January 2026) (https://medium.com/@datadice/latest-updates-on-google-data-analytics-january-2026-f1ac96386982)

- Dataform release notes | Google Cloud Documentation (https://docs.cloud.google.com/dataform/docs/release-notes)

- 100+ Cloud Computing Statistics for 2026 | Complete Report (https://softjourn.com/insights/cloud-computing-stats)

- Fivetran: Automated Data Integration for Seamless Transformation

- Fivetran Selected by WM New Zealand to Power an AI-Ready Data Foundation - BigDATAwire (https://hpcwire.com/bigdatawire/this-just-in/fivetran-selected-by-wm-new-zealand-to-power-an-ai-ready-data-foundation)

- Fivetran Selected by WM New Zealand to Power an AI-Ready Data Foundation | Press | Fivetran (https://fivetran.com/press/fivetran-selected-by-wm-new-zealand-to-power-an-ai-ready-data-foundation)

- Tredence Named Fivetran 2026 Consulting Rising Star Partner of the Year (https://tredence.com/blog/tredence-named-fivetran-2026-consulting-rising-star-partner-of-the-year)

- WhereScape: Automating Data Warehouse Development and Transformation

- WhereScape at Big Data & AI World London 2026 (https://wherescape.com/events/big-data-ai-world-2026-london)

- Top Content on LinkedIn (https://linkedin.com/pulse/united-states-data-warehouse-automation-itaac)

- Modern Data Architecture in Practice with NetApp Instaclustr, WhereScape, and Hydrolix (https://dbta.com/Editorial/News-Flashes/Modern-Data-Architecture-in-Practice-with-NetApp-Instaclustr-WhereScape-and-Hydrolix-173605.aspx)

- Data Warehouse Automation Software Market Fueled by Rising Demand for Faster Data Integration, Cloud Adoption, and AI-Driven Analytics Workflows: Market Research Intellect (https://prnewswire.com/news-releases/data-warehouse-automation-software-market-fueled-by-rising-demand-for-faster-data-integration-cloud-adoption-and-ai-driven-analytics-workflows-market-research-intellect-302682536.html)
