10 Essential Data Validation Types for Reliable Data Pipelines

Discover key data validation types essential for ensuring reliable data pipelines and quality control.

Introduction

In an era where data informs critical decisions, ensuring the integrity of that data is essential. Organizations are increasingly adopting robust data validation techniques to guarantee that their pipelines are not only functional but also reliable and compliant. This article explores ten fundamental types of data validation that can significantly enhance data management practices. Effective validation minimizes errors and elevates overall data quality. As companies pursue operational excellence, the pressing question is: how can they implement these validation types to protect their data from inaccuracies and inconsistencies?

Decube: Advanced Data Validation for Modern Data Stacks

Decube provides a comprehensive suite of advanced information validation tools tailored for modern data systems. These tools include:

- Automated information governance

- Real-time monitoring

- Machine learning-powered anomaly detection

By integrating these capabilities, Decube supports the efficient operation of information pipelines and proactively addresses quality issues. Users commend Decube for the clarity it brings, which facilitates collaboration among teams.

This holistic approach not only enhances information integrity but also supports compliance with industry standards:

- SOC 2

- ISO 27001

- HIPAA

- GDPR

In 2026, the significance of automated information governance is underscored by its ability to improve operational efficiency and enable reliable analytics, establishing it as a vital component for enterprises aiming to leverage AI effectively.

Industry leaders emphasize that aligning information governance with business objectives is essential for unlocking strategic value and maximizing ROI. Furthermore, the continuous improvement of governance practices is crucial as new information sources and business KPIs emerge, ensuring organizations remain adaptable and responsive in a rapidly evolving information landscape.

Decube's automated crawling capability enhances metadata management: once sources are linked, they are auto-refreshed, which boosts information observability and governance. Additionally, the end-to-end information lineage visualization allows users to monitor the complete flow of information across components, further strengthening information quality and governance initiatives.

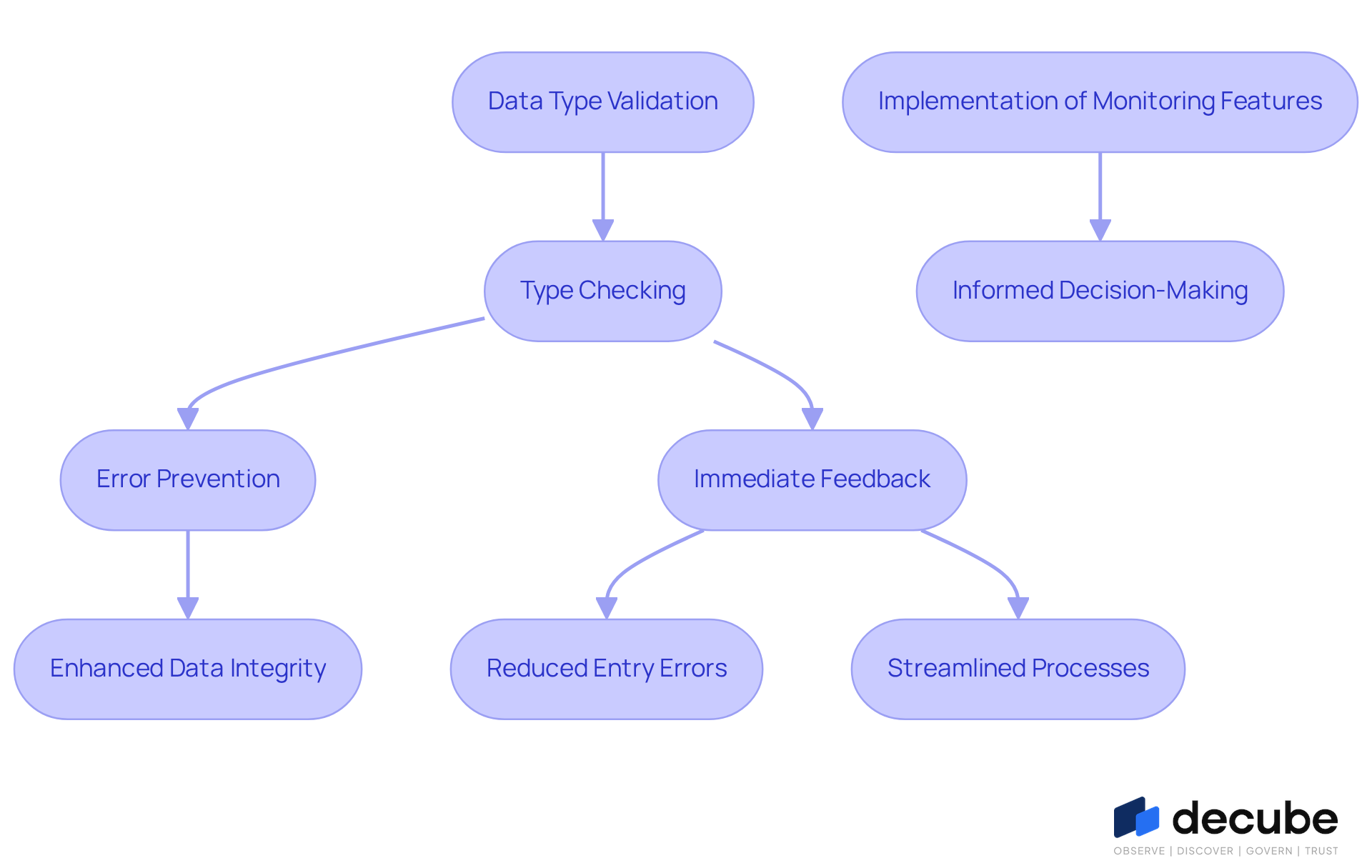

Data Type Validation: Ensuring Correct Data Formats

Type checking ensures that the information entered conforms to the required type for each field, such as integers, strings, or dates. This verification prevents errors that could disrupt processing and analysis. For example, if a field is designed to accept a date in the format YYYY-MM-DD but receives an arbitrary string instead, it can lead to significant downstream issues, including incorrect interpretations and flawed analytics. Organizations that implement stringent type checking protocols can greatly enhance their data integrity, ensuring that all entries are accurate and functional.
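
A type check of this kind can be sketched in a few lines of Python. This is a minimal illustration, not Decube's implementation: the schema, the field names (`id`, `name`, `signup`), and the helper names are all hypothetical.

```python
import re
from datetime import date

# Hypothetical schema: field name -> expected Python type, or "date" for YYYY-MM-DD strings.
SCHEMA = {"id": int, "name": str, "signup": "date"}

ISO_DATE = re.compile(r"[0-9]{4}-[0-9]{2}-[0-9]{2}")


def is_iso_date(value):
    """Return True if value is a YYYY-MM-DD string naming a real calendar date."""
    if not isinstance(value, str) or ISO_DATE.fullmatch(value) is None:
        return False
    try:
        date.fromisoformat(value)  # rejects impossible dates such as 2023-02-29
    except ValueError:
        return False
    return True


def check_types(record, schema=SCHEMA):
    """Return the names of fields whose values do not match the schema."""
    errors = []
    for field, expected in schema.items():
        value = record.get(field)
        if expected == "date":
            ok = is_iso_date(value)
        else:
            # bool is a subclass of int in Python, so exclude it for int fields.
            ok = isinstance(value, expected) and not (
                expected is int and isinstance(value, bool)
            )
        if not ok:
            errors.append(field)
    return errors
```

Returning the list of failing fields, rather than raising on the first one, lets a form or pipeline report every problem in a record at once.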

As we approach 2026, the importance of type validation will continue to grow. Checking types at input gives users immediate feedback and minimizes entry errors. Companies like Tide have demonstrated the effectiveness of strict validation, transforming their management processes and achieving compliance with regulatory standards. By prioritizing type validation, institutions can reduce errors, streamline processes, and foster a culture of accountability.

With Decube's advanced data quality monitoring features, including preset field monitors that support 12 test types such as null%, regex_match, and cardinality, machine learning-powered tests that automatically detect quality thresholds, and smart alerts that reduce notification overload, organizations can significantly improve their governance and observability.

Range Validation: Verifying Data Within Specified Limits

Range checks are essential for ensuring that numerical values adhere to predefined limits, thereby safeguarding accuracy. For example, an age field should only accept values between 0 and 120; any entry outside this range must be flagged as invalid. This verification approach is crucial in preventing incorrect information from infiltrating systems, which can lead to flawed analyses and misguided decisions.
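
The age example can be expressed as a small Python check. This is a sketch under simple assumptions: the function name `in_range` is illustrative, and non-numeric input is treated as invalid rather than coerced.

```python
def in_range(value, low, high):
    """Return True if value is a real number with low <= value <= high."""
    # Reject non-numeric input (and bool, which Python treats as an int).
    if isinstance(value, bool) or not isinstance(value, (int, float)):
        return False
    # NaN fails every comparison, so it is rejected here as well.
    return low <= value <= high


def validate_age(value):
    """Apply the 0 to 120 limit used in the example above."""
    return in_range(value, 0, 120)
```

Keeping the bounds as parameters of `in_range` means the same helper can serve other numeric fields with different limits.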

By 2026, validation will be recognized as a vital first-line defense rather than merely a cleanup activity, and organizations increasingly acknowledge the role of range checks in proactive information management. Efficient validation should occur at the point of entry to avert mistakes, using clear thresholds for input, dropdown menus for selection, and real-time checks that promptly notify users of incorrect submissions.

Successful organizations, such as financial institutions and healthcare providers, conduct routine verification checks with anomaly detection alerts to prevent information degradation, ensuring that only accurate and trustworthy information is captured. With Decube's integrated platform, which includes features like lineage tracking, organizations can ensure that only accurate and trustworthy information is captured throughout the information lifecycle. By adopting robust range checks supported by Decube, they not only bolster the integrity of their datasets but also improve operational efficiency and decision-making capabilities. As information precision becomes a cornerstone of business success, the importance of range verification will continue to grow. To further enhance your information verification efforts, consider incorporating real-time checks within your entry processes.

Format Validation: Confirming Data Structure Compliance

Format checks ensure that entries conform to specified patterns or structures, which is essential for keeping data consistent. For instance, a phone number field might require a specific format, such as (XXX) XXX-XXXX. Adhering to these defined formats prevents inconsistencies and errors during information processing, which can result in significant issues in analytics. Organizations implementing format validation not only enhance the reliability of their datasets but also ensure that all information is systematically organized for effective analysis.

In 2026, the importance of format validation is underscored by its role in facilitating accurate information integration and reporting, as well as compliance with regulatory standards. Various industries, including financial services, have successfully adopted format validation to standardize entries, thereby reducing errors. By addressing common challenges, organizations can refine their validation practices.
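
For the (XXX) XXX-XXXX example, a format check is typically a regular expression matched against the whole value. The sketch below assumes that exact layout, including the single space after the closing parenthesis; the names are illustrative.

```python
import re

# Matches exactly "(XXX) XXX-XXXX" where X is an ASCII digit.
PHONE_PATTERN = re.compile(r"\([0-9]{3}\) [0-9]{3}-[0-9]{4}")


def is_valid_phone(value):
    """Return True if value is a string in the (XXX) XXX-XXXX layout."""
    # fullmatch requires the entire string to fit the pattern, so extra
    # leading or trailing characters are rejected.
    return isinstance(value, str) and PHONE_PATTERN.fullmatch(value) is not None
```

Using `fullmatch` instead of `search` is the important design choice here: a value such as "call (555) 123-4567 now" contains a valid number but is not itself in the required format.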

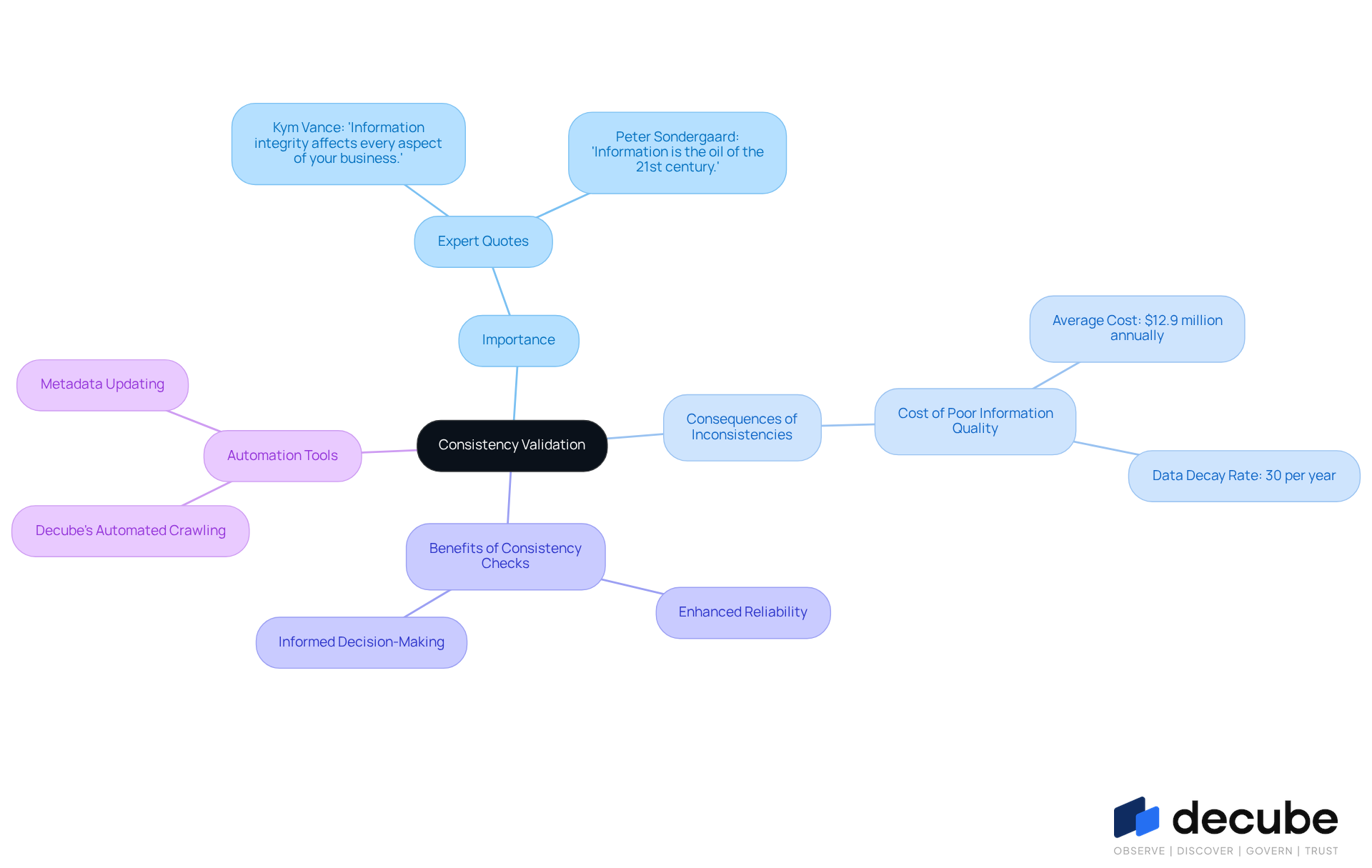

Consistency Validation: Maintaining Uniformity Across Data Sets

Consistency validation is essential for maintaining uniformity across various datasets. For example, if one dataset records a customer's name as 'John Doe' while another lists it as 'Doe, John', such inconsistencies can lead to confusion and reporting errors. Implementing consistency checks allows organizations to identify and correct these discrepancies, ensuring that all entries are aligned. This practice not only enhances the reliability of information but also supports informed decision-making.

Experts emphasize that maintaining consistency in information entries is crucial. Prioritizing consistency validation can significantly improve information integrity, leading to better operational outcomes and increased confidence in data-driven decisions.

Furthermore, organizations that regularly perform consistency checks can keep their data accurate and trustworthy. With Decube's Automated Crawling feature, linked sources are refreshed automatically, so information stays consistent and current without manual intervention. This not only improves information observability but also establishes secure access control, facilitating designated approval processes for who can view or edit content, thereby reinforcing robust governance.
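
One way to detect the 'John Doe' versus 'Doe, John' discrepancy is to normalize both forms before comparing. The sketch below is illustrative only: it assumes each dataset is a mapping from a shared customer ID to a name, and it handles just the two layouts mentioned above.

```python
def normalize_name(name):
    """Reduce 'Doe, John' and 'John  Doe' to the same comparable form."""
    name = " ".join(name.split())  # collapse repeated whitespace
    if "," in name:
        last, first = (part.strip() for part in name.split(",", 1))
        name = f"{first} {last}"
    return name.casefold()


def find_name_mismatches(left, right):
    """Return IDs present in both datasets whose names differ after normalization."""
    shared_ids = left.keys() & right.keys()
    return sorted(
        cid for cid in shared_ids
        if normalize_name(left[cid]) != normalize_name(right[cid])
    )
```

Only genuine disagreements are reported; records that differ purely in layout are treated as consistent.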

Uniqueness Validation: Preventing Duplicate Entries

Uniqueness validation is crucial for ensuring that each entry in a dataset remains distinct, effectively preventing the creation of duplicate records. For instance, in a customer database, it is imperative that every email address is unique to uphold information integrity. Implementing uniqueness checks is essential, as it guarantees that analyses are based on accurate and non-repetitive information. This aspect is particularly vital for marketing initiatives, where duplicate records can result in miscommunication and operational inefficiencies.

Organizations that prioritize uniqueness verification have reported significant improvements. Some have even experienced a 66% increase in revenue attributed to clean, enriched data. Duplicates can distort activity metrics and complicate reporting, ultimately undermining decision-making processes. As highlighted by the Prospeo Team, "Nothing kills credibility faster than two reps emailing the same buyer on the same day," underscoring the necessity of preventing duplicates.
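
A uniqueness check on email addresses can be sketched with a counter. The record layout (a list of dicts with an `email` key) is an assumption for illustration, as is the choice to compare addresses case-insensitively after trimming whitespace.

```python
from collections import Counter


def find_duplicate_emails(records):
    """Return the normalized email addresses that occur more than once."""
    counts = Counter(record["email"].strip().lower() for record in records)
    return sorted(email for email, n in counts.items() if n > 1)
```

Normalizing before counting matters: "Ada@Example.com" and "ada@example.com " would otherwise slip through as two distinct customers.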

Furthermore, individuals such as Piyush P. have praised Decube's Automated Column-Level lineage for enhancing cataloging and observability. This feature allows business users to quickly and dashboards. The seamless integration and intuitive monitoring provided by Decube emphasize the need for robust uniqueness assessment mechanisms, ensuring reliable and effective business strategies.

Presence Validation: Ensuring Required Data Fields Are Filled

Presence validation ensures that all required fields in a dataset are completed prior to submission. For instance, when a form requires users to provide their name and email address, presence checks guarantee that these fields are not left empty. This type of validation is vital for maintaining completeness and minimizing errors that may arise from missing information. As Veda Bawo emphasizes, data quality is essential for effective tools, underscoring the necessity of completing all required fields to enhance information integrity.

Organizations that have integrated presence checks into their pipelines have improved the robustness of their datasets, making them more reliable for analysis. In 2026, the importance of presence validation is underscored by the growing demand for accurate information collection methods, as companies increasingly rely on data to support informed decision-making. By prioritizing presence verification, organizations can ensure their information pipelines are not only efficient but also capable of facilitating strategic insights.

Utilizing Decube's data quality monitoring features, including machine learning-powered tests that automatically detect quality thresholds and intelligent alerts that mitigate notification overload, organizations can enhance their presence verification processes. This integration ensures that all necessary fields are effectively monitored, contributing to overall governance and observability.
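
The name-and-email example translates into a short presence check. In this sketch the function name and default field list are illustrative, and a value counts as missing when it is absent, `None`, or a string containing only whitespace.

```python
def missing_fields(record, required=("name", "email")):
    """Return the required fields that are absent or empty in record."""
    missing = []
    for field in required:
        value = record.get(field)
        # Treat None and blank strings as "not filled in".
        if value is None or (isinstance(value, str) and not value.strip()):
            missing.append(field)
    return missing
```

Note that the check deliberately does not treat `0` or `False` as missing, since those can be legitimate values for other required fields.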

Code Validation: Verifying Data Against Defined Rules

Code validation ensures that entries adhere to established rules or business logic. For example, when a field requires a specific product code, code validation guarantees that only valid codes are recognized, thereby maintaining the integrity of information. This type of validation ensures that all entries are accurate and usable, which directly influences the reliability of datasets. Organizations that implement robust code validation practices can significantly improve their compliance with operational standards.

In 2026, the emphasis on code validation will become even more pronounced, as businesses increasingly recognize its role in preventing costly errors. By validating information against predefined guidelines, companies can mitigate risks associated with data entry errors, whose rates can range from 1% to 4% in manual processes. With Decube's unified platform for observability and governance, which includes a lineage capability that illustrates the complete flow of data, organizations can effectively monitor quality. This ensures that their information remains accurate, consistent, and ready for decision-making, ultimately enhancing operational efficiency.
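
In its simplest form, a code check is a membership test against a reference list. The product codes below are invented for illustration; in practice the set would be loaded from a reference table or master data source.

```python
# Hypothetical reference list of recognized product codes.
VALID_PRODUCT_CODES = frozenset({"SKU-1001", "SKU-1002", "SKU-2001"})


def invalid_codes(codes, valid=VALID_PRODUCT_CODES):
    """Return the submitted codes that are not in the reference list, in input order."""
    return [code for code in codes if code not in valid]
```

A set lookup is exact by design, so a code that differs only in case ("sku-1001") is flagged rather than silently accepted.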

Integrity Validation: Ensuring Data Accuracy and Reliability

Integrity validation ensures that information is accurate and reliable by confirming the relationships between information elements. For instance, when a dataset includes a foreign key that points to another table, integrity checks confirm that the referenced information exists and is correct. This validation is crucial for maintaining referential integrity and preventing errors from propagating through information pipelines. Organizations that implement integrity checks can significantly improve the reliability of their datasets, making them suitable for analysis.

In 2026, the emphasis on integrity validation will be vital, as organizations recognize that poor data quality can lead to losses averaging between $9.7 million and $15 million annually. Companies that prioritize integrity assessments within their information pipelines are likely to see fewer project failures, a rate that can increase by as much as 60% due to quality issues. Notably, organizations that address data quality first demonstrate 2.5 times greater transformation success rates, underscoring the importance of integrity validation in achieving trustworthy analytics.

Furthermore, Decube's automated crawling feature streamlines this process by ensuring that metadata is updated automatically, facilitating seamless information observability and governance. With defined approval processes, Decube also provides secure access control, ensuring that only authorized individuals can view or modify critical information, thereby further supporting the integrity assurance process.
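
A foreign key check can be sketched as a query for "orphan" rows whose reference has no match in the parent table. The example uses Python's built-in `sqlite3` module with hypothetical `customers` and `orders` tables; it illustrates the technique and is not tied to any particular platform.

```python
import sqlite3


def find_orphan_orders(conn):
    """Return IDs of orders whose customer_id has no matching customers row."""
    rows = conn.execute(
        """
        SELECT o.id
        FROM orders AS o
        LEFT JOIN customers AS c ON c.id = o.customer_id
        WHERE c.id IS NULL      -- no parent row found (also true for NULL customer_id)
        ORDER BY o.id
        """
    ).fetchall()
    return [row[0] for row in rows]


def build_sample_db():
    """Create an in-memory database with one deliberately broken reference."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT);
        CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER);
        INSERT INTO customers VALUES (1, 'Ada'), (2, 'Grace');
        INSERT INTO orders VALUES (10, 1), (11, 2), (12, 99);
        """
    )
    return conn
```

Running the query as an audit is useful for data that arrives without database-level constraints, for example files loaded into a staging table.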

Business Rule Validation: Aligning Data with Organizational Standards

Business rule validation ensures that information entries adhere to organizational standards and business logic. For instance, if a company has a policy that discounts apply only to specific products, business rule validation guarantees that only eligible items receive discounts. This validation is essential for maintaining compliance and enhancing information usage within the organization.

With Decube's automated crawling feature, enterprises benefit from seamless metadata management, as it refreshes information sources automatically without the need for manual updates. This functionality improves information observability and governance, enabling secure access control where designated approval flows dictate who can view or edit details.

By implementing robust business rule checks alongside Decube's platform, enterprises can significantly enhance the reliability of their datasets, ensuring alignment with operational standards and fostering a culture of accountability.

As companies navigate the complexities of 2026, aligning information with established business rules becomes increasingly critical for operational success. Moreover, organizations in 2026 depend on structured, governed, high-quality data for operational efficiency, making business rule validation a vital component of effective data management.
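
The discount policy above can be written as a rule that inspects each order line. Everything specific in this sketch is assumed for illustration: the eligible categories, the field names (`sku`, `category`, `discount`), and the function name.

```python
# Hypothetical policy: only these product categories may be discounted.
DISCOUNT_ELIGIBLE = frozenset({"BOOKS", "TOYS"})


def discount_violations(order_lines, eligible=DISCOUNT_ELIGIBLE):
    """Return the SKUs of lines that carry a discount but are not eligible for one."""
    return [
        line["sku"]
        for line in order_lines
        if line["discount"] > 0 and line["category"] not in eligible
    ]
```

Unlike a type or range check, this rule depends on two fields together, which is what typically distinguishes business rule validation from single-field checks.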

Conclusion

Ensuring the integrity and quality of data is essential for organizations that seek to excel in an increasingly data-driven environment. This article highlights ten critical types of data validation that form the foundation of reliable data pipelines, underscoring the vital role these validations play in maintaining data accuracy, compliance, and operational efficiency.

Key points include the significance of automated tools like Decube, which enhance data governance through real-time monitoring and machine learning capabilities. Each validation type - from type and range checks to uniqueness and business rule validations - serves a crucial function in preventing errors that could undermine data integrity. The integration of these methods not only streamlines data processing but also facilitates informed decision-making, ultimately leading to improved business outcomes.

As organizations prepare for the complexities of 2026, prioritizing robust data validation practices will be imperative. Implementing these essential validation types will safeguard data integrity and empower businesses to leverage their data effectively, ensuring compliance with regulatory standards and alignment with strategic goals. By adopting advanced validation techniques, organizations can cultivate a culture of accountability and precision, driving their success in an evolving information landscape.

Frequently Asked Questions

What is Decube and what does it offer?

Decube is a suite of advanced information validation tools designed for modern data systems. It includes automated information governance, real-time monitoring, and machine learning-powered anomaly detection to ensure the efficient operation of information pipelines and address quality issues proactively.

How does Decube enhance information governance?

Decube enhances information governance through automated crawling capabilities, which improve metadata management by auto-refreshing linked sources. It also provides end-to-end information lineage visualization, allowing users to monitor the complete flow of information across components, thus strengthening information quality and governance.

What industry standards does Decube help organizations comply with?

Decube helps organizations comply with various industry standards, including SOC 2, ISO 27001, HIPAA, and GDPR.

Why is automated information governance important for enterprises?

Automated information governance is important because it improves operational efficiency and enables reliable analytics, making it a vital component for enterprises looking to leverage AI effectively.

What is data type validation and why is it important?

Data type validation ensures that the information entered conforms to required formats for each field, such as integers or dates. It is important for preventing errors that could disrupt processing and analysis, thereby enhancing data integrity.

How does Decube assist with data type validation?

Decube provides advanced data quality monitoring features, including preset field monitors that support various test types and machine learning-powered tests that detect quality thresholds, improving governance and observability.

What is range validation and its significance?

Range validation verifies that numerical values adhere to predefined limits, which is crucial for maintaining accuracy and preventing incorrect information from entering systems. It is recognized as a vital first-line defense in proactive information management.

How can organizations improve their range verification practices?

Organizations can improve range verification practices by implementing real-time checks at the point of entry, using clear thresholds for input, dropdown menus for selection, and anomaly detection alerts to prevent information degradation.

What benefits do organizations gain by using Decube for information verification?

By using Decube, organizations can bolster the integrity of their datasets, improve operational efficiency, enhance decision-making capabilities, and ensure that only accurate and trustworthy information is captured throughout the information lifecycle.

List of Sources

- Decube: Advanced Data Validation for Modern Data Stacks

- Data Priorities 2026: AI Adoption Exposes Gaps in Data Quality, Governance, and Literacy, Says Info-Tech Research Group in New Report (https://prnewswire.com/news-releases/data-priorities-2026-ai-adoption-exposes-gaps-in-data-quality-governance-and-literacy-says-info-tech-research-group-in-new-report-302672864.html)

- Data governance in 2026: Benefits, business alignment, and essential need - DataGalaxy (https://datagalaxy.com/en/blog/data-governance-in-2026-benefits-business-alignment-and-essential-need)

- Quotes Related to Data and Data Governance (https://blog.idatainc.com/quotes-related-to-data-and-data-governance)

- Why data quality is key to AI success in 2026 (https://strategy.com/software/blog/why-data-quality-is-key-to-ai-success-in-2026)

- Data Type Validation: Ensuring Correct Data Formats

- How to Win in 2026: Why Data Validation Matters More Than Ever (https://linkedin.com/pulse/how-win-2026-why-data-validation-matters-more-than-ever-mctit-xifzf)

- Data Validation: Meaning, Types, and Benefits (https://claravine.com/data-validation-meaning)

- Data Validation: Processes, Benefits & Types (2025) (https://atlan.com/what-is-data-validation)

- How to improve data quality: 10 best practices for 2026 (https://rudderstack.com/blog/how-to-improve-data-quality)

- Range Validation: Verifying Data Within Specified Limits

- How to Win in 2026: Why Data Validation Matters More Than Ever (https://linkedin.com/pulse/how-win-2026-why-data-validation-matters-more-than-ever-mctit-xifzf)

- File Data Preparation Efficiency Stats — 38 Critical Statistics Every Data Leader Should Know in 20 (https://integrate.io/blog/file-data-preparation-efficiency-stats)

- Statistical Methods for Error Detection and Data Cleaning:Ensuring Data Integrity (https://linkedin.com/pulse/statistical-methods-error-detection-data-integrity-kůlŝhřęŝŧhã--otlec)

- Data Accuracy: Definition, Importance, and Best Practices in 2026 - Persana AI (https://persana.ai/blogs/data-accuracy)

- 67 Data Entry Statistics For 2025 - DocuClipper (https://docuclipper.com/blog/data-entry-statistics)

- Format Validation: Confirming Data Structure Compliance

- Guide: How to improve data quality through validation and quality checks - data.org (https://data.org/guides/how-to-improve-data-quality-through-validation-and-quality-checks)

- Data Validation: Meaning, Types, and Benefits (https://claravine.com/data-validation-meaning)

- Consistency Validation: Maintaining Uniformity Across Data Sets

- 30 Data Hygiene Statistics for 2026 | PGM Solutions (https://porchgroupmedia.com/blog/data-hygiene-statistics)

- Data Transformation Challenge Statistics — 50 Statistics Every Technology Leader Should Know in 2026 (https://integrate.io/blog/data-transformation-challenge-statistics)

- Compelling Quotes About Data | 6sense (https://6sense.com/blog/compelling-quotes-about-data)

- Data Priorities 2026: AI Adoption Exposes Gaps in Data Quality, Governance, and Literacy, Says Info-Tech Research Group in New Report (https://prnewswire.com/news-releases/data-priorities-2026-ai-adoption-exposes-gaps-in-data-quality-governance-and-literacy-says-info-tech-research-group-in-new-report-302672864.html)

- Uniqueness Validation: Preventing Duplicate Entries

- 19 Inspirational Quotes About Data: Wisdom for a Data-Driven World (https://medium.com/@meghrajp008/19-inspirational-quotes-about-data-wisdom-for-a-data-driven-world-fcfbe44c496a)

- Common Data Quality Issues in 2026 (+ How to Fix Them) (https://prospeo.io/s/common-data-quality-issues)

- 19 Inspirational Quotes About Data | The Pipeline | ZoomInfo (https://pipeline.zoominfo.com/operations/19-inspirational-quotes-about-data)

- CRM Data Operations 2026: Statistics & Insights (https://digitaldiconsultants.com/crm-data-operations-statistics-2026)

- Presence Validation: Ensuring Required Data Fields Are Filled

- coresignal.com (https://coresignal.com/blog/data-science-quotes)

- Compelling Quotes About Data | 6sense (https://6sense.com/blog/compelling-quotes-about-data)

- 50 Quotes About Data & Analytics: More Than Just Numbers | RED² Digital (https://red2digital.com/en/quotes-about-data-analytics)

- A Continual Quest for Improving Data Quality | U.S. Bureau of Economic Analysis (BEA) (https://bea.gov/news/blog/2026-03-16/continual-quest-improving-data-quality)

- Code Validation: Verifying Data Against Defined Rules

- How to improve data quality: 10 best practices for 2026 (https://rudderstack.com/blog/how-to-improve-data-quality)

- The Wisdom of Code: 50 Quotes Every Developer Should Live By (https://tanmoykhanra.medium.com/the-wisdom-of-code-50-quotes-every-developer-should-live-by-62bc2a3955b8)

- CMS Delays the Start of the SNF Data Validation Process Timeline to January 2026 (https://ahcancal.org/News-and-Communications/Blog/Pages/CMS-Delays-the-Start-of-the-SNF-Data-Validation-Process-Timeline-to-January-2026-.aspx)

- Human Error in Data Entry: Statistics & Error Rates (2026) (https://parsli.co/blog/human-error-statistics)

- Integrity Validation: Ensuring Data Accuracy and Reliability

- 19 Inspirational Quotes About Data | The Pipeline | ZoomInfo (https://pipeline.zoominfo.com/operations/19-inspirational-quotes-about-data)

- 2026 AI Predictions: Why Data Integrity Matters More Than Ever | Precisely (https://precisely.com/data-integrity/2026-ai-predictions-why-data-integrity-matters-more-than-ever)

- Data Engineering Stats 2026: Latest Market Insights & Trends (https://data.folio3.com/blog/data-engineering-stats)

- Data Transformation Challenge Statistics — 50 Statistics Every Technology Leader Should Know in 2026 (https://integrate.io/blog/data-transformation-challenge-statistics)

- Business Rule Validation: Aligning Data with Organizational Standards

- Data governance in 2026: Benefits, business alignment, and essential need - DataGalaxy (https://datagalaxy.com/en/blog/data-governance-in-2026-benefits-business-alignment-and-essential-need)

- Regology (https://regology.com/blog/the-state-of-regulatory-compliance-in-2026-what-the-data-is-telling-us)

- Quotes Related to Data and Data Governance (https://blog.idatainc.com/quotes-related-to-data-and-data-governance)

- 130+ Compliance Statistics & Trends to Know for 2026 (https://secureframe.com/blog/compliance-statistics)

- 101 Compliance Statistics for 2026 (https://spacelift.io/blog/compliance-statistics)
