
10 Essential Database Schema Examples for Data Engineers

Explore 10 essential database schema examples to enhance data management and engineering practices.

Introduction

The landscape of data engineering is evolving rapidly, with database schema design emerging as a crucial element in ensuring data integrity, compliance, and operational efficiency. As organizations face increasingly complex data structures, understanding various schema types - such as star, snowflake, and NoSQL - becomes essential for engineers aiming to optimize their data management practices.

However, challenges persist: how can data engineers navigate the intricacies of schema design while avoiding common pitfalls that could jeopardize data quality? This article explores ten essential database schema examples, highlighting their unique benefits and offering insights into best practices that can empower engineers to enhance their data frameworks effectively.

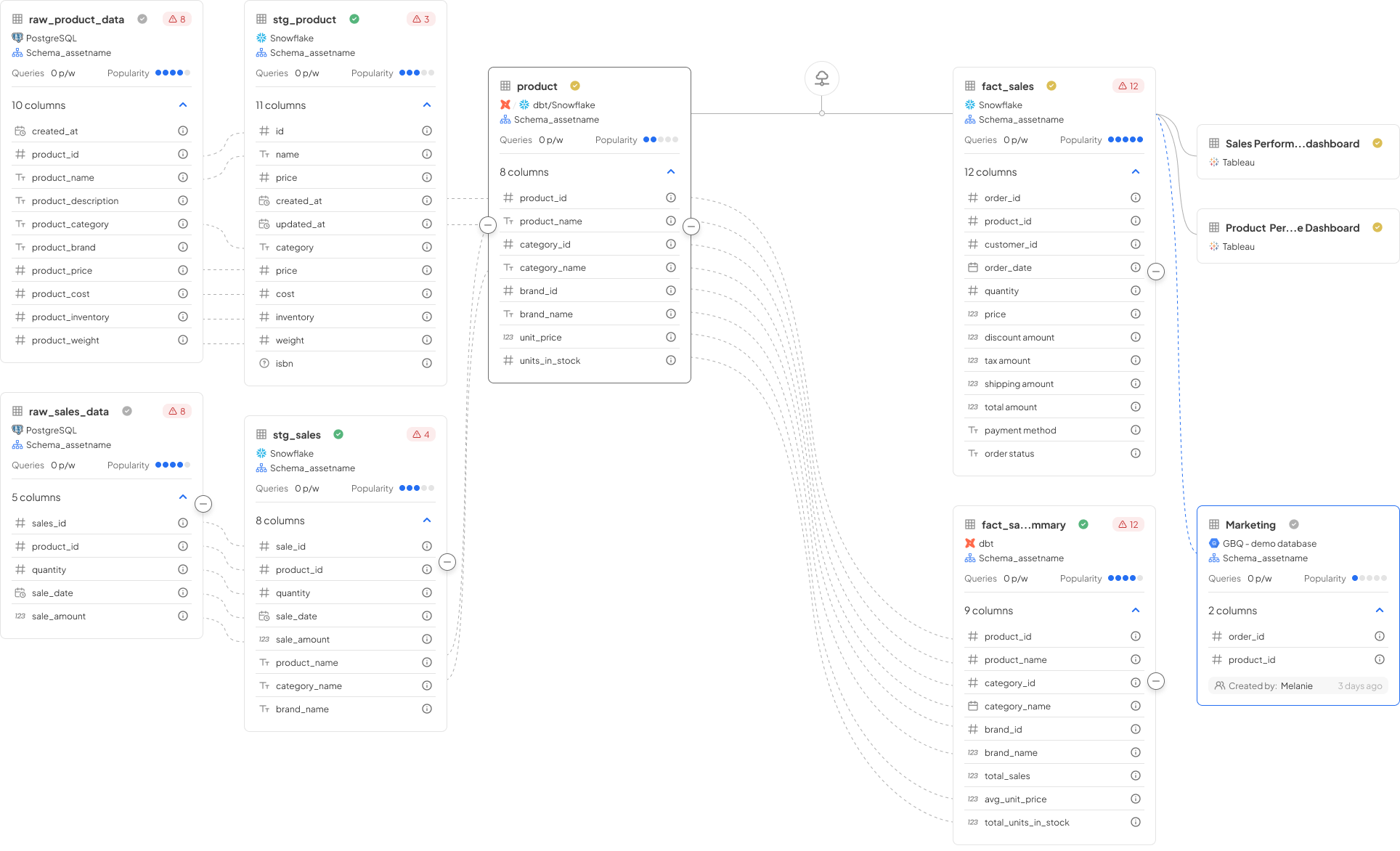

Decube: A Comprehensive Platform for Database Schema Management

Decube is a platform for managing database schemas that combines observability, governance, and machine learning capabilities. Key features, including automated data management, real-time monitoring, and comprehensive lineage mapping, help maintain data quality and support compliance with standards such as SOC 2 and GDPR. With Decube, engineers can keep database schemas well organized and aligned with organizational policies, which supports decision-making and operational efficiency.

Data observability has a significant effect on quality: organizations that implement strong observability practices report notable improvements in data integrity and reliability. As compliance requirements evolve, automating management processes becomes increasingly important, because it lets teams focus on strategic initiatives rather than manual oversight. Automated data governance can streamline database management, reduce errors, and foster a culture of accountability within analytics-driven enterprises.

Star Schema: Optimizing Data Warehousing with Simplified Structures

The star schema is a fundamental data modeling method in data warehousing, characterized by a central fact table linked to multiple dimension tables. Because every dimension is a single join away from the fact table, queries need fewer joins, which improves performance. For example, in a retail data warehouse, a star schema may feature a fact table for sales transactions connected to dimension tables for products, customers, and time. This configuration speeds up query execution and simplifies reporting for analysts.
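
The retail example can be sketched with Python's built-in `sqlite3` module. This is a minimal illustration, not a production warehouse design: the table names (`fact_sales`, `dim_product`, `dim_customer`, `dim_date`), the columns, and the sample rows are all assumptions made for the example.

```python
import sqlite3

# Hypothetical retail star schema: one fact table, three dimension tables.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE dim_product  (product_id  INTEGER PRIMARY KEY, name TEXT, category TEXT);
CREATE TABLE dim_customer (customer_id INTEGER PRIMARY KEY, name TEXT, region TEXT);
CREATE TABLE dim_date     (date_id     INTEGER PRIMARY KEY, iso_date TEXT, year INTEGER, month INTEGER);
CREATE TABLE fact_sales (
    sale_id     INTEGER PRIMARY KEY,
    product_id  INTEGER REFERENCES dim_product(product_id),
    customer_id INTEGER REFERENCES dim_customer(customer_id),
    date_id     INTEGER REFERENCES dim_date(date_id),
    quantity    INTEGER,
    amount      REAL
);
INSERT INTO dim_product  VALUES (1, 'Kettle', 'Kitchen'), (2, 'Desk lamp', 'Lighting');
INSERT INTO dim_customer VALUES (1, 'Ana', 'North'), (2, 'Ben', 'South');
INSERT INTO dim_date     VALUES (20240105, '2024-01-05', 2024, 1), (20240210, '2024-02-10', 2024, 2);
INSERT INTO fact_sales   VALUES
    (1, 1, 1, 20240105, 2, 60.0),
    (2, 2, 1, 20240105, 1, 25.0),
    (3, 1, 2, 20240210, 1, 30.0);
""")

# Each dimension is exactly one join away from the fact table.
revenue_by_category = conn.execute("""
    SELECT p.category, d.month, SUM(f.amount)
    FROM fact_sales f
    JOIN dim_product p ON p.product_id = f.product_id
    JOIN dim_date d    ON d.date_id = f.date_id
    GROUP BY p.category, d.month
    ORDER BY p.category, d.month
""").fetchall()
print(revenue_by_category)
```

The reporting query never joins one dimension to another, which is the property that keeps star schema queries short.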

Reported query performance improvements from adopting a star schema range from 25% to 50%, depending on the warehouse environment. Best practices for star schema design in 2026 emphasize keeping dimensions denormalized for faster queries and ensuring compatibility with BI tools, which is vital for analytics and reporting in retail contexts. Managed, scalable columnar warehouses such as Amazon Redshift can further improve the performance of star schema implementations on large data volumes.

By integrating the star schema into modern Lakehouse architectures, particularly in the Gold layer of the Medallion Architecture, organizations can strengthen their data management strategies and improve business outcomes.

Snowflake Schema: Enhancing Data Integrity through Normalization

The snowflake schema extends the star schema by normalizing dimension tables into multiple related tables, which reduces redundancy and improves data integrity. For example, in a financial services database, customer data can be organized into distinct tables for personal details, account types, and transaction history. Because each dimension attribute is stored in a single location, an update is made once rather than in every row that repeats the value, which improves overall accuracy.
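
A small sketch of the financial services example, again using `sqlite3`. The tables `dim_account_type`, `dim_customer`, and `fact_transaction` and their contents are hypothetical; the point is only that the account type label lives in one row, so renaming it is a single-row update.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- The customer dimension is normalized: account types get their own table.
CREATE TABLE dim_account_type (account_type_id INTEGER PRIMARY KEY, label TEXT);
CREATE TABLE dim_customer (
    customer_id     INTEGER PRIMARY KEY,
    full_name       TEXT,
    account_type_id INTEGER REFERENCES dim_account_type(account_type_id)
);
CREATE TABLE fact_transaction (
    transaction_id INTEGER PRIMARY KEY,
    customer_id    INTEGER REFERENCES dim_customer(customer_id),
    amount         REAL
);
INSERT INTO dim_account_type VALUES (1, 'Checking'), (2, 'Savings');
INSERT INTO dim_customer VALUES (1, 'Ana', 1), (2, 'Ben', 1), (3, 'Chen', 2);
INSERT INTO fact_transaction VALUES (1, 1, 100.0), (2, 2, 50.0), (3, 3, 75.0);
""")

# Renaming an account type touches one row, not every customer row.
conn.execute("UPDATE dim_account_type SET label = 'Current' WHERE account_type_id = 1")

# The cost of normalization: reaching the label now takes two joins.
totals_by_account_type = conn.execute("""
    SELECT t.label, SUM(f.amount)
    FROM fact_transaction f
    JOIN dim_customer c     ON c.customer_id = f.customer_id
    JOIN dim_account_type t ON t.account_type_id = c.account_type_id
    GROUP BY t.label
    ORDER BY t.label
""").fetchall()
print(totals_by_account_type)
```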

With Decube's unified data trust platform, organizations can use automated column-level lineage to visualize data flow across components, which makes integrity issues within a snowflake schema easier to trace. Decube's automated crawling function keeps metadata current, further supporting governance efforts. The trade-off of the snowflake schema is that the additional joins can make queries more complex; in exchange, the model is less redundant and easier to keep consistent.

Organizations that adopt the snowflake schema have reported improvements in data integrity, with some studies indicating that normalization can reduce anomalies by as much as 30%. In 2026, the trend toward normalization in database design continues to gain momentum, reflecting an increasing focus on data quality and governance, which Decube's observability and governance features are designed to support.

Hierarchical Schema: Structuring Data with Natural Relationships

A hierarchical schema organizes data in a tree-like structure, where each record has exactly one parent (except the root) and potentially several children. This model is particularly effective for data with clear hierarchical relationships, such as organizational structures or product categories. For example, a company may use a hierarchical schema to manage employee data, linking each employee record to a specific department. This structure simplifies hierarchical queries and keeps the organization of the data clear.
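
One common way to approximate a tree inside a relational store is an adjacency list, where each row points at its parent. The sketch below assumes a hypothetical `org_unit` table and uses a recursive common table expression to walk a subtree; it illustrates the parent/child shape rather than a dedicated hierarchical database engine.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE org_unit (
    unit_id   INTEGER PRIMARY KEY,
    name      TEXT NOT NULL,
    parent_id INTEGER REFERENCES org_unit(unit_id)  -- NULL marks the root
);
INSERT INTO org_unit VALUES
    (1, 'Company', NULL),
    (2, 'Engineering', 1),
    (3, 'Sales', 1),
    (4, 'Data Platform', 2);
""")

# Walk down from Engineering (unit_id = 2), tracking depth along the way.
subtree = conn.execute("""
    WITH RECURSIVE descendants(unit_id, name, depth) AS (
        SELECT unit_id, name, 0 FROM org_unit WHERE unit_id = 2
        UNION ALL
        SELECT o.unit_id, o.name, d.depth + 1
        FROM org_unit o
        JOIN descendants d ON o.parent_id = d.unit_id
    )
    SELECT name, depth FROM descendants ORDER BY depth, name
""").fetchall()
print(subtree)
```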

However, as organizations evolve, flexibility and scalability can become problems, especially when the organizational structure changes or new data sources are incorporated. In 2026, data engineers still face the challenge of maintaining effective hierarchical structures while keeping them adaptable to changing business environments.

Typical applications of hierarchical structures include:

- Managing employee information

- Classifying products in e-commerce

- Organizing geographic information

Despite these advantages, hierarchical schemas can complicate data management, particularly when updating or removing records: deleting or moving a parent record means its children must be re-parented or removed as well, which may require significant structural adjustments.

Relational Model: Building Connections Between Data Tables

The relational model organizes data into tables, referred to as relations, which are connected through foreign keys. This design supports complex queries and upholds referential integrity by enforcing the relationships between tables. For example, in a customer relationship management (CRM) system, distinct tables for customers, orders, and products can be established, with foreign keys linking orders to both customers and products. Such a framework simplifies data retrieval and keeps details consistent and precise throughout the system.
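
The CRM example can be sketched as follows. The table and column names are assumptions for illustration. Note that SQLite enforces foreign keys only after `PRAGMA foreign_keys = ON`; other database engines typically enforce them by default.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces foreign keys only when enabled
conn.executescript("""
CREATE TABLE customers (customer_id INTEGER PRIMARY KEY, name TEXT NOT NULL);
CREATE TABLE products  (product_id  INTEGER PRIMARY KEY, name TEXT NOT NULL);
CREATE TABLE orders (
    order_id    INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customers(customer_id),
    product_id  INTEGER NOT NULL REFERENCES products(product_id)
);
INSERT INTO customers VALUES (1, 'Ana');
INSERT INTO products  VALUES (10, 'Kettle');
INSERT INTO orders    VALUES (100, 1, 10);
""")

# An order that points at a non-existent customer is rejected by the database.
try:
    conn.execute("INSERT INTO orders VALUES (101, 999, 10)")
    rejected = False
except sqlite3.IntegrityError:
    rejected = True

order_count = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]
print(rejected, order_count)
```

The integrity check lives in the schema rather than in application code, so every client that writes to `orders` gets the same guarantee.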

Current best practices emphasize normalization to minimize redundancy and maintain integrity. Relational databases remain widely used for structured data management across industries, including finance and e-commerce. In this context, Decube's unified data trust platform supports governance by providing automated column-level lineage together with its Catalog and Observability modules. This lets organizations trace the complete journey of data across systems, improving quality and compliance while fostering collaboration between business and technical teams.

Flat Model: Simplifying Data Structures for Specific Use Cases

The flat model organizes information into a single table, where each record stands independently and is readily accessible. This structure proves particularly beneficial for small datasets or applications that do not necessitate complex relationships. For example, a flat file system can efficiently store a list of contacts, with each contact's information represented in a single row. This simplicity allows for quick implementation and management.
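
As a minimal sketch of the contact list example, the snippet below reads a flat file with Python's `csv` module. The file contents and column names are made up for illustration, and an in-memory `StringIO` stands in for a file on disk.

```python
import csv
import io

# Stand-in for a contacts.csv file: one table, one row per contact.
flat_file = io.StringIO(
    "name,email,phone\n"
    "Ana,ana@example.com,555-0100\n"
    "Ben,ben@example.com,555-0101\n"
)

contacts = list(csv.DictReader(flat_file))

# With no index or relationships, a lookup is a linear scan over every row.
ben = next(contact for contact in contacts if contact["name"] == "Ben")
print(ben["email"])
```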

However, as data volume increases, the flat model becomes inefficient: with no relationships between records, repeated values must be duplicated, and lookups scan the whole file unless an index is built separately. These limitations are most apparent for larger collections that require intricate relationships and robust querying capabilities. Even so, flat files remain widely used in contact management systems, where their straightforward design meets the needs of smaller applications without the complexity of more advanced database structures.

Network Model: Navigating Complex Data Relationships

The network model is particularly effective at representing complex relationships among data entities, especially many-to-many connections, because a record can have more than one parent. This structure is valuable where data points are interrelated, such as in social networks and supply chain management. For example, in a social media application, a network model can depict the interactions among users, posts, and comments, so that multiple users can engage with multiple posts.
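
The many-to-many shape can be illustrated in plain Python, without a dedicated network database. The user and post identifiers below are hypothetical; each pair is a link record meaning "this user commented on this post", and the two indexes let the relationship be traversed from either side.

```python
from collections import defaultdict

# Hypothetical link records: (user, post) pairs for "user commented on post".
comments = [("ana", "post-1"), ("ben", "post-1"), ("ana", "post-2")]

posts_by_user = defaultdict(set)
users_by_post = defaultdict(set)
for user, post in comments:
    posts_by_user[user].add(post)   # one user can reach many posts
    users_by_post[post].add(user)   # one post can reach many users

print(sorted(users_by_post["post-1"]))
print(sorted(posts_by_user["ana"]))
```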

While this model provides significant flexibility in data representation, it also makes query design and data management more complex. Robust governance practices are therefore essential to maintain data integrity and performance. As organizations adopt network models, understanding these trade-offs is crucial for working effectively with complex data environments.

NoSQL Schema Design: Embracing Flexibility in Data Management

NoSQL schema design emphasizes adaptability and scalability, making it particularly suitable for storing unstructured or semi-structured data. Unlike conventional relational systems, NoSQL solutions accommodate a variety of data models, including document, key-value, and graph structures. For example, a document store can save user profiles in JSON format, which allows swift schema adjustments as application requirements evolve. This adaptability is essential for applications that require rapid iterations, such as social media platforms that manage vast amounts of user-generated content.
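
The user profile example can be sketched with the standard `json` module. A plain dictionary stands in for a document collection here; it is not the API of any particular NoSQL product, and the field names are assumptions. The second document adds an `interests` field without any migration of the first.

```python
import json

# Stand-in for a document collection keyed by user id.
profiles = {}

profiles["u1"] = json.dumps({"name": "Ana", "email": "ana@example.com"})
# A later version of the application starts storing interests as well.
profiles["u2"] = json.dumps(
    {"name": "Ben", "email": "ben@example.com", "interests": ["cycling", "sql"]}
)

def interests_of(user_id):
    """Read a profile, tolerating documents written before the field existed."""
    document = json.loads(profiles[user_id])
    return document.get("interests", [])

print(interests_of("u1"), interests_of("u2"))
```

The flexibility moves responsibility to the reader of the data: every consumer has to handle documents that lack the newer field.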

However, this flexibility requires robust governance practices to ensure quality and compliance, especially as organizations expand their operations. In 2026, best practices for NoSQL systems stress designing for high availability and deciding explicitly where eventual consistency is acceptable. Built-in replication and sharding are used to scale out and to handle the changing shape and volume of unstructured data.

Documentation: Ensuring Clarity and Collaboration in Schema Design

Proper documentation of a database schema is crucial for ensuring clarity and fostering collaboration among data teams. This involves maintaining comprehensive data dictionaries, entity-relationship diagrams (ERDs), and detailed descriptions of tables and columns along with their interconnections. Tools such as SchemaCrawler, MySQL Workbench, and Lucidchart can significantly streamline the documentation process and make the schema's purpose and functionality easier for team members to understand.
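
A basic data dictionary can be generated from the database's own metadata. The sketch below is a simplified illustration for SQLite only, using its `sqlite_master` catalog and `PRAGMA table_info`; the `customers` table and the `data_dictionary` helper are hypothetical, and the dedicated tools named above produce far richer output.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE customers (
    customer_id INTEGER PRIMARY KEY,
    name        TEXT NOT NULL,
    email       TEXT
);
""")

def data_dictionary(connection):
    """Return one text line per table and one per column."""
    tables = [row[0] for row in connection.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name")]
    lines = []
    for table in tables:
        lines.append(f"Table: {table}")
        # PRAGMA table_info yields (cid, name, type, notnull, default, pk).
        for _cid, name, col_type, notnull, _default, pk in connection.execute(
                f'PRAGMA table_info("{table}")'):
            flags = [label for label, on in (("primary key", pk), ("not null", notnull)) if on]
            suffix = f" ({', '.join(flags)})" if flags else ""
            lines.append(f"  {name} {col_type}{suffix}")
    return lines

dictionary_lines = data_dictionary(conn)
print("\n".join(dictionary_lines))
```

Generating the dictionary from the live schema, rather than writing it by hand, keeps the documentation from drifting after structural changes.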

Efficient documentation not only aids in onboarding new team members but also supports adherence to governance standards by providing a clear and accessible record of structures and their intended uses. Advanced solutions like Decube can further enhance this process. Decube's preset field monitors allow teams to select which fields to observe, while its reconciliation features help identify discrepancies between datasets.

Moreover, Decube's machine learning-driven data quality assessments automatically establish thresholds for table tests, helping documentation reflect current conditions. Intelligent alerts keep teams informed of quality issues without overwhelming them with notifications, so they can focus on essential updates.

Regular updates to documentation are vital to accurately represent structural modifications, so that all stakeholders have access to precise and current information. By leveraging Decube's capabilities, organizations can improve their metadata management and compliance, ultimately enhancing data observability and governance.

Common Pitfalls: Avoiding Mistakes in Database Schema Design

Creating efficient database schemas requires attention to common pitfalls that lead to inefficiencies and complications. A primary error is neglecting normalization, which is crucial for maintaining data integrity. Overloading a single table with unrelated data produces redundancy and update anomalies; at the other extreme, over-normalizing adds unnecessary joins that impair query performance and complicate data management. Poorly normalized structures tend to result in data inconsistency and increased development costs.

Moreover, failing to establish proper relationships between tables can cause confusion and errors. A common instance is choosing an inappropriate primary key, such as an email address: when the address changes, every foreign key that references it must change too. Data engineers must also be wary of hardcoding values and of failing to document structural changes, as these practices obscure the design intent and lead to future errors.
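
One widely used alternative to an email primary key is a surrogate key: an identifier with no business meaning that never changes. The sketch below uses hypothetical `customers` and `orders` tables to show that, with a surrogate key, an email change is a single-row update and existing orders stay linked.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces foreign keys only when enabled
conn.executescript("""
CREATE TABLE customers (
    customer_id INTEGER PRIMARY KEY,   -- surrogate key, never changes
    email       TEXT NOT NULL UNIQUE   -- still unique, but free to change
);
CREATE TABLE orders (
    order_id    INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customers(customer_id)
);
INSERT INTO customers VALUES (1, 'ana@old.example');
INSERT INTO orders VALUES (100, 1), (101, 1);
""")

# The email changes in one place; no row in orders has to be touched.
conn.execute("UPDATE customers SET email = 'ana@new.example' WHERE customer_id = 1")

linked_orders = conn.execute("""
    SELECT COUNT(*)
    FROM orders o
    JOIN customers c ON c.customer_id = o.customer_id
    WHERE c.email = 'ana@new.example'
""").fetchone()[0]
print(linked_orders)
```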

Implementing best practices, such as conducting regular structure reviews and maintaining clear documentation, can greatly improve the effectiveness of database designs. By prioritizing normalization and ensuring that structures are flexible enough to adapt to changing business needs, engineers can develop robust frameworks that not only enhance performance but also adhere to governance standards.

Decube's automated crawling capability addresses these challenges by ensuring that metadata is refreshed automatically, allowing engineers to maintain accurate and up-to-date structures without manual intervention. This feature helps mitigate issues related to normalization and schema relationships by providing real-time insights into structures. Furthermore, a structured approach to data contracts promotes collaboration among stakeholders and enhances data quality, which is essential for navigating the complexities faced by data engineers in 2026.

Conclusion

In conclusion, the exploration of various database schema examples underscores the diverse strategies that data engineers can employ to enhance their data management practices. Each schema type - be it star, snowflake, hierarchical, or NoSQL - offers distinct advantages and challenges that can profoundly influence an organization's capacity to manage and leverage data effectively.

Key insights indicate that meticulous schema design is crucial for improving data integrity, query performance, and operational efficiency. For example, the star schema enables quicker data retrieval in warehousing environments, whereas the snowflake schema enhances data accuracy through normalization. Furthermore, the adaptability of NoSQL structures meets the dynamic requirements of contemporary applications, highlighting the necessity of flexibility in database management.

As organizations grapple with the intricacies of data governance and compliance, adopting best practices in schema design becomes essential. Utilizing platforms like Decube can facilitate these initiatives, offering powerful tools for automated management, observability, and documentation. Ultimately, prioritizing effective database schema design not only elevates data quality and accessibility but also empowers teams to make informed decisions that propel business success. By embracing these principles, organizations can ensure that data remains a vital asset in an increasingly data-driven landscape.

Frequently Asked Questions

What is Decube and what are its key features?

Decube is a comprehensive platform for managing database schemas, offering features such as automated data management, real-time monitoring, and comprehensive lineage mapping. It helps ensure data quality and compliance with standards like SOC 2 and GDPR.

How does Decube improve decision-making and operational efficiency?

By leveraging Decube, engineers can create well-organized database schemas that align with organizational policies, which enhances decision-making processes and operational efficiency.

What impact does data observability have on data quality?

Strong observability practices lead to significant improvements in data integrity and reliability, allowing organizations to maintain high-quality data.

Why is automation in data management becoming vital?

As compliance requirements evolve, automating management processes allows teams to focus on strategic initiatives rather than manual oversight, which is increasingly important for maintaining data governance.

What is a star schema and how does it optimize data warehousing?

A star schema is a data modeling method characterized by a central fact table linked to multiple dimension tables. It improves query performance by reducing the number of joins needed for data retrieval.

What are the performance improvements associated with using a star schema?

Reported query performance improvements from employing a star schema range from 25% to 50%, depending on the warehouse environment.

What best practices should be followed for star schema design in 2026?

Best practices include maintaining denormalized dimensions for faster queries and ensuring compatibility with BI tools to support analytics and reporting.

How does the snowflake schema differ from the star schema?

The snowflake schema enhances the star schema by normalizing dimension tables into multiple related tables, which reduces redundancy and improves data integrity.

What are the benefits of adopting a snowflake schema?

Organizations that adopt the snowflake schema have reported improvements in data integrity, with some studies indicating that normalization can reduce anomalies by as much as 30%.

How does Decube support the governance of the snowflake schema?

Decube's unified data trust platform provides automated column-level lineage visualization and efficient metadata management, which supports governance efforts in maintaining data integrity.

