
Understanding Data Product Management: Definition, Evolution, and Impact

Discover the essentials of data product management, its evolution, and its significant impact.

Introduction

Data product management has become an essential discipline in today’s information-driven landscape, where organizations increasingly depend on data to make informed decisions. This article explores the definition, evolution, and impact of data product management, emphasizing its critical role in ensuring data quality and strategic alignment within businesses.

As the demand for skilled data product managers rises, organizations face a range of challenges in implementing data product management practices effectively. This article also discusses how these practices can be used to improve operational efficiency and drive success.

Define Data Product Management

Data product management involves the strategic oversight of developing, deploying, and managing the lifecycle of data-centric offerings within an organization. This discipline ensures that data offerings not only meet organizational requirements but also uphold high quality standards and deliver actionable insights. A data product manager (DPM) plays a crucial role by serving as the link between technical teams and business stakeholders, facilitating clear communication and alignment on data initiatives. DPMs are tasked with defining requirements, prioritizing features, and ensuring that data offerings are valuable, user-friendly, and seamlessly integrated into organizational workflows.

As reliance on data for decision-making increases, the DPM role has become increasingly critical: 79% of executives recognize effective data product management as essential to company success. The market for data product management talent is also expected to grow significantly, reflecting rising demand for qualified professionals who can navigate the complexities of data-driven environments. Organizations that successfully implement data product management, supported by Decube's unified trust platform, are better positioned to drive informed decision-making and, ultimately, better business outcomes.

A key component of this process is the use of data catalogs, which serve as searchable inventories of data assets enriched with metadata. Catalogs enable teams to quickly locate, understand, and rely on the correct information, thereby enhancing observability and governance. Ethical considerations are also gaining importance, as DPMs are expected to ensure the responsible use of data and compliance with regulations. The job market is competitive: the average time to hire for PM roles is 45-60 days, indicating strong demand for qualified DPMs.
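To make the idea of a metadata-enriched, searchable inventory more concrete, here is a minimal Python sketch. The `CatalogEntry` fields, the `certified` flag, and the ranking rule are hypothetical choices made for illustration; this is not Decube's API or the data model of any particular catalog product.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set


@dataclass
class CatalogEntry:
    """One data asset plus the metadata that makes it discoverable (hypothetical schema)."""
    name: str
    owner: str
    description: str
    tags: Set[str] = field(default_factory=set)
    certified: bool = False  # hypothetical flag marking an asset as trusted


class DataCatalog:
    """A toy in-memory catalog: register assets, then search their metadata by keyword."""

    def __init__(self) -> None:
        self._entries: Dict[str, CatalogEntry] = {}

    def register(self, entry: CatalogEntry) -> None:
        # Re-registering a name overwrites the previous metadata.
        self._entries[entry.name] = entry

    def search(self, term: str, certified_only: bool = False) -> List[CatalogEntry]:
        term = term.lower()
        # Match the term as a substring of name/description, or exactly against a tag.
        hits = [
            e for e in self._entries.values()
            if term in e.name.lower()
            or term in e.description.lower()
            or term in {t.lower() for t in e.tags}
        ]
        if certified_only:
            hits = [e for e in hits if e.certified]
        # Certified assets sort first, then by name, so the trusted source surfaces first.
        return sorted(hits, key=lambda e: (not e.certified, e.name))


catalog = DataCatalog()
catalog.register(CatalogEntry("sales.orders_daily", "finance-team",
                              "Daily order totals", {"sales", "revenue"}, certified=True))
catalog.register(CatalogEntry("tmp.orders_copy", "analyst-x",
                              "Ad hoc copy of orders", {"sales"}))
print([e.name for e in catalog.search("orders")])
# prints ['sales.orders_daily', 'tmp.orders_copy']
```

Ranking certified assets first is one simple way to help users pick the trusted source when several assets match a query; production catalogs usually index far richer metadata, such as lineage and usage, than this sketch does.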

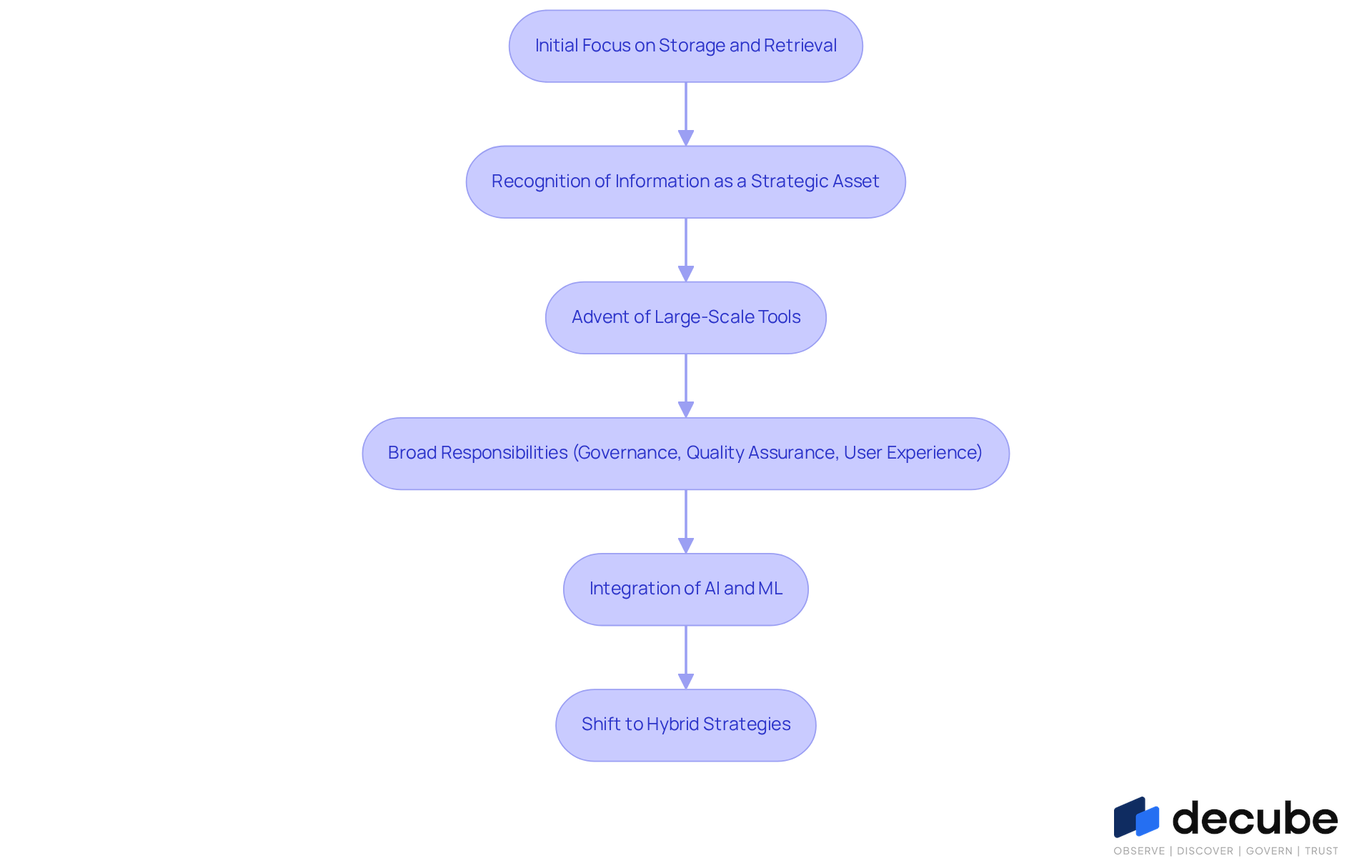

Explore the Evolution of Data Product Management

The evolution of data product management underscores the growing importance of insights in business decision-making. Initially, the focus was on data storage and retrieval. However, as organizations came to recognize data as a strategic asset, the role of data managers became essential. The early 2000s marked the advent of large-scale data and analytics tools, which required a more structured approach to managing data offerings.

Today, data product management encompasses a broad array of responsibilities, including governance, quality assurance, and user experience design. With Decube's platform, organizations can keep data current without manual updates, because information is refreshed automatically once sources are connected. This functionality also strengthens governance, allowing data managers to control access and modifications through a defined approval process.
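The approval process mentioned above can be sketched as a small workflow in which proposed changes to a data asset stay pending until an authorized approver signs off. This is a hypothetical Python model that assumes a fixed set of approvers and a rule that requesters cannot approve their own changes; it does not describe how Decube implements approvals.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional, Set


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class ChangeRequest:
    """A proposed modification to a data asset (hypothetical structure)."""
    asset: str
    requested_by: str
    description: str
    status: Status = Status.PENDING
    reviewed_by: Optional[str] = None


class ApprovalWorkflow:
    """Keeps change requests pending until an authorized approver reviews them."""

    def __init__(self, approvers: Set[str]) -> None:
        self.approvers = approvers
        self.requests: List[ChangeRequest] = []

    def submit(self, asset: str, requested_by: str, description: str) -> ChangeRequest:
        request = ChangeRequest(asset, requested_by, description)
        self.requests.append(request)
        return request

    def review(self, request: ChangeRequest, reviewer: str, approve: bool) -> None:
        # Only designated approvers may decide, and never on their own requests.
        if reviewer not in self.approvers:
            raise PermissionError(f"{reviewer} is not an approver")
        if reviewer == request.requested_by:
            raise PermissionError("requesters cannot approve their own changes")
        if request.status is not Status.PENDING:
            raise ValueError("request has already been reviewed")
        request.status = Status.APPROVED if approve else Status.REJECTED
        request.reviewed_by = reviewer

    def approved_changes(self, asset: str) -> List[ChangeRequest]:
        # Only approved requests are eligible to be applied to the asset.
        return [r for r in self.requests
                if r.asset == asset and r.status is Status.APPROVED]


workflow = ApprovalWorkflow(approvers={"data-owner"})
req = workflow.submit("sales.orders_daily", "analyst-x", "Add currency column")
workflow.review(req, "data-owner", approve=True)
print(req.status.value)  # prints approved
```

Separating submission from review keeps an audit trail (who asked, who approved) on every change, which is the core of most approval-based governance schemes.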

The integration of AI has significantly transformed this landscape, empowering managers to apply AI throughout product development. Notably, a survey found that 98% of participants have modified, or plan to modify, their team structures in response to AI, highlighting its profound impact on product roles.

Moreover, organizations are increasingly embracing hybrid, platform-oriented strategies for data management, moving away from traditional centralized systems. For instance, Roche has consolidated its CRM systems to improve customer analytics and facilitate AI adoption, illustrating the shift toward more sophisticated data practices.

As enterprises continue to refine their data strategies, a focus on data quality becomes critical, ensuring that data serves as a reliable foundation for strategic insights and operational efficiency.

Identify Key Responsibilities and Characteristics of Data Product Managers

Data product managers are pivotal in shaping the vision and strategy of data products. Their responsibilities include setting the direction of these offerings, collaborating with cross-functional teams, and ensuring data quality and compliance. Effective data product managers have strong analytical skills, which enable them to dissect complex datasets and derive actionable insights. Effective communication is equally vital, as they bridge the gap between technical teams and business stakeholders and keep everyone aligned with the product's objectives.

Data product managers need a deep understanding of both the commercial and the technical side of their products. They must balance technical requirements against business objectives while managing stakeholder expectations. Familiarity with data governance is crucial, as it enables them to implement best practices for data quality and compliance.

Statistics indicate that only 5% of managers possess coding skills; even so, a basic understanding of coding can significantly improve collaboration with development teams. Research also shows that managers spend 52% of their time on unplanned "fire-fighting", highlighting the need for tools that streamline administrative tasks so they can focus on strategic initiatives. Because data product management is changing rapidly, continuous education and networking are vital for staying competitive.

Understand the Importance and Impact of Data Product Management

Data product management is essential for organizations aiming to increase the value of their data resources. By focusing on the design and reliability of data offerings and on their alignment with organizational goals, data product managers enable informed decision-making. Effective oversight of data products can significantly improve data quality, and data-driven teams are 2.9 times more likely to achieve their objectives. This strategic approach not only improves operational efficiency but also increases agility in responding to market dynamics.

Organizations that prioritize data-driven product management, such as Schmitz Cargobull, which uses analytics for customer classification, gain a competitive edge by turning data into a strategic asset. Moreover, companies that implement a data product strategy can anticipate:

- Reduced delays

- Improved collaboration across departments

This ultimately fosters innovation and drives business success.

Conclusion

In conclusion, understanding data product management is essential for organizations seeking to fully leverage their information assets. This discipline not only governs the development and lifecycle of data-centric offerings but also ensures alignment with business objectives, delivering valuable insights. As the demand for effective data management escalates, the role of data product managers becomes increasingly critical in bridging the divide between technical capabilities and business requirements.

The article has examined key facets of data product management, tracing its evolution from basic information storage to a sophisticated strategic function that encompasses governance, quality assurance, and user experience design. The responsibilities of data product managers, marked by their analytical acumen and effective communication with diverse teams, underscore the significance of their role in ensuring compliance and fostering innovation. Furthermore, successful organizations illustrate how prioritizing data management can enhance operational efficiency and provide a competitive advantage in the marketplace.

In a context where data is a vital asset, adopting effective data product management practices is not merely optional but essential for success. Organizations are urged to invest in this discipline, cultivating their teams and utilizing advanced technologies to improve decision-making and operational agility. By doing so, they can convert their information into a strategic asset, ultimately nurturing a culture of innovation and responsiveness to market dynamics.

Frequently Asked Questions

What is data product management?

Data product management involves the strategic oversight of developing, deploying, and managing the lifecycle of data-centric offerings within an organization, ensuring they meet requirements, uphold quality standards, and deliver actionable insights.

What role does a data product manager (DPM) play?

A data product manager serves as a link between technical teams and business stakeholders, facilitating communication and alignment on information initiatives, outlining requirements, prioritizing features, and ensuring offerings are valuable and user-friendly.

Why is data product management becoming increasingly critical?

As reliance on information for decision-making increases, 79% of executives recognize effective data product management as essential to company success, leading to a growing market for qualified professionals in this field.

How do data catalogs contribute to data product management?

Data catalogs serve as searchable inventories of data assets enriched with metadata, allowing teams to quickly locate, understand, and rely on the correct information, thus enhancing observability and governance.

What are the ethical considerations in data product management?

Data product management professionals are expected to ensure responsible use of information and compliance with regulations, highlighting the importance of ethical oversight in information services.

What does the job market look like for data product managers?

The job market for data product managers is competitive: the average time to hire for PM roles is 45-60 days, indicating strong demand for qualified DPMs.

List of Sources

- Define Data Product Management

- Managing Data Products for Reliability and Efficiency | Acceldata (https://acceldata.io/blog/data-product-management)

- Product Management Statistics - 50+ Insights for Product Managers (https://uxcam.com/blog/product-management-statistics)

- The Data Product Manager: Skills, Responsibilities, and the Future of Data-Driven Value Creation (https://linkedin.com/pulse/data-product-manager-skills-responsibilities-future-bastos-de-moraes-afgdc)

- 50+ Product Management Statistics for 2026 [Updated Data] (https://ideaplan.io/blog/product-management-statistics-2026)

- 36 Product Management Statistics That Will Make You Rethink Your 2024 Strategy (https://haveignition.com/industry-guides/336-product-management-statistics-that-will-make-you-rethink-your-2024-strategy)

- Explore the Evolution of Data Product Management

- The Evolution of Data Products: Trends Every Product Manager Should Know (https://crosschannelrecruitment.com/the-evolution-of-data-products-trends-every-product-manager-should-know)

- cio.com (https://cio.com/article/4117094/data-management-trends-whats-in-whats-out.html)

- The New Reality of AI in Product Management | Productboard (https://productboard.com/blog/ai-in-product-management-report)

- Evolution of Data Management — Jeff Winter (https://jeffwinterinsights.com/insights/evolution-of-data-management)

- Big Data News | CIO Dive (https://ciodive.com/topic/big-data)

- Identify Key Responsibilities and Characteristics of Data Product Managers

- montecarlodata.com (https://montecarlodata.com/blog-what-good-data-product-managers-do-and-why-you-probably-need-one)

- 13 Surprising Stats About Product Management (And What They Actually Mean for You) (https://airfocus.com/blog/surprising-product-management-stats)

- Data Product Manager: Role, Skills & How to Master Them (https://productschool.com/blog/career-development/data-product-manager)

- datascience-pm.com (https://datascience-pm.com/data-product-manager)

- Data-driven PM: Key metrics for product managers (https://statsig.com/perspectives/data-driven-pm-key-metrics-for-product-managers)

- Understand the Importance and Impact of Data Product Management

- 36 Product Management Statistics That Will Make You Rethink Your 2024 Strategy (https://haveignition.com/industry-guides/336-product-management-statistics-that-will-make-you-rethink-your-2024-strategy)

- prnewswire.com (https://prnewswire.com/news-releases/data-as-a-product-approach-improves-value-delivery-for-organizations-says-info-tech-research-group-302517864.html)

- Data as a guide: New white paper shows how data-based product management makes companies fit for the future - it's OWL (https://its-owl.de/en/news-events/news/data-as-a-guide-new-white-paper-shows-how-data-based-product-management-makes-companies-fit-for-the-future)

- How a Data Product Strategy Impacts Business and Tech Teams (https://themoderndatacompany.com/blog/how-a-data-product-strategy-impacts-both-business-and-tech-stakeholders)

- The missing data link: Five practical lessons to scale your data products (https://mckinsey.com/capabilities/tech-and-ai/our-insights/the-missing-data-link-five-practical-lessons-to-scale-your-data-products)
