
Master Cloud Migration Data: Best Practices for Success

Learn best practices for migrating data to the cloud successfully and overcoming common challenges.

Introduction

Cloud migration is fundamentally transforming organizational operations, allowing businesses to leverage the advantages of scalable and flexible cloud-based environments. As companies pursue enhanced efficiency and collaboration, it becomes crucial to understand best practices for managing data during cloud migration. Despite the potential for improved performance and cost savings, organizations frequently encounter significant challenges that can hinder their migration efforts.

What strategies can be implemented to ensure a seamless transition while maintaining data integrity and compliance?

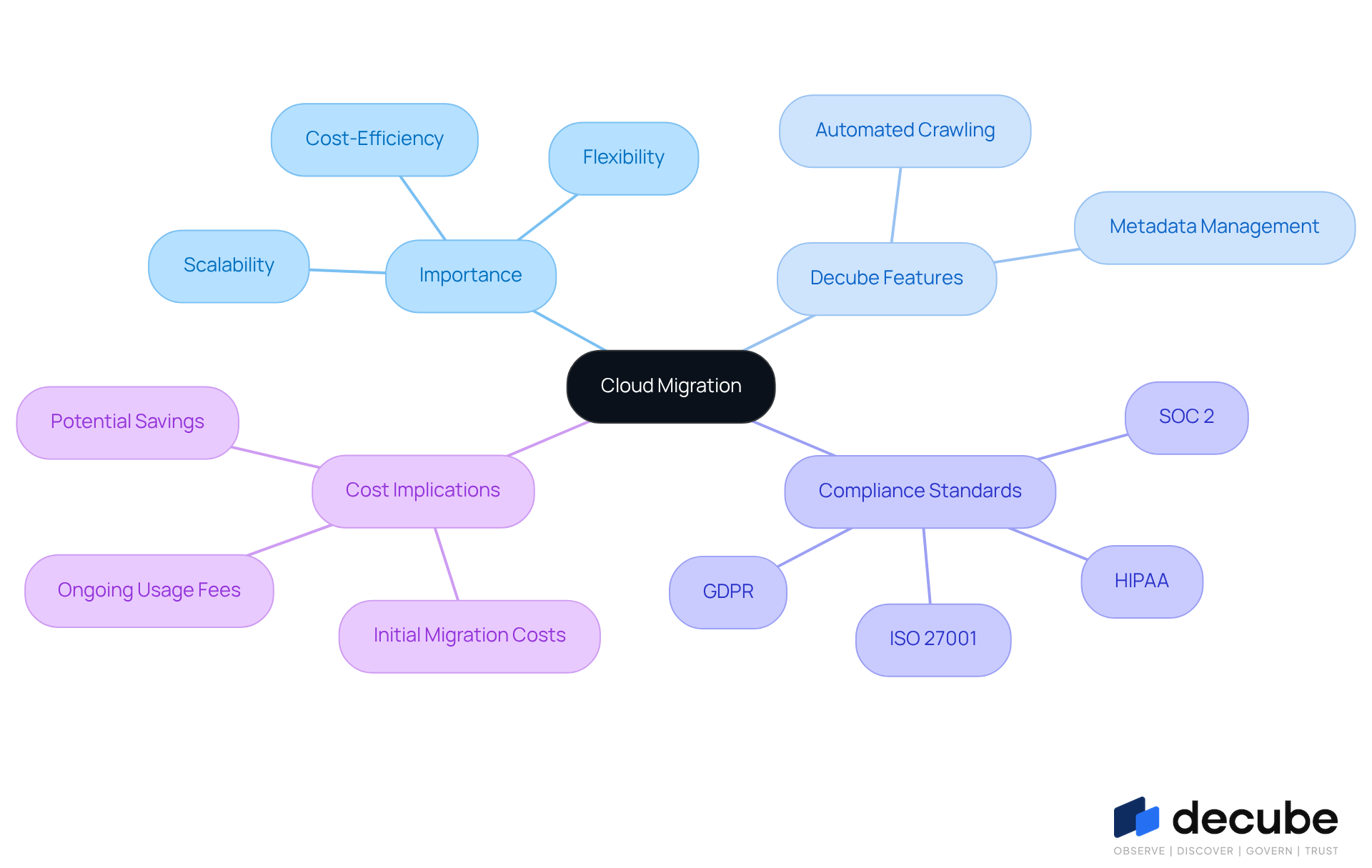

Define Cloud Migration and Its Importance

Cloud migration is the process of transferring data, applications, and other business elements from on-premises infrastructure to cloud-based environments. The transition matters for organizations that want the scalability, flexibility, and cost-efficiency of cloud computing services. As businesses rely more on data-driven decision-making, moving to the cloud makes information easier to access and share across teams.

Decube's automated crawling feature handles metadata management for you: once sources are connected, their metadata is refreshed continuously without manual intervention. This improves data observability and governance. Teams can also manage access permissions through a designated approval flow, which supports both operational efficiency and compliance.
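Decube's own crawler is not described in this article, so the following is only a generic sketch of the idea behind automated metadata crawling. On each run it takes a schema snapshot of a connected source and reports what changed since the previous run. It assumes a SQLite source, and `crawl_schema` and `diff_schema` are hypothetical helper names, not part of any Decube API.

```python
import sqlite3

# Hypothetical snapshot format: {table: [(column, declared_type), ...]}
Schema = dict[str, list[tuple[str, str]]]


def crawl_schema(conn: sqlite3.Connection) -> Schema:
    """Read table and column metadata from a SQLite connection."""
    tables = [
        row[0]
        for row in conn.execute(
            "SELECT name FROM sqlite_master "
            "WHERE type = 'table' AND name NOT LIKE 'sqlite_%' ORDER BY name"
        )
    ]
    schema: Schema = {}
    for table in tables:
        # PRAGMA table_info rows are (cid, name, type, notnull, dflt_value, pk).
        columns = conn.execute(f'PRAGMA table_info("{table}")').fetchall()
        schema[table] = [(col[1], col[2]) for col in columns]
    return schema


def diff_schema(old: Schema, new: Schema) -> dict[str, list[str]]:
    """Report tables added, removed, or changed between two crawls."""
    return {
        "added": sorted(set(new) - set(old)),
        "removed": sorted(set(old) - set(new)),
        "changed": sorted(t for t in set(old) & set(new) if old[t] != new[t]),
    }


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT)")
    first_crawl = crawl_schema(conn)

    # Simulate schema drift between two scheduled crawls.
    conn.execute("ALTER TABLE customers ADD COLUMN country TEXT")
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, total REAL)")
    second_crawl = crawl_schema(conn)

    print(diff_schema(first_crawl, second_crawl))
    # {'added': ['orders'], 'removed': [], 'changed': ['customers']}
```

In a real deployment the crawl would run on a schedule, and the diff would update a catalog or trigger an alert. Both of those pieces are outside this sketch.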

Handling data properly during the transition also supports adherence to evolving industry standards such as:

- SOC 2

- ISO 27001

- HIPAA

- GDPR

This helps organizations maintain data integrity and security. Note that implementing Decube's data trust platform may slightly increase cloud infrastructure costs, typically by around 2-3%, mainly because of the additional data scans performed by the automated crawling feature.
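To put the 2-3% figure in concrete terms, the sketch below converts it into a monthly dollar range. The $40,000 monthly bill is a hypothetical example, and `scan_overhead` is an illustrative helper, not part of any Decube API.

```python
def scan_overhead(monthly_cloud_cost: float,
                  low_pct: float = 2.0,
                  high_pct: float = 3.0) -> tuple[float, float]:
    """Return the (low, high) extra monthly cost for an overhead given in percent."""
    if monthly_cloud_cost < 0:
        raise ValueError("monthly_cloud_cost must be non-negative")
    return (monthly_cloud_cost * low_pct / 100,
            monthly_cloud_cost * high_pct / 100)


if __name__ == "__main__":
    # Hypothetical bill: $40,000 per month of cloud infrastructure.
    low, high = scan_overhead(40_000)
    print(f"Expected scan overhead: ${low:,.0f} to ${high:,.0f} per month")
    # Expected scan overhead: $800 to $1,200 per month
```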

By 2026, adopting cloud services is expected to be a fundamental business decision that affects performance, security, and scalability, rather than merely a technical upgrade.

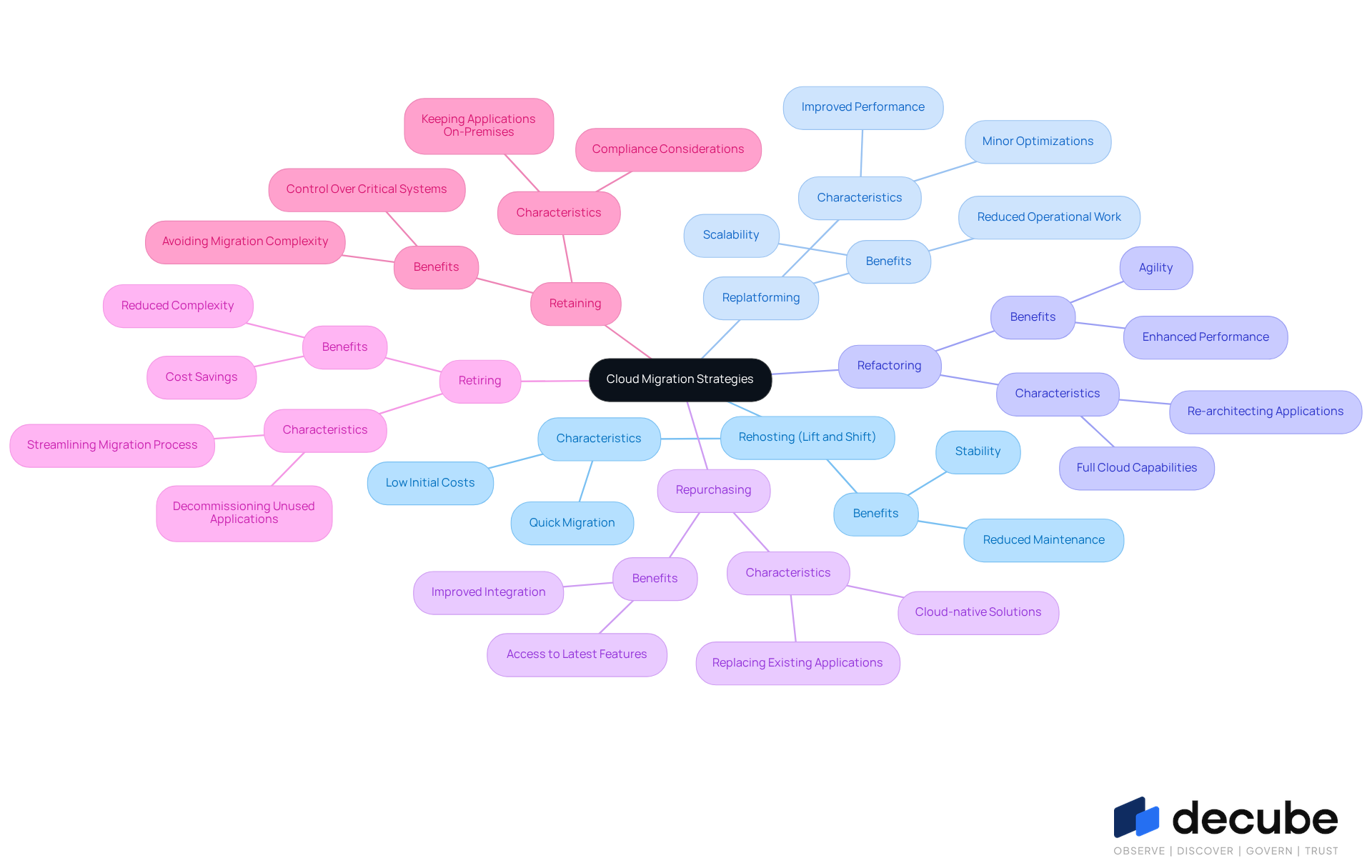

Explore Cloud Migration Strategies and Approaches

Organizations can adopt several cloud migration strategies, each with distinct characteristics and benefits:

- Rehosting (Lift and Shift): This method involves relocating applications without significant modifications, making it a swift option for transitioning to the cloud environment.

- Replatforming: This strategy allows for some optimization of applications during the transition, striking a balance between speed and efficiency.

- Refactoring: This approach entails re-architecting applications to fully leverage cloud capabilities, which can enhance performance but requires additional time and resources.

- Repurchasing: This strategy involves replacing existing applications with cloud-native solutions, often resulting in improved integration and functionality.

- Retiring: Identifying and decommissioning applications that are no longer necessary can streamline the migration process.

- Retaining: Certain applications may need to remain on-premises due to compliance or performance considerations.

Choosing the right strategy for each application depends on several factors, including business objectives, application dependencies, and compliance requirements.
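One way to make these factors explicit is a simple rule-based triage that assigns each application one of the six strategies listed above. The attributes, their order of precedence, and the sample portfolio are illustrative assumptions; a real assessment would also weigh cost, risk, and timelines.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Illustrative attributes an assessment might record per application."""
    name: str
    still_needed: bool = True               # False: candidate for retiring
    must_stay_on_prem: bool = False         # compliance or performance constraint
    saas_replacement_exists: bool = False   # a cloud-native product can replace it
    needs_cloud_native_features: bool = False
    minor_tweaks_acceptable: bool = False


def choose_strategy(w: Workload) -> str:
    """Return one of the six strategies; the first matching rule wins."""
    if not w.still_needed:
        return "Retiring"
    if w.must_stay_on_prem:
        return "Retaining"
    if w.saas_replacement_exists:
        return "Repurchasing"
    if w.needs_cloud_native_features:
        return "Refactoring"
    if w.minor_tweaks_acceptable:
        return "Replatforming"
    return "Rehosting"  # default: lift and shift


if __name__ == "__main__":
    portfolio = [  # hypothetical applications
        Workload("legacy-fax-gateway", still_needed=False),
        Workload("patient-records-db", must_stay_on_prem=True),
        Workload("in-house-crm", saas_replacement_exists=True),
        Workload("order-api", needs_cloud_native_features=True),
        Workload("reporting-db", minor_tweaks_acceptable=True),
        Workload("intranet-wiki"),
    ]
    for w in portfolio:
        print(f"{w.name}: {choose_strategy(w)}")
```

Ordering the rules so that retiring and retaining are checked first avoids spending migration effort on applications that should not move at all.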

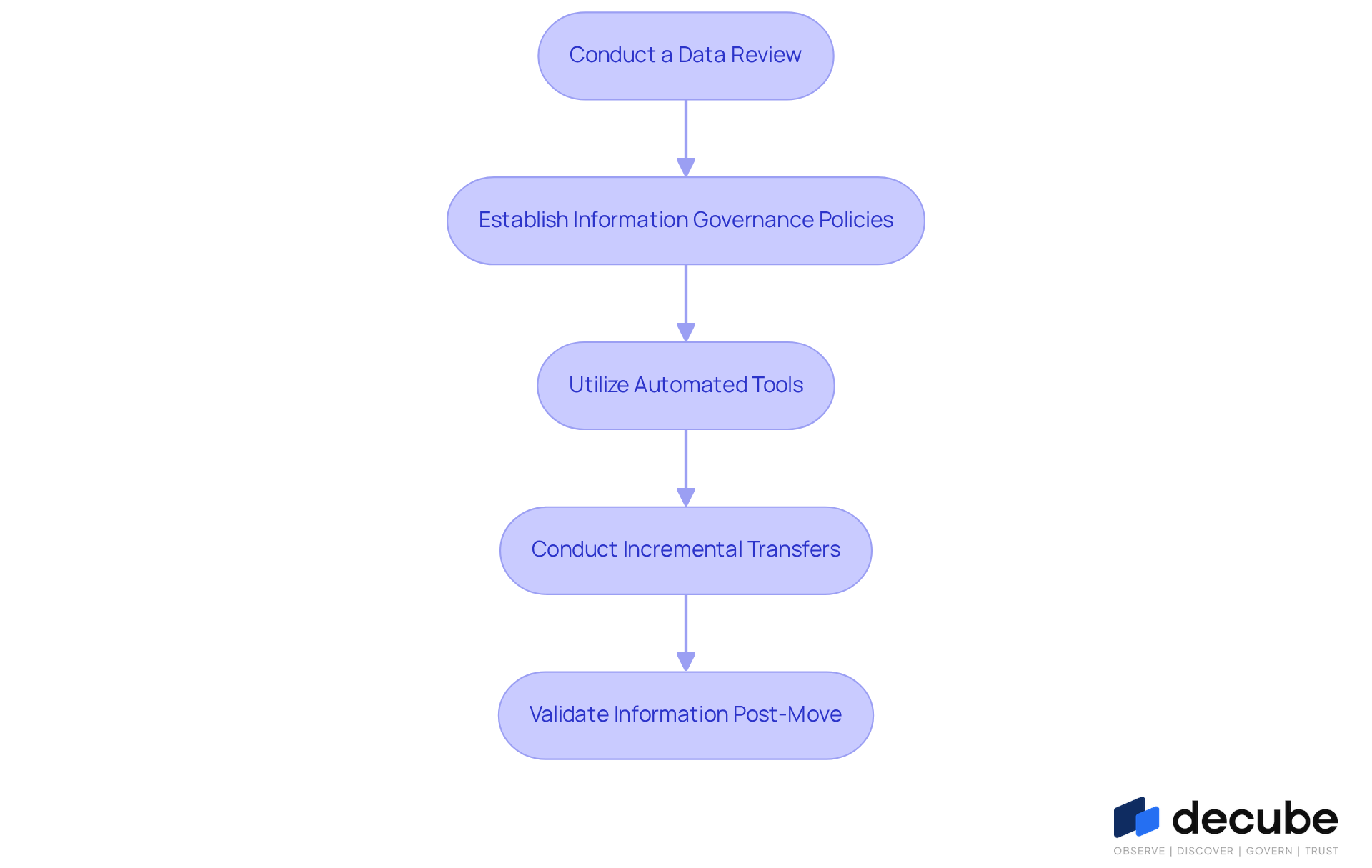

Implement Best Practices for Data Integrity in Migration

To ensure data integrity during cloud migration, organizations should adopt the following best practices:

- Conduct a Data Review: Before migration, evaluate the current condition of your data to identify inconsistencies or quality issues. ML-powered tests, which include 12 available test types such as null %, regex_match, and cardinality, can automate this review so that the quality of cloud migration data is monitored efficiently.

- Establish Data Governance Policies: Policies that define ownership, access controls, and compliance requirements are crucial for maintaining integrity. The platform's automated crawling feature supports governance by refreshing metadata automatically and regulating access, so that only authorized individuals can view or modify data.

- Monitor Transfers in Real Time: Use automated tools that track and verify cloud migration data in real time throughout the transfer. With intelligent notifications, the platform flags issues early, reducing the risk of quality problems.

- Conduct Incremental Transfers: Instead of moving all data at once, transfer it in increments to reduce risk and make troubleshooting easier. The platform integrates with existing data stacks, which simplifies quality management during the transfer.

- Validate Data After the Move: After the transfer, run comprehensive checks to confirm that data arrived intact and behaves as expected. Decube's data observability features provide visibility into pipelines, helping teams collaborate and keep data accurate and consistent.
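The three test types named in the data review practice (null %, regex match, and cardinality) are easy to illustrate with plain Python. This is a minimal rule-based sketch, not Decube's ML-powered implementation; the helper names, sample records, and email pattern are hypothetical.

```python
import re
from typing import Any

Row = dict[str, Any]


def null_pct(rows: list[Row], column: str) -> float:
    """Percentage of rows where the column is missing or None."""
    if not rows:
        return 0.0
    nulls = sum(1 for r in rows if r.get(column) is None)
    return 100.0 * nulls / len(rows)


def regex_match_pct(rows: list[Row], column: str, pattern: str) -> float:
    """Percentage of non-null values that fully match the pattern."""
    values = [r[column] for r in rows if r.get(column) is not None]
    if not values:
        return 100.0  # nothing to violate the pattern
    compiled = re.compile(pattern)
    matches = sum(1 for v in values if compiled.fullmatch(str(v)))
    return 100.0 * matches / len(values)


def cardinality(rows: list[Row], column: str) -> int:
    """Number of distinct non-null values in the column."""
    return len({r[column] for r in rows if r.get(column) is not None})


if __name__ == "__main__":
    rows = [  # hypothetical source records
        {"id": 1, "email": "a@example.com", "country": "SG"},
        {"id": 2, "email": "not-an-email", "country": "SG"},
        {"id": 3, "email": None, "country": "IN"},
        {"id": 4, "email": "d@example.com", "country": "US"},
    ]
    email_pattern = r"[^@\s]+@[^@\s]+\.[a-z]{2,}"
    print(null_pct(rows, "email"))                                   # 25.0
    print(round(regex_match_pct(rows, "email", email_pattern), 1))   # 66.7
    print(cardinality(rows, "country"))                              # 3
```

Running the same checks on the source before the move and on the target afterward gives a simple baseline for comparison.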
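The incremental-transfer and post-move validation practices described above can be sketched with two SQLite connections standing in for the on-premises source and the cloud target. This is a generic illustration, not how Decube or any migration service works internally. The function names are hypothetical, and the sketch assumes the table has an integer primary key `id` as its first column and that the target table already exists with an identical schema.

```python
import hashlib
import sqlite3


def batch_checksum(rows: list[tuple]) -> str:
    """Order-sensitive SHA-256 over the repr of each row."""
    h = hashlib.sha256()
    for row in rows:
        h.update(repr(row).encode("utf-8"))
    return h.hexdigest()


def migrate_incrementally(src: sqlite3.Connection, dst: sqlite3.Connection,
                          table: str, batch_size: int = 1000) -> int:
    """Copy `table` in id-ordered batches, verifying each batch after writing it.

    `table` is interpolated into SQL, so it must be a trusted identifier.
    """
    copied, last_id = 0, None
    while True:
        if last_id is None:
            batch = src.execute(
                f"SELECT * FROM {table} ORDER BY id LIMIT ?", (batch_size,)
            ).fetchall()
        else:  # keyset pagination: resume after the last copied id
            batch = src.execute(
                f"SELECT * FROM {table} WHERE id > ? ORDER BY id LIMIT ?",
                (last_id, batch_size),
            ).fetchall()
        if not batch:
            return copied
        placeholders = ", ".join(["?"] * len(batch[0]))
        with dst:  # commit the batch as one transaction, roll back on error
            dst.executemany(f"INSERT INTO {table} VALUES ({placeholders})", batch)
        first, last = batch[0][0], batch[-1][0]
        written = dst.execute(
            f"SELECT * FROM {table} WHERE id BETWEEN ? AND ? ORDER BY id",
            (first, last),
        ).fetchall()
        if batch_checksum(written) != batch_checksum(batch):
            raise RuntimeError(f"checksum mismatch for ids {first}..{last}")
        copied += len(batch)
        last_id = last


def validate_table(src: sqlite3.Connection, dst: sqlite3.Connection,
                   table: str) -> bool:
    """Post-move check: same row count and same checksum (loads both tables)."""
    query = f"SELECT * FROM {table} ORDER BY id"
    src_rows = src.execute(query).fetchall()
    dst_rows = dst.execute(query).fetchall()
    return (len(src_rows) == len(dst_rows)
            and batch_checksum(src_rows) == batch_checksum(dst_rows))


if __name__ == "__main__":
    src, dst = sqlite3.connect(":memory:"), sqlite3.connect(":memory:")
    for conn in (src, dst):
        conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT)")
    src.executemany("INSERT INTO customers VALUES (?, ?)",
                    [(i, f"user{i}@example.com") for i in range(1, 2501)])
    print(migrate_incrementally(src, dst, "customers", batch_size=1000))  # 2500
    print(validate_table(src, dst, "customers"))                          # True
```

Comparing `repr` output works here because both sides are SQLite. A migration between different engines would need a normalized serialization (types, encodings, timestamps) before hashing.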

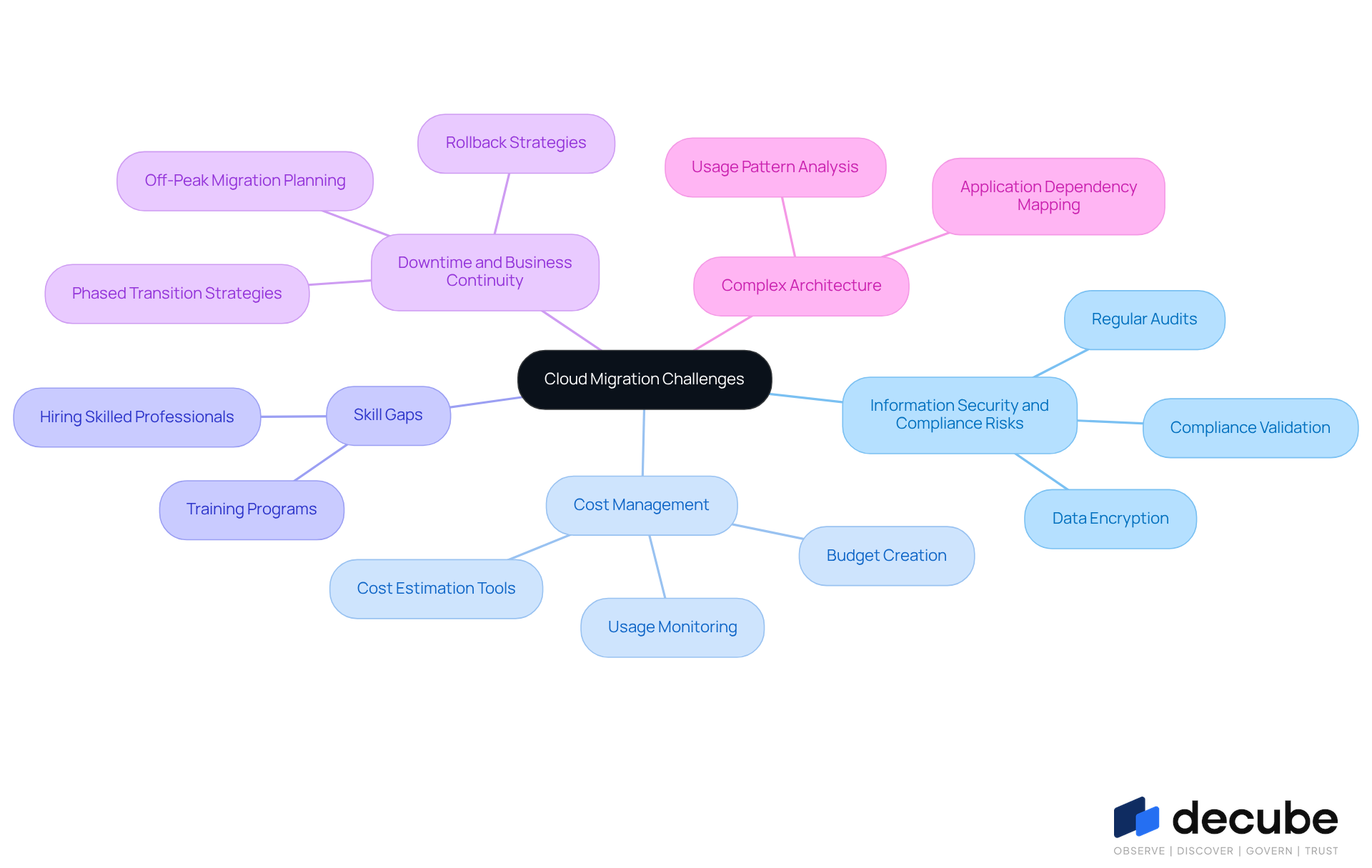

Identify and Overcome Common Cloud Migration Challenges

Organizations frequently encounter significant challenges during cloud migration, including:

- Data Security and Compliance Risks: Protecting sensitive data and meeting regulatory obligations is essential. The regulatory landscape has tightened as of 2026, so organizations need robust security measures such as data encryption and compliance validation, along with regular audits to reduce the risk of data exposure and breaches.

- Cost Management: Unexpected expenses can derail migration budgets. Industry leaders stress setting a clear budget and using cloud migration tools that include cost estimation and usage monitoring. This proactive approach helps organizations avoid cost overruns, which can exceed initial estimates by 10-15% because of factors such as over-provisioning and integration challenges.

- Skill Gaps: The complexity of cloud technologies often leaves organizations without enough in-house expertise, which slows the transition. Training programs and hiring skilled professionals can close this gap, so that teams are equipped to handle the intricacies of cloud environments.

- Downtime and Business Continuity: Minimizing downtime is critical to keeping operations running. Organizations should schedule cutovers during off-peak hours and prepare rollback plans to ensure business continuity. Phased migration strategies, as demonstrated by Donegal Group, allow modernization without significant disruption.

- Complex Architecture: Legacy systems can be difficult to integrate with cloud environments. A thorough assessment of application dependencies and usage patterns helps organizations identify necessary adjustments before moving cloud migration data and avoid performance issues during migration.
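A dependency assessment can feed directly into a phased plan. The sketch below uses Python's standard `graphlib` module (Python 3.9+) to group applications into migration waves, assuming that each application should move only after everything it depends on has moved. The dependency map and the `migration_waves` helper are hypothetical.

```python
from graphlib import TopologicalSorter


def migration_waves(depends_on: dict[str, set[str]]) -> list[list[str]]:
    """Group applications into waves; each wave depends only on earlier waves.

    `depends_on` maps an application to the applications it needs.
    graphlib raises CycleError (a ValueError subclass) on circular dependencies.
    """
    sorter = TopologicalSorter(depends_on)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # everything whose dependencies are done
        waves.append(ready)
        sorter.done(*ready)
    return waves


if __name__ == "__main__":
    deps = {  # hypothetical result of a dependency assessment
        "web-frontend": {"order-api", "auth-service"},
        "order-api": {"orders-db", "auth-service"},
        "auth-service": {"users-db"},
        "reporting": {"orders-db"},
    }
    for i, wave in enumerate(migration_waves(deps), start=1):
        print(f"Wave {i}: {', '.join(wave)}")
    # Wave 1: orders-db, users-db
    # Wave 2: auth-service, reporting
    # Wave 3: order-api
    # Wave 4: web-frontend
```

A cycle in the map is itself a useful finding: it marks components that may need to move together in the same cutover.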
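To keep the 10-15% overrun range from the cost-management point in view, a budget can be projected across that range and actual spend checked against the original estimate as the migration progresses. The dollar figures and helper names below are hypothetical.

```python
def projected_spend(estimate: float, low_pct: float = 10.0,
                    high_pct: float = 15.0) -> tuple[float, float]:
    """Total spend if costs overrun the estimate by low_pct to high_pct percent."""
    return (estimate + estimate * low_pct / 100,
            estimate + estimate * high_pct / 100)


def overrun_pct(estimate: float, actual: float) -> float:
    """Percent by which actual spend exceeds (positive) or undercuts the estimate."""
    if estimate <= 0:
        raise ValueError("estimate must be positive")
    return (actual - estimate) * 100 / estimate


if __name__ == "__main__":
    estimate = 200_000  # hypothetical migration budget in dollars
    low, high = projected_spend(estimate)
    print(f"Plan for ${low:,.0f} to ${high:,.0f}")                   # Plan for $220,000 to $230,000
    print(f"Overrun so far: {overrun_pct(estimate, 226_000):.1f}%")  # Overrun so far: 13.0%
```

Recomputing the overrun each billing period, rather than once at the end, means any drift shows up while there is still time to right-size resources.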

The platform has proven valuable in addressing these challenges. As Vikram Y., a DevOps expert, put it: 'This is a package of solutions for us.' He added, 'We were struggling to find one good tool that we can integrate with our existing information stack using MySQL. After implementing the new system, both our work and information quality have enhanced considerably. I highly recommend it!' This endorsement highlights the platform's role in improving data quality and integration for organizations managing cloud migration data. In addition, once sources are connected, Decube's automated crawling feature refreshes their metadata automatically, which further supports data management during the migration.

Conclusion

Embracing cloud migration transcends a mere technical upgrade; it represents a strategic decision that can significantly enhance an organization's efficiency, flexibility, and cost-effectiveness. Transitioning to cloud-based environments allows businesses to harness the power of data-driven insights, streamline operations, and foster collaboration among teams. The significance of adopting robust cloud migration practices cannot be overstated, as they establish the foundation for successful integration and management of cloud resources.

This article has highlighted key strategies and best practices for a smooth transition. From selecting the appropriate migration approach (whether rehosting, replatforming, or refactoring) to implementing stringent data governance and integrity measures, each step helps mitigate the risks of cloud migration. Addressing common challenges such as security and compliance, cost management, and skill gaps further underscores the need for a well-considered migration plan. Tools like Decube's automated crawling feature can streamline processes and improve data management during this critical transition.

Ultimately, the journey to the cloud is not solely about technology; it is about positioning organizations for future success. As businesses continue to evolve in an increasingly digital landscape, prioritizing effective cloud migration strategies becomes essential. Organizations are encouraged to take proactive steps, invest in training, and embrace innovative solutions to navigate the complexities of cloud migration. By doing so, they can unlock the full potential of their data, ensuring they remain competitive and responsive in a rapidly changing environment.

Frequently Asked Questions

What is cloud migration?

Cloud migration refers to the process of transferring information, applications, and other business elements from on-premises infrastructure to cloud-based environments.

Why is cloud migration important for organizations?

Cloud migration is important because it allows organizations to leverage scalability, flexibility, and cost-efficiency, enhances information accessibility, and improves collaboration across teams.

What features does Decube offer to assist with cloud migration?

Decube offers an automated crawling feature for metadata management that keeps metadata continuously updated without manual intervention, improving observability, governance, and operational efficiency.

How does Decube's platform enhance compliance during cloud migration?

Decube's platform helps manage access permissions through a designated approval flow and supports adherence to industry standards such as SOC 2, ISO 27001, HIPAA, and GDPR, maintaining data integrity and security.

What are the potential costs associated with implementing Decube's data trust platform?

Implementing Decube's data trust platform may slightly increase cloud infrastructure costs, typically by around 2-3%, because of the additional data scans performed by the automated crawling feature.

What is the future outlook for cloud services adoption by 2026?

By 2026, adopting cloud services is expected to be a fundamental business decision that affects performance, security, and scalability, rather than just a technical upgrade.

List of Sources

Define Cloud Migration and Its Importance

- Cloud Migration in 2026: The 3 Critical Questions Every Business Leader Must Answer (https://vc3.com/blog/cloud-migration-critical-questions-every-business-leader-must-answer)

- Why 2026 Is The Year Cloud Migration Accelerates With Confidence (https://forbes.com/councils/forbestechcouncil/2026/02/23/why-2026-is-the-year-cloud-migration-accelerates-with-confidence)

- The State of Cloud in 2026: From Adoption to Optimization (https://ippathways.com/the-state-of-cloud-in-2026-from-adoption-to-optimization)

- What Cloud Migration Looks Like for Businesses in 2026 (https://computersolutionseast.com/blog/cloud-solutions/cloud-migration-2026-guide)

Explore Cloud Migration Strategies and Approaches

- Why 2026 Is The Year Cloud Migration Accelerates With Confidence (https://forbes.com/councils/forbestechcouncil/2026/02/23/why-2026-is-the-year-cloud-migration-accelerates-with-confidence)

- 2026 Hybrid Cloud Migration Strategy: The Ultimate Guide (https://nextolive.com/blogs/2026-hybrid-cloud-migration-strategy-the-ultimate-guide)

- Top 10 Cloud Migration Strategies for Enterprise IT in 2026 (https://ledgesure.com/blog/top-10-cloud-migration-strategies-to-modernize-enterprise-workloads-in-2026)

- Cloud Migration in 2026: What's Changed and Why It's Easier Now (https://linkedin.com/pulse/cloud-migration-2026-whats-changed-why-its-easier-now-piecyfer-ui8ff)

- 7 Rs of Cloud Migration Every Tech Leader Should Know (https://tenupsoft.com/blog/7-rs-of-cloud-migration-strategies.html)

Implement Best Practices for Data Integrity in Migration

- Reduce Data Risks in Cloud Migration: Best Practices & Insights (https://appmaisters.com/reduce-data-issues-during-cloud-migration)

- Protecting Data Integrity in Cloud Migration Services (https://seasiainfotech.com/blog/data-integrity-in-cloud-migration-services)

- Cloud Migration Statistics: Key Trends, Challenges, and Opportunities in 2025 (https://duplocloud.com/blog/cloud-migration-statistics)

- Cloud Migration Statistics for 2026 (https://auvik.com/franklyit/blog/cloud-migration-statistics)

- Top 10 Data Migration Risks and How to Avoid Them in 2026 (https://medium.com/@kanerika/top-10-data-migration-risks-and-how-to-avoid-them-in-2026-fb5dc93c12f5)

Identify and Overcome Common Cloud Migration Challenges

- Cloud Migration Challenges and Solutions in 2026: A Practical Guide (https://amvionlabs.com/blogs/cloud-migration-challenges-solutions-2026)

- Top Challenges in Cloud Migrations, and How Experts Solve Them (https://linkedin.com/pulse/top-challenges-cloud-migrations-how-experts-solve-dlfdf)

- Cloud Migration in 2026: Strategies, Challenges, and Future Trends (https://sesamedisk.com/cloud-migration-2026-strategies-trends)
