
Master Data Pipeline Architecture: Best Practices for Engineers

Explore best practices for building efficient and scalable data pipeline architecture.

Introduction

A well-structured data pipeline architecture serves as the backbone of modern data-driven organizations, facilitating a seamless flow of information from diverse sources to actionable insights. Engineers who adhere to established best practices can optimize this architecture, thereby enhancing efficiency, scalability, and data quality.

However, given the complexities of evolving data environments, organizations must consider how to ensure their pipelines not only meet current demands but also adapt to future challenges.

This article explores the essential components, effective design patterns, and governance strategies that empower engineers to master data pipeline architecture and drive informed decision-making.

Understand Core Components of Data Pipeline Architecture

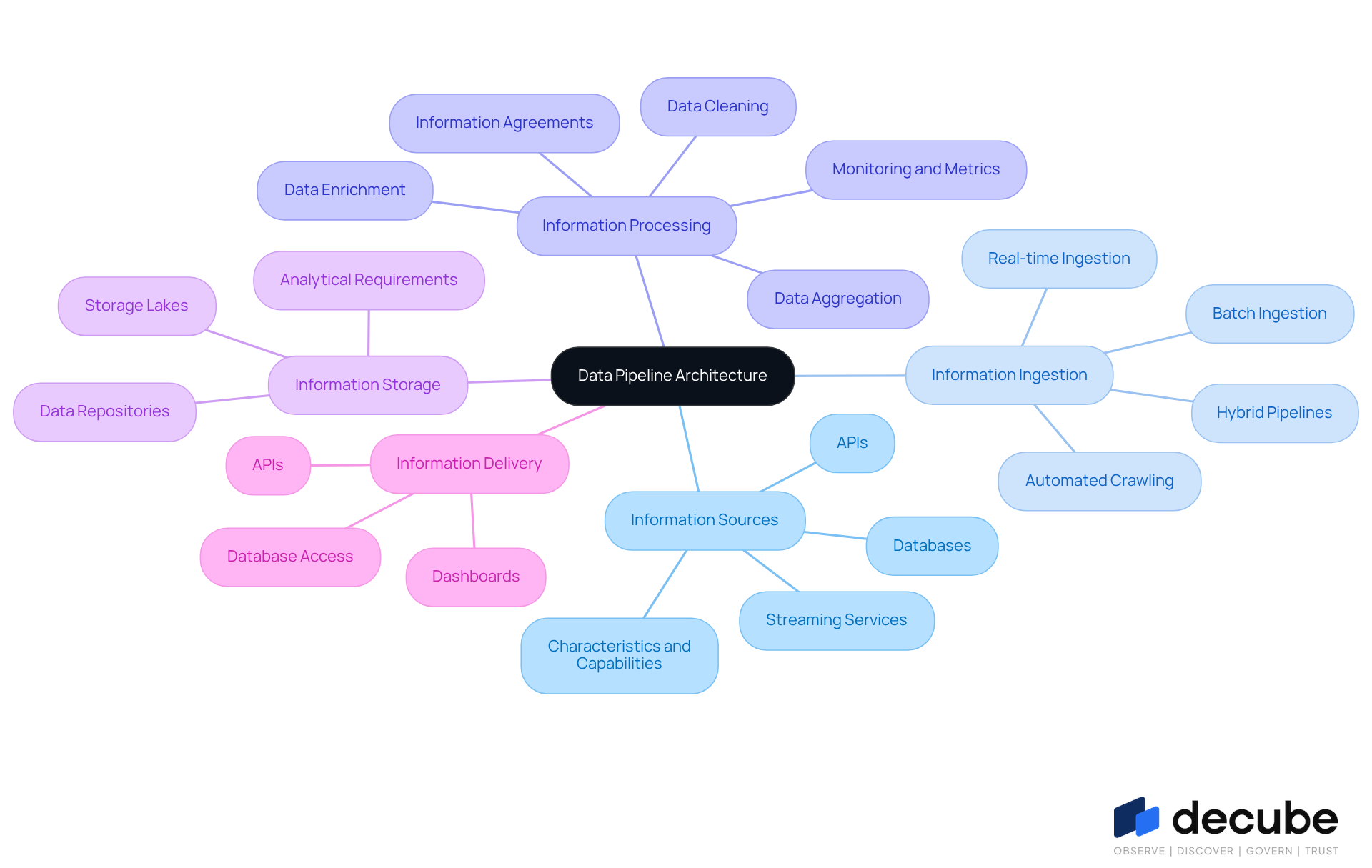

A robust data pipeline architecture consists of several essential components that work together to facilitate the seamless flow of information from source to destination. These components include:

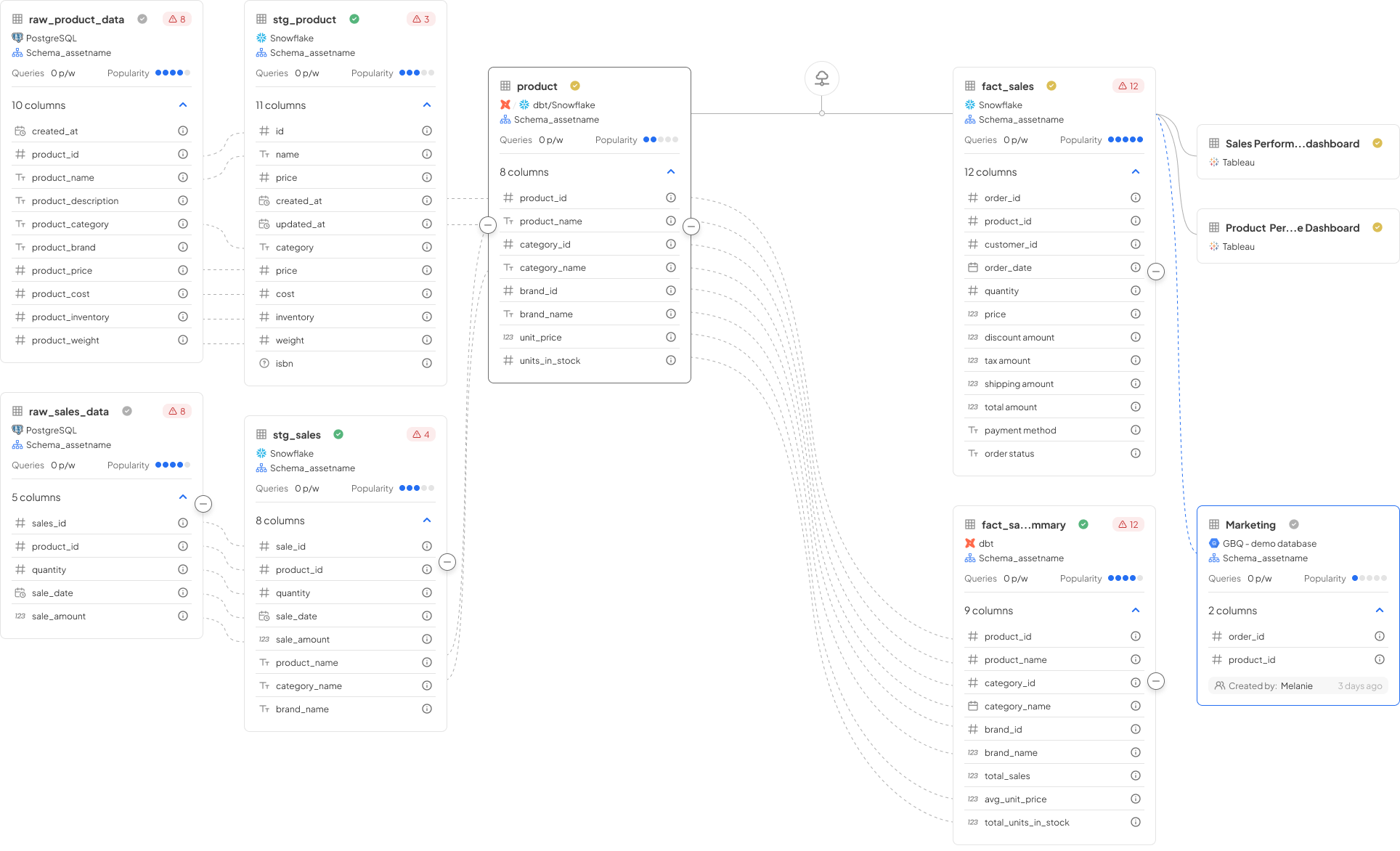

- Data Sources: These are the origin points of data, including databases, APIs, and streaming services. Understanding each source's characteristics and capabilities is crucial for efficient ingestion, because they directly influence the quality and reliability of the data being processed.

- Data Ingestion: This process gathers data from diverse sources, in real time or in batches depending on the use case. Organizations are increasingly adopting hybrid pipelines that combine batch and streaming methods to support both reporting and operational workflows. With Decube's automated crawling feature, metadata is auto-refreshed once sources are connected, streamlining the ingestion process and helping keep data current and relevant.

- Data Processing: Raw data must be converted into a usable format. Techniques such as cleaning, enriching, and aggregating make it accurate and actionable. Monitoring during this stage is vital because it tracks conditions such as data drift and schema evolution, which can degrade quality. Establishing data contracts during this phase fosters collaboration among stakeholders and helps ensure that data products are trustworthy and meet organizational standards.

- Data Storage: After processing, data must be stored efficiently to allow quick retrieval and analysis. This may involve data lakes for unstructured data or data warehouses for structured analytics, so that the architecture accommodates a range of analytical requirements.

- Data Delivery: The final step makes processed data accessible to end users or applications through APIs, dashboards, or direct database access, enabling timely insights that drive decision-making.
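The five stages above can be sketched as a chain of small functions. This is a minimal illustration, not a production design: the `RAW_ORDERS` records, the function names, and the in-memory `warehouse` dict are all hypothetical stand-ins for real sources, processing jobs, and storage systems.

```python
# Hypothetical raw records standing in for a database, API, or stream source.
RAW_ORDERS = [
    {"order_id": "1", "amount": "19.99", "country": "us"},
    {"order_id": "2", "amount": "5.00", "country": "DE"},
    {"order_id": "3", "amount": "", "country": "us"},  # missing amount
]


def ingest(source):
    """Ingestion: pull records from a source (here, an in-memory list)."""
    yield from source


def process(records):
    """Processing: clean and normalize; skip records that cannot be repaired."""
    for record in records:
        if not record["amount"]:
            continue  # drop rows with no amount
        yield {
            "order_id": int(record["order_id"]),
            "amount": float(record["amount"]),
            "country": record["country"].upper(),
        }


def store(records, table):
    """Storage: persist processed records (here, a dict keyed by order_id)."""
    for record in records:
        table[record["order_id"]] = record
    return table


def deliver(table):
    """Delivery: expose an aggregate to consumers (revenue per country)."""
    totals = {}
    for record in table.values():
        totals[record["country"]] = totals.get(record["country"], 0.0) + record["amount"]
    return totals


warehouse = {}
store(process(ingest(RAW_ORDERS)), warehouse)
revenue = deliver(warehouse)
print(revenue)
```

Because `ingest` and `process` are generators, records flow through one at a time, so the same structure works whether the source is a bounded batch or a long-running feed.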

By thoroughly understanding these components, engineers can create data flows that are efficient, scalable, and able to handle the complexities of contemporary data environments. A well-organized data flow is crucial for organizations that want to use insights for strategic decision-making and operational effectiveness. The global data pipeline market is projected to expand at a CAGR of 19.9%, reaching $43.61 billion by 2032, highlighting the growing significance of robust data pipeline architecture.

Choose Effective Design Patterns for Scalability and Efficiency

Choosing the appropriate design patterns is essential for constructing a scalable and efficient data pipeline architecture. Below are several effective patterns to consider:

- Batch Processing: This pattern suits scenarios where data can be processed in chunks at scheduled intervals, and it is commonly used in ETL processes.

- Stream Processing: Suited to real-time data processing, this approach allows immediate insights and actions based on incoming data.

- Lambda Architecture: This architecture combines batch and stream processing, so a single system can serve both historical and real-time data.

- Microservices Architecture: By segmenting the system into smaller, independent services, this architecture enhances flexibility and maintainability, allowing for individual development, deployment, and scaling.

- Event-Driven Architecture: This approach uses events to trigger data processing, producing a reactive system that can respond to fluctuations in data flow.
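To make the difference between the batch and stream patterns concrete, the sketch below computes the same count both ways. The `EVENTS` list, `batch_count`, and `StreamCounter` are hypothetical names used only for illustration; a real system would typically use a scheduler for the batch path and a message broker for the stream path.

```python
from collections import defaultdict

# Hypothetical events: (event type, user).
EVENTS = [
    ("page_view", "alice"),
    ("purchase", "bob"),
    ("page_view", "bob"),
    ("page_view", "alice"),
]


def batch_count(events):
    """Batch: process the full, bounded set of events in one scheduled run."""
    counts = defaultdict(int)
    for kind, _user in events:
        counts[kind] += 1
    return dict(counts)


class StreamCounter:
    """Stream: update state per event, so results are available immediately."""

    def __init__(self):
        self.counts = defaultdict(int)

    def on_event(self, kind, user):
        # Called once for each arriving event (event-driven style).
        self.counts[kind] += 1
        return self.counts[kind]


batch_result = batch_count(EVENTS)

stream = StreamCounter()
for kind, user in EVENTS:
    stream.on_event(kind, user)
stream_result = dict(stream.counts)

print(batch_result, stream_result)
```

Both paths arrive at the same totals; the trade-off is when the answer becomes available. A Lambda-style design runs both, using the batch result as the authoritative historical view and the stream result for recent data.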

By implementing these design patterns, engineers can build a data pipeline architecture that is efficient and that scales with the organization's evolving requirements.

Implement Best Practices for Data Quality and Governance

To ensure the reliability and integrity of data flows, it is essential to implement effective practices for data quality and governance. The following strategies are key:

- Data Validation: Implement validation checks at the source to catch inaccurate or incomplete records before data enters the pipeline. This includes schema validation and business rule checks, which are vital for maintaining high data quality.

- Automated Quality Checks: Use automated tools to continuously monitor data quality throughout the pipeline, including checks for duplicates, null values, and adherence to established data standards. Data metric functions (DMFs) in platforms such as Snowflake can automate these checks, helping keep data consistent and reliable.

- Data Lineage Tracking: Maintain clear visibility of how data flows through the pipeline. Knowing where data originated and what transformations it has undergone is crucial for compliance and auditing, because it lets organizations trace data back to its source and verify its integrity.

- Policy Management: Develop and enforce governance policies that dictate how data is handled, stored, and accessed. This supports compliance with regulations such as GDPR and HIPAA, which become increasingly significant as data volumes grow.

- Regular Audits: Conduct periodic evaluations of data integrity and governance practices to identify areas for improvement and confirm compliance with established standards. Frequent assessments reduce the risk of data-related issues, which can cost organizations millions annually.
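The validation and automated quality checks above (schema validation, null checks, duplicate detection, and a business rule) can be sketched in a few lines. The schema, field names, and the age range below are assumptions made for the example, not rules taken from any particular platform.

```python
# Hypothetical expected schema: field name -> required Python type.
EXPECTED_SCHEMA = {"customer_id": int, "email": str, "age": int}


def validate(rows):
    """Return a list of (row_index, problem) tuples; empty means all rows pass."""
    errors = []
    seen_ids = set()
    for index, row in enumerate(rows):
        # Schema and null checks.
        for field, expected_type in EXPECTED_SCHEMA.items():
            if field not in row:
                errors.append((index, f"missing field: {field}"))
            elif row[field] is None:
                errors.append((index, f"null value: {field}"))
            elif not isinstance(row[field], expected_type):
                errors.append((index, f"wrong type: {field}"))

        # Business rule (assumed for illustration): age must be plausible.
        age = row.get("age")
        if isinstance(age, int) and not 0 <= age <= 130:
            errors.append((index, "age out of range"))

        # Duplicate check on the primary key.
        key = row.get("customer_id")
        if key is not None:
            if key in seen_ids:
                errors.append((index, "duplicate customer_id"))
            seen_ids.add(key)
    return errors


rows = [
    {"customer_id": 1, "email": "a@example.com", "age": 34},   # valid
    {"customer_id": 2, "email": None, "age": 41},              # null email
    {"customer_id": 1, "email": "c@example.com", "age": 29},   # duplicate id
    {"customer_id": 3, "email": "d@example.com", "age": 212},  # rule violation
]
errors = validate(rows)
for index, problem in errors:
    print(f"row {index}: {problem}")
```

Returning a list of problems instead of raising on the first failure lets the pipeline decide per check whether to reject the batch, quarantine the bad rows, or only emit a warning.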

By integrating these practices into the data pipeline architecture, organizations can significantly improve data quality and uphold compliance, ultimately leading to more reliable, insight-driven decisions.

Establish Monitoring and Observability for Proactive Management

To ensure the health and performance of data flows, strong monitoring and observability practices are essential. Here are key strategies:

- Real-Time Monitoring: Use tools that provide real-time insight into system performance, enabling immediate detection of anomalies or failures. With 30 to 40 percent of data pipelines encountering failures weekly, real-time monitoring is crucial for reducing interruptions.

- End-to-End Visibility: Ensure monitoring covers all stages of the pipeline, from ingestion to delivery. This full view helps identify bottlenecks and inefficiencies, which matters because 68 percent of organizations need four or more hours to detect data quality issues.

- Alerting Mechanisms: Establish alerts for critical metrics such as latency, error rates, and throughput. This proactive strategy lets teams react quickly to emerging problems, particularly as companies encounter an average of 67 data incidents per month that take approximately 15 hours to resolve.

- Performance Metrics: Define and track key performance indicators (KPIs) that reflect pipeline health. Metrics such as data freshness, completeness, and accuracy are essential for evaluating performance, especially since 77 percent of organizations rate their data quality as average or worse.

- Automated Recovery: Implement self-healing mechanisms that automatically resolve common issues, reducing downtime and the need for manual intervention. This capability is becoming more important as companies report that engineers spend approximately 30 percent of their time managing disruptions.
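Two of the strategies above, threshold-based alerting and automated recovery, can be sketched as follows. The threshold values, metric names, and the `flaky_load` task are assumptions chosen for illustration; retry with exponential backoff is one common self-healing technique, not the only one.

```python
import time
from datetime import datetime, timedelta, timezone

# Hypothetical thresholds; real values depend on the pipeline's requirements.
THRESHOLDS = {
    "max_latency_s": 60.0,
    "max_error_rate": 0.01,
    "max_staleness": timedelta(hours=1),
}


def check_health(metrics, now):
    """Alerting: return the names of metrics that breach their thresholds."""
    alerts = []
    if metrics["latency_s"] > THRESHOLDS["max_latency_s"]:
        alerts.append("latency")
    error_rate = metrics["errors"] / max(metrics["records"], 1)
    if error_rate > THRESHOLDS["max_error_rate"]:
        alerts.append("error_rate")
    if now - metrics["last_loaded_at"] > THRESHOLDS["max_staleness"]:
        alerts.append("freshness")
    return alerts


def run_with_retry(task, attempts=3, base_delay_s=0.01):
    """Automated recovery: retry a failing task with exponential backoff."""
    for attempt in range(1, attempts + 1):
        try:
            return task()
        except Exception:
            if attempt == attempts:
                raise  # out of retries: surface the failure to a human
            time.sleep(base_delay_s * 2 ** (attempt - 1))


# Fixed timestamp so the example is deterministic.
now = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
metrics = {
    "latency_s": 12.0,
    "errors": 30,
    "records": 1000,
    "last_loaded_at": now - timedelta(hours=3),
}
alerts = check_health(metrics, now)

# Hypothetical task that fails twice with a transient error, then succeeds.
calls = {"n": 0}


def flaky_load():
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("transient failure")
    return "loaded"


result = run_with_retry(flaky_load)
print(alerts, result, calls["n"])
```

In practice the retry wrapper should catch only errors known to be transient, so that genuine data or logic failures are raised immediately instead of being retried.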

By prioritizing these monitoring and observability strategies, organizations can ensure their data pipeline architecture operates smoothly, maintaining high data quality and reliability.

Conclusion

A well-structured data pipeline architecture is essential for organizations seeking to use their data effectively. By understanding the core components (data sources, ingestion, processing, storage, and delivery), engineers can create systems that support efficient and reliable data flows. Effective design patterns, such as batch and stream processing, improve scalability and operational efficiency, enabling organizations to respond to changing data requirements.

Data quality and governance practices are equally critical. Implementing validation, automated quality checks, lineage tracking, and regular audits helps keep data trustworthy and compliant with regulations. Robust monitoring and observability mechanisms support proactive management, allowing organizations to address potential issues swiftly and sustain high performance.

As the demand for reliable data continues to grow, adopting these best practices will help organizations make informed decisions, drive strategic initiatives, and remain competitive in an increasingly data-driven landscape.

Frequently Asked Questions

What are the core components of data pipeline architecture?

The core components of data pipeline architecture are data sources, data ingestion, data processing, data storage, and data delivery.

What are data sources in the context of data pipelines?

Data sources are the origin points of data, including databases, APIs, and streaming services. Their characteristics and capabilities are crucial for efficient data ingestion.

How does data ingestion work?

Data ingestion gathers data from various sources, in real time or in batches. Organizations often use hybrid pipelines that combine both methods to support reporting and operational workflows.

What role does data processing play in a data pipeline?

Data processing converts raw data into a usable format. It includes techniques such as cleaning, enriching, and aggregating data to make it accurate and actionable.

Why is monitoring important during data processing?

Monitoring during data processing is vital because it tracks data drift and schema evolution, both of which can affect data quality.

What is the purpose of data storage in a data pipeline?

Data storage holds processed data so that it can be retrieved and analyzed quickly. This can involve data lakes for unstructured data or data warehouses for structured analytics.

How is data delivered to end users or applications?

Data delivery makes processed data accessible through APIs, dashboards, or direct database access, enabling timely insights that support decision-making.

What is the significance of understanding data pipeline architecture?

Understanding data pipeline architecture helps engineers create efficient, scalable data flows that can handle the complexities of modern data environments, which is crucial for strategic decision-making and operational effectiveness.

What is the projected growth of the global data pipeline market?

The global data pipeline market is projected to expand at a CAGR of 19.9%, reaching $43.61 billion by 2032, indicating the growing importance of robust data pipeline architecture.

List of Sources

- Understand Core Components of Data Pipeline Architecture

- 8+ Industry Leaders Building Data Engineering Pipelines in 2026 (https://datatobiz.com/blog/vendors-building-data-engineering-pipelines)

- Enterprise Data Architecture: A Complete 2026 Guide to Scalable, AI-Driven Systems (https://logiciel.io/blog/enterprise-data-architecture-2026-guide)

- Data Integration Best Practices for 2026: Architecture & Tools (https://domo.com/learn/article/data-integration-best-practices)

- 2026 Data Engineering Trends: Everyone's a Workflow Engineer Now | Kestra (https://kestra.io/blogs/2026-03-05-data-eng-trends-2026)

- 10 Data Engineering Trends to Watch in 2026 (https://medium.com/@inverita/10-data-engineering-trends-to-watch-in-2026-8b2ebe8ac5dc)

- Choose Effective Design Patterns for Scalability and Efficiency

- Building Data Pipelines 2026 (https://proxet.com/blog/building-data-pipelines-2026)

- Real-Time Data Pipelines: Top 5 Design Patterns You Should Know - Landskill (https://landskill.com/blog/real-time-data-pipelines-patterns)

- Designing Scalable Data Pipelines in 2026 | Graycell America (https://graycellamerica.com/designing-scalable-data-pipelines)

- Data Integration Best Practices for 2026: Architecture & Tools (https://domo.com/learn/article/data-integration-best-practices)

- Implement Best Practices for Data Quality and Governance

- Data Quality Statistics & Insights From Monitoring +11 Million Tables In 2025 (https://montecarlodata.com/blog-data-quality-statistics)

- The Role Of Data Governance In Meeting Evolving Privacy Regulations (https://forbes.com/councils/forbesbusinesscouncil/2025/05/05/the-role-of-data-governance-in-meeting-evolving-privacy-regulations)

- Ensure Data Quality By Using Data Governance Best Practices (https://consulting.sva.com/insights/ensure-data-quality-by-using-data-governance-best-practices)

- The Importance of Data Governance in Today's Business Environment (https://park.edu/blog/the-importance-of-data-governance-in-todays-business-environment)

- Data Quality in Snowflake: Best Practices for 2026 (https://integrate.io/blog/data-quality-in-snowflake-best-practices)

- Establish Monitoring and Observability for Proactive Management

- Observability Trends 2026 | IBM (https://ibm.com/think/insights/observability-trends)

- Data Pipeline Monitoring: Alternatives to Full-Stack Tools (https://acceldata.io/blog/alternatives-to-full-stack-monitoring-for-data-pipelines)

- 19 Inspirational Quotes About Data | The Pipeline | ZoomInfo (https://pipeline.zoominfo.com/operations/19-inspirational-quotes-about-data)

- The State of Observability in 2026: Why “almost observable” Isn’t Enough - DataBahn (https://databahn.ai/blog/the-state-of-observability-in-2026)

- Data Engineering Stats 2026: Latest Market Insights & Trends (https://data.folio3.com/blog/data-engineering-stats)
