
Understanding Data Quality Meaning: Importance for Data Engineers

Explore what data quality means and why it matters for effective decision-making in data engineering.

Introduction

In an era where data drives decision-making, the integrity of information is paramount. To ensure reliable analytics, data engineers need to understand what data quality means and work across its five dimensions: accuracy, completeness, consistency, timeliness, and validity. Many organizations struggle to uphold data quality standards as demands evolve, and addressing that struggle is vital to achieving better business outcomes.

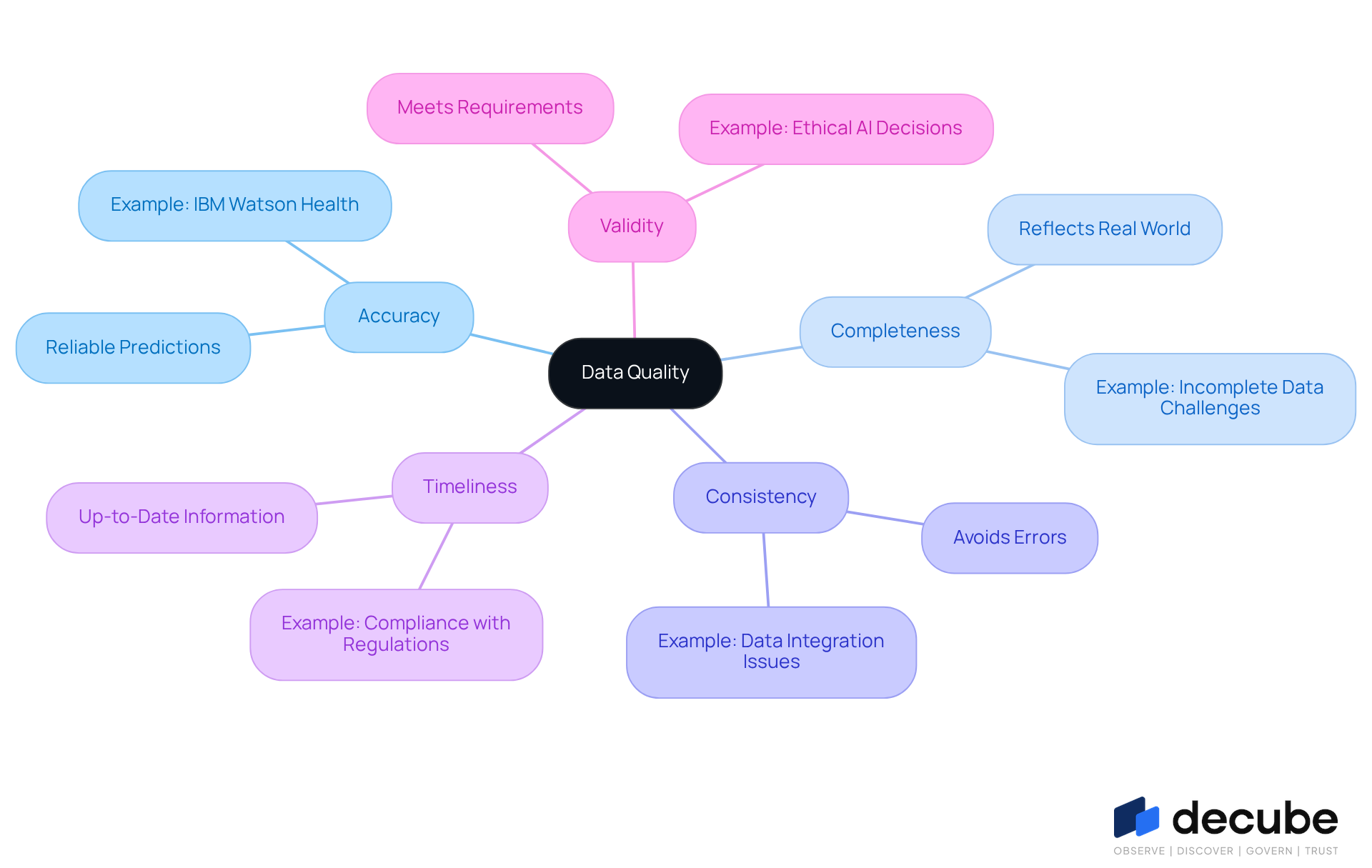

Define Data Quality: Understanding Its Core Meaning

The accuracy and reliability of information are increasingly critical in today's data-driven landscape. Data quality describes how well data serves its intended purpose: how accurate, complete, consistent, and relevant it is. It encompasses several dimensions, including:

- Accuracy

- Completeness

- Consistency

- Timeliness

- Validity

High-quality information is crucial for effective decision-making, ensuring that insights from analysis are reliable and actionable. In data engineering, preserving information integrity is essential to avoid errors that propagate through data pipelines and ultimately lead to flawed analytics and misguided business choices.

In the age of AI, maintaining information integrity has become increasingly vital. Organizations that emphasize information integrity are better positioned to leverage AI technologies effectively. For instance, a study revealed that 61% of companies recognize information integrity as a significant challenge, underscoring the need for robust governance frameworks to uphold standards of accuracy and completeness. Furthermore, Gartner forecasts that 60% of AI initiatives will be abandoned by 2026 due to inadequate information standards, highlighting the critical nature of this issue.

Decube enhances information quality through its integrated platform, which offers automated monitoring and governance features. Its automated crawling capability ensures that metadata is efficiently managed and kept up to date, allowing organizations to maintain accurate and consistent information without manual intervention. The lineage feature illustrates the entire information flow across components, providing clarity in information pipelines that not only fosters collaboration among teams but also bolsters the reliability of the information being utilized.
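To make the idea of lineage concrete, the following is a minimal sketch of how information flow can be represented as a graph and queried for downstream impact. It is not Decube's implementation or API; the asset names and the `downstream` helper are hypothetical and exist only for illustration.

```python
from collections import deque

# Hypothetical lineage edges: each key feeds the assets listed as its value.
LINEAGE = {
    "raw.orders": ["staging.orders"],
    "staging.orders": ["marts.revenue", "marts.customer_ltv"],
    "marts.revenue": ["dashboard.finance"],
    "marts.customer_ltv": [],
    "dashboard.finance": [],
}


def downstream(asset, lineage):
    """Return every asset reachable from `asset`, in breadth-first order."""
    seen = []
    queue = deque(lineage.get(asset, []))
    while queue:
        node = queue.popleft()
        if node in seen:
            continue  # already visited through another path
        seen.append(node)
        queue.extend(lineage.get(node, []))
    return seen


# Which assets are affected if staging.orders contains bad data?
impacted = downstream("staging.orders", LINEAGE)
print(impacted)
```

A traversal like this is what lets a team answer "what breaks if this table is wrong?" before an error reaches a dashboard.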

These dimensions matter directly for AI workloads. For example, accuracy is essential for ensuring that AI models make reliable predictions, while completeness helps ensure that datasets reflect the real world. Inconsistent information can lead to significant errors in AI outputs, as seen in cases where biased training sets have resulted in unfair decision-making processes. Organizations must implement strategies to address these issues, such as regular audits and validation procedures, to mitigate biases and enhance the overall quality of their information assets.

Practical examples further underscore the importance of information integrity. IBM Watson Health, for instance, achieved a 15% increase in precision for cancer diagnoses after refining its information management practices. This demonstrates how organized and well-maintained information can lead to improved outcomes in critical fields like healthcare. Similarly, companies that invest in enhancing information accuracy gain a competitive advantage, as they can rely on their information for informed decision-making and operational efficiency. Failing to prioritize information quality could lead organizations to miss critical opportunities in the evolving AI landscape.

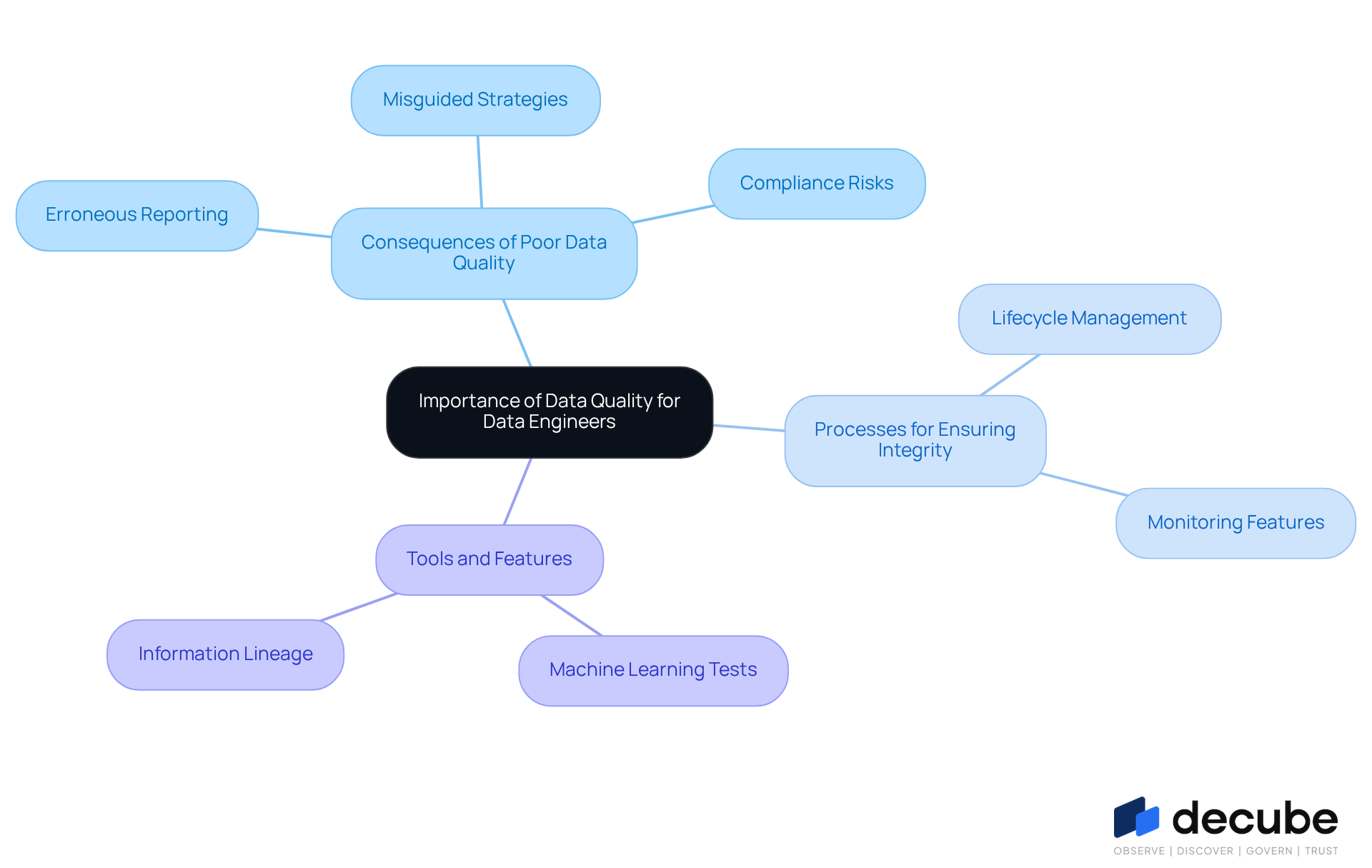

Explain the Importance of Data Quality for Data Engineers

For data engineers, ensuring information integrity is paramount, as it directly influences the reliability of data pipelines and the accuracy of analytics. Poor data quality leads to significant challenges that can undermine business success, including:

- Erroneous reporting

- Misguided business strategies

- Compliance risks

Data engineers are responsible for implementing processes that ensure information integrity throughout its lifecycle, from collection to analysis. With advanced monitoring features, such as machine learning-powered tests that automatically determine thresholds for data quality checks and intelligent alerts that minimize notification overload, engineers can significantly boost the effectiveness of machine learning models and enhance operational efficiency.
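The sketch below illustrates the two ideas in that paragraph: deriving a threshold from historical values rather than hard-coding it, and suppressing repeat alerts. It uses a plain mean and standard deviation as a simple statistical stand-in, not a machine learning model, and it is not how Decube implements these features. The names `learn_bounds`, `AlertGate`, and the check name `orders.row_count` are assumptions for illustration.

```python
from statistics import mean, stdev


def learn_bounds(history, k=3.0):
    """Derive lower/upper bounds from past values as mean +/- k standard deviations."""
    mu = mean(history)
    sigma = stdev(history)
    return mu - k * sigma, mu + k * sigma


def check(value, bounds):
    """Return True when the value falls inside the learned bounds."""
    low, high = bounds
    return low <= value <= high


class AlertGate:
    """Suppress repeat alerts for the same check within a cooldown window."""

    def __init__(self, cooldown_runs=3):
        self.cooldown_runs = cooldown_runs
        self._last_alert = {}  # check name -> run index of the last alert sent

    def should_alert(self, name, run_index):
        last = self._last_alert.get(name)
        if last is not None and run_index - last < self.cooldown_runs:
            return False  # still cooling down, stay quiet
        self._last_alert[name] = run_index
        return True


# Hypothetical daily row counts used as the training history.
daily_row_counts = [1000, 1020, 990, 1010, 1005, 995, 1015]
bounds = learn_bounds(daily_row_counts)
gate = AlertGate(cooldown_runs=3)

for run, count in enumerate([1008, 400, 410, 1002]):
    if not check(count, bounds) and gate.should_alert("orders.row_count", run):
        print(f"run {run}: row count {count} is outside the learned bounds")
```

In this run the second anomalous value does not trigger a second notification, which is the behavior the cooldown is meant to provide.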

Additionally, Decube's information lineage features provide clear visibility into data flow, which is crucial for ensuring precision and compliance. In sectors such as financial services and telecommunications, where information-driven choices are critical, the pursuit of high information standards is essential for maintaining a competitive advantage.

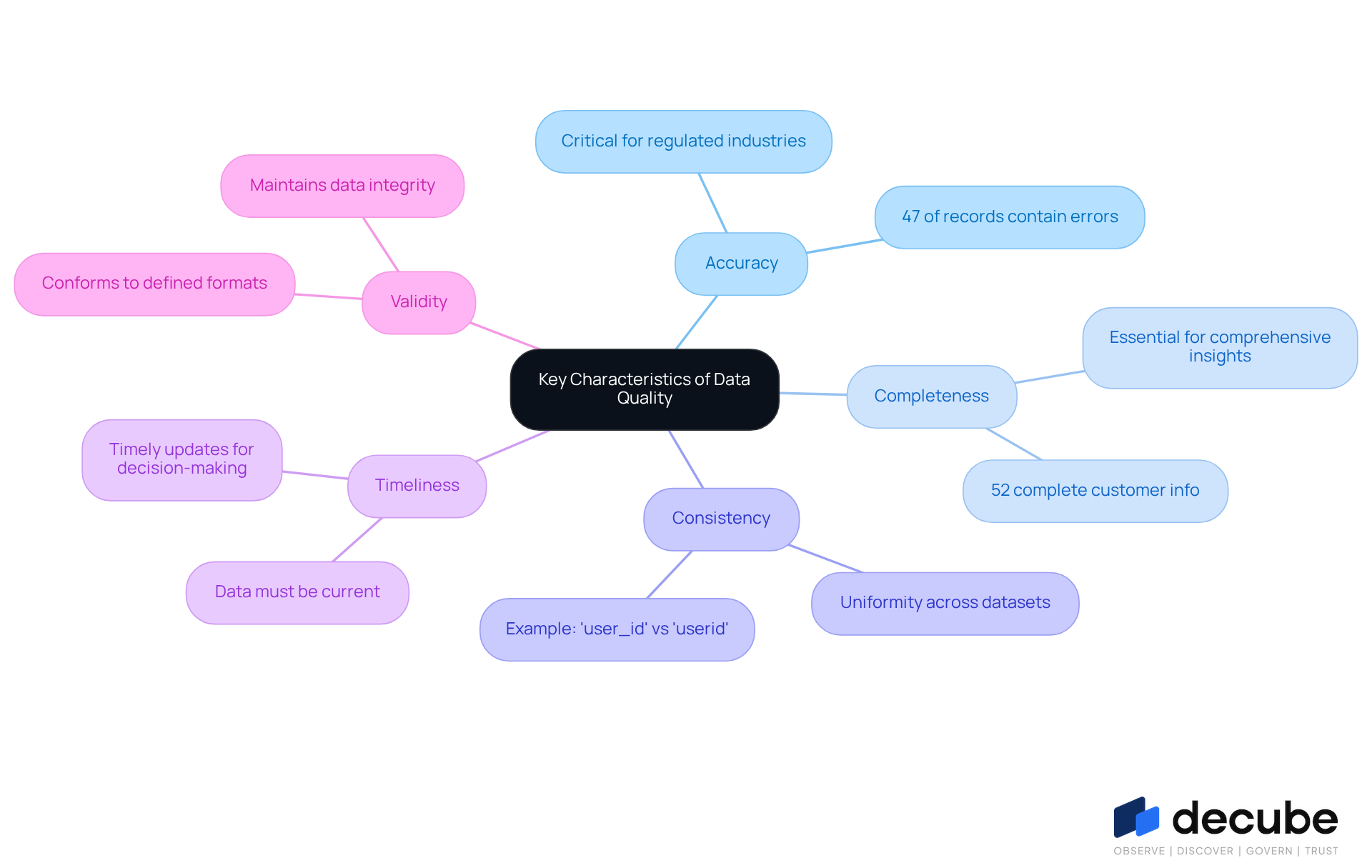

Identify Key Characteristics and Dimensions of Data Quality

Ensuring high-quality information is critical for effective decision-making in any organization. The key characteristics of data quality are:

- Accuracy

- Completeness

- Consistency

- Timeliness

- Validity

Accuracy is vital, as it ensures that information correctly reflects real-world conditions. Notably, studies show that approximately 47% of newly created records contain at least one critical error. Completeness assesses the degree to which all necessary information points are available; for example, a customer information set that is only 52% complete gives little confidence in targeted campaigns.
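Both measures can be expressed as simple ratios. The following is a minimal sketch, assuming records are dictionaries and that a trusted reference exists to compare against; the sample customers, the `trusted_country` lookup, and both function names are hypothetical.

```python
def completeness(records, required_fields):
    """Share of records in which every required field is present and non-empty."""
    if not records:
        return 0.0
    complete = sum(
        all(rec.get(f) not in (None, "") for f in required_fields)
        for rec in records
    )
    return complete / len(records)


def accuracy(records, reference, key, field):
    """Share of records whose `field` matches a trusted reference, looked up by `key`."""
    checked = [r for r in records if r[key] in reference]
    if not checked:
        return 0.0
    correct = sum(r[field] == reference[r[key]] for r in checked)
    return correct / len(checked)


# Hypothetical sample data.
customers = [
    {"id": 1, "email": "a@example.com", "country": "SG"},
    {"id": 2, "email": "", "country": "MY"},
    {"id": 3, "email": "c@example.com", "country": None},
    {"id": 4, "email": "d@example.com", "country": "ID"},
]
trusted_country = {1: "SG", 2: "MY", 4: "TH"}

print(completeness(customers, ["email", "country"]))  # 2 of 4 records are complete
print(accuracy(customers, trusted_country, "id", "country"))  # 2 of 3 checked records match
```

Accuracy can only be measured against something trusted, which is why the second function takes a reference; without one, only completeness and format checks are possible.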

Decube's automated crawling feature eliminates the need for manual metadata updates; once your sources are connected, the information is auto-refreshed, enhancing both accuracy and completeness. Consistency measures uniformity across datasets; inconsistencies, such as using 'user_id' and 'userid' interchangeably, cause confusion. Timeliness evaluates whether information is current and relevant, since timely updates are essential for effective decision-making. Validity ensures that information conforms to defined formats and standards, which is essential for maintaining integrity.
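The three remaining dimensions can each be checked with a few lines of code. This is a sketch under stated assumptions: the schemas are hypothetical, the email pattern is deliberately loose and is not a full address validator, and the maximum age is whatever your own use case tolerates.

```python
import re
from datetime import datetime, timedelta, timezone


def normalize(name):
    """Collapse case and separators so 'user_id' and 'userid' compare equal."""
    return re.sub(r"[^a-z0-9]", "", name.lower())


def inconsistent_columns(schemas):
    """Consistency: group column names that differ only by case or separators."""
    variants = {}
    for columns in schemas.values():
        for col in columns:
            variants.setdefault(normalize(col), set()).add(col)
    return {k: sorted(v) for k, v in variants.items() if len(v) > 1}


def is_stale(last_updated, max_age, now):
    """Timeliness: True when a record is older than the allowed age."""
    return now - last_updated > max_age


# Validity: a loose format check, assumed for illustration only.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def is_valid_email(value):
    return bool(EMAIL_RE.match(value))


# Hypothetical table schemas.
schemas = {"orders": ["user_id", "amount"], "events": ["userid", "ts"]}
print(inconsistent_columns(schemas))

now = datetime(2025, 1, 10, tzinfo=timezone.utc)
last_updated = datetime(2025, 1, 1, tzinfo=timezone.utc)
print(is_stale(last_updated, timedelta(days=7), now))

print(is_valid_email("a@example.com"), is_valid_email("not-an-email"))
```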

Additionally, Decube enables you to manage who can access or modify information via a specified approval process, promoting governance and improving information standards. Understanding these dimensions enables data engineers to implement targeted strategies for maintaining integrity, such as profiling and validation checks. For instance, organizations can employ automated rules to monitor information integrity and swiftly detect mistakes, thereby improving overall information management practices and reducing errors.
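Automated rules of this kind are often written declaratively, so that new checks can be added without changing the validation loop. The sketch below shows one way to do that; the `Rule` class, the rule names, and the allowed currency set are all hypothetical and do not reflect any particular product's rule format.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Rule:
    name: str
    field: str
    predicate: Callable[[Any], bool]  # returns True when the value passes


# Hypothetical rules for an orders dataset.
RULES = [
    Rule("amount_non_negative", "amount",
         lambda v: isinstance(v, (int, float)) and v >= 0),
    Rule("currency_is_known", "currency",
         lambda v: v in {"USD", "SGD", "MYR"}),
    Rule("order_id_present", "order_id",
         lambda v: v not in (None, "")),
]


def validate(rows, rules):
    """Return (row_index, rule_name) for every rule a row fails."""
    failures = []
    for i, row in enumerate(rows):
        for rule in rules:
            if not rule.predicate(row.get(rule.field)):
                failures.append((i, rule.name))
    return failures


orders = [
    {"order_id": "A1", "amount": 25.0, "currency": "USD"},
    {"order_id": "", "amount": -5, "currency": "XXX"},
]

for index, rule_name in validate(orders, RULES):
    print(f"row {index} failed {rule_name}")
```

Keeping rules as data makes them easy to review and version alongside the pipeline they protect.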

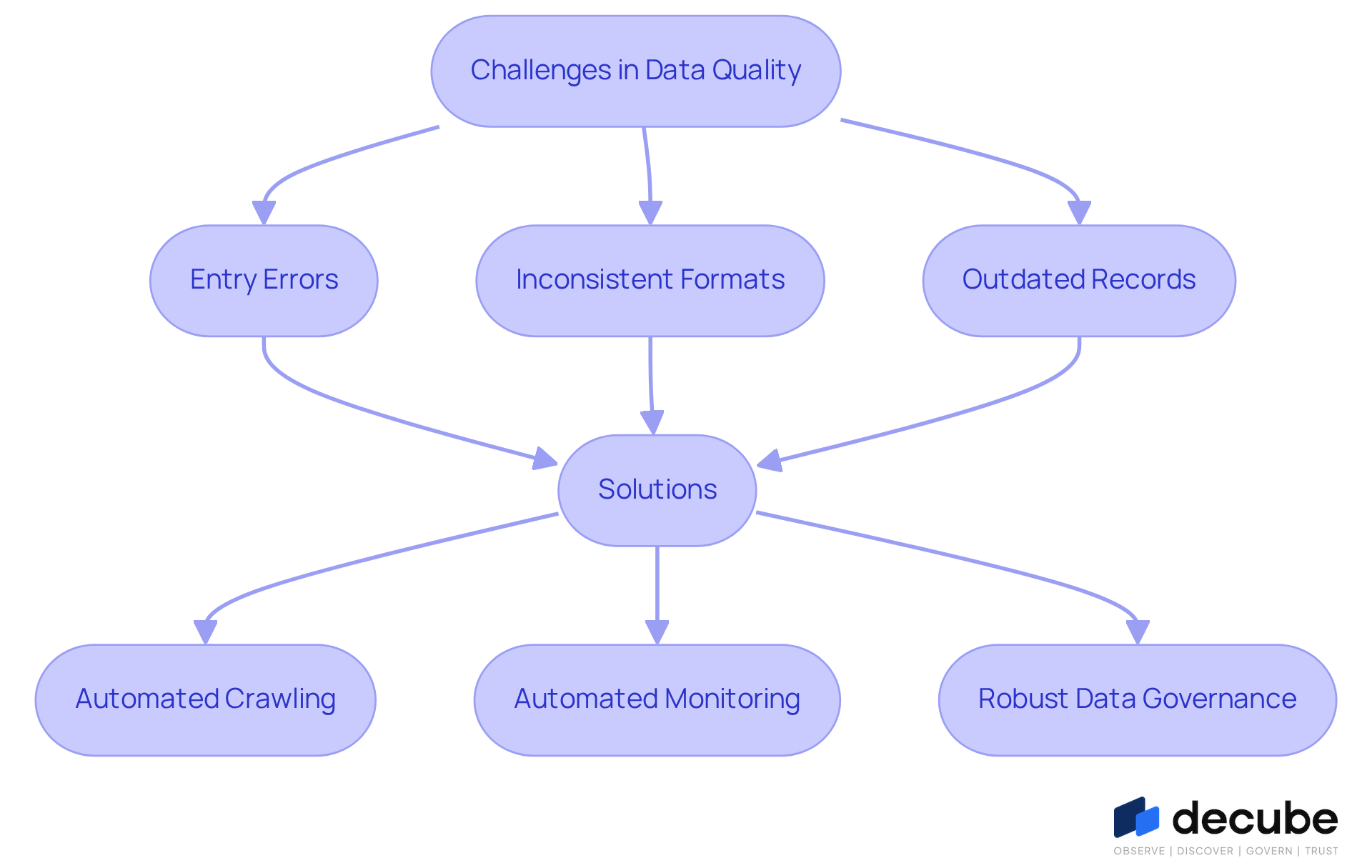

Discuss Common Challenges in Maintaining Data Quality

The pursuit of high-quality information is fraught with challenges, such as entry errors, inconsistent formats, and outdated records. Errors in data collection can compromise the integrity of information, while varied sources may result in inconsistencies that complicate integration. Furthermore, as organizations expand and gather more information, ensuring that all data remains current and relevant becomes increasingly difficult.
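Inconsistent formats are a common integration problem when sources record the same value differently. As a minimal sketch, the function below normalizes dates from several formats into ISO 8601 and sets aside values it cannot parse rather than guessing. The list of formats is an assumption; a format such as `%d/%m/%Y` is ambiguous with month-first dates, so the right list depends on what each source is known to emit.

```python
from datetime import datetime

# Assumed set of formats seen across sources; adjust for your own data.
KNOWN_FORMATS = ("%Y-%m-%d", "%d/%m/%Y", "%Y%m%d")


def to_iso_date(raw):
    """Return the date as YYYY-MM-DD, or None when no known format matches."""
    text = raw.strip()
    for fmt in KNOWN_FORMATS:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue  # try the next format
    return None


# Hypothetical raw values from different sources.
raw_dates = ["2024-03-05", "05/03/2024", " 20240305 ", "March 5th"]
cleaned = [to_iso_date(d) for d in raw_dates]

# Keep unparseable values for review instead of silently dropping them.
rejected = [d for d, c in zip(raw_dates, cleaned) if c is None]

print(cleaned)
print(rejected)
```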

To tackle these challenges, data engineers can use Decube's automated crawling feature, which updates metadata automatically once sources are connected and considerably decreases the chance of outdated records. Moreover, the platform's automated monitoring features enhance information observability, enabling teams to swiftly detect and address issues within reports and dashboards.

By implementing robust data governance frameworks, automating data quality checks, and using Decube's tools for secure access control and designated approval flows, organizations can manage these issues proactively and keep their analytics reliable and actionable.

Conclusion

Data quality is a pivotal concern for data engineers, influencing the accuracy of analytics and decision-making processes. High-quality information serves as the foundation for reliable insights that guide business strategies, making its maintenance crucial. By understanding the core dimensions of data quality (accuracy, completeness, consistency, timeliness, and validity), data engineers can uphold information integrity throughout its lifecycle.

Maintaining data quality is not easy: data engineers often grapple with entry errors, inconsistent formats, and outdated records. Solutions are available, however. Automated tools, such as those provided by Decube, can significantly improve data quality management by keeping metadata up to date and facilitating governance. Organizations that fail to prioritize data quality risk making decisions based on unreliable information, which can lead to strategic missteps.

Ultimately, investing in data quality not only safeguards information integrity but also positions organizations to thrive in a data-driven landscape. By embracing robust data governance frameworks and leveraging advanced monitoring solutions, organizations can keep their data accurate and actionable and harness the full potential of their information assets as the data landscape continues to evolve.

Frequently Asked Questions

What does data quality mean?

Data quality refers to how accurately, completely, consistently, and relevantly information serves its intended purpose. It encompasses dimensions such as accuracy, completeness, consistency, timeliness, and validity.

Why is high-quality information important?

High-quality information is crucial for effective decision-making, ensuring that insights from analysis are reliable and actionable. It helps to avoid errors that can propagate through information pipelines, leading to flawed analytics and misguided business choices.

How does information integrity relate to AI?

In the age of AI, maintaining information integrity is vital for organizations to leverage AI technologies effectively. A study indicated that 61% of companies see information integrity as a significant challenge, emphasizing the need for robust governance frameworks to uphold standards of accuracy and completeness.

What are the consequences of inadequate information standards in AI initiatives?

Gartner forecasts that 60% of AI initiatives will be abandoned by 2026 due to inadequate information standards, highlighting the critical nature of maintaining high data quality.

How does Decube enhance information quality?

Decube enhances information quality through its integrated platform, which offers automated monitoring and governance features. Its automated crawling capability manages metadata efficiently, ensuring that information remains accurate and consistent.

What is the significance of the lineage feature in Decube?

The lineage feature illustrates the entire information flow across components, providing clarity in information pipelines. This fosters collaboration among teams and bolsters the reliability of the information being utilized.

Why are the dimensions of information quality important for AI information management?

Dimensions like accuracy and completeness are crucial for AI models to make reliable predictions and ensure datasets reflect the real world. Inconsistent information can lead to significant errors in AI outputs, such as biased decision-making processes.

What strategies can organizations implement to enhance information quality?

Organizations can implement strategies such as regular audits and validation procedures to mitigate biases and enhance the overall quality of their information assets.

Can you provide an example of improved outcomes from enhanced information management?

IBM Watson Health achieved a 15% increase in precision for cancer diagnoses after refining its information management practices, demonstrating the impact of organized and well-maintained information on critical fields like healthcare.

What are the risks of not prioritizing information quality?

Failing to prioritize information quality could lead organizations to miss critical opportunities in the evolving AI landscape and hinder their ability to make informed decisions and improve operational efficiency.

List of Sources

- Define Data Quality: Understanding Its Core Meaning

- Data Quality is Not Being Prioritized on AI Projects, a Trend that 96% of U.S. Data Professionals Say Could Lead to Widespread Crises (https://qlik.com/us/news/company/press-room/press-releases/data-quality-is-not-being-prioritized-on-ai-projects)

- Data Quality in the AI Era Why It’s More Critical Than Ever (https://gleecus.com/blogs/data-quality-in-ai-era)

- Why data quality is key to AI success in 2026 (https://strategy.com/software/blog/why-data-quality-is-key-to-ai-success-in-2026)

- Data Management Trends in 2026: Moving Beyond Awareness to Action - Dataversity (https://dataversity.net/articles/data-management-trends)

- Explain the Importance of Data Quality for Data Engineers

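- Data Priorities 2026: AI Adoption Exposes Gaps in Data Quality, Governance, and Literacy, Says Info-Tech Research Group in New Report (https://prnewswire.com/news-releases/data-priorities-2026-ai-adoption-exposes-gaps-in-data-quality-governance-and-literacy-says-info-tech-research-group-in-new-report-302672864.html)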

- New Global Research Points to Lack of Data Quality and Governance as Major Obstacles to AI Readiness (https://prnewswire.com/news-releases/new-global-research-points-to-lack-of-data-quality-and-governance-as-major-obstacles-to-ai-readiness-302251068.html)

- The Alarming Cost Of Poor Data Quality (https://montecarlodata.com/blog-the-cost-of-poor-data-quality)

- Data Quality is Not Being Prioritized on AI Projects, a Trend that 96% of U.S. Data Professionals Say Could Lead to Widespread Crises (https://qlik.com/us/news/company/press-room/press-releases/data-quality-is-not-being-prioritized-on-ai-projects)

- Understanding the Impact of Bad Data - Dataversity (https://dataversity.net/articles/putting-a-number-on-bad-data)

- Identify Key Characteristics and Dimensions of Data Quality

- Understanding the eight dimensions of data quality (https://datafold.com/data-quality-guide/what-is-data-quality)

- The 6 Data Quality Dimensions with Examples | Collibra (https://collibra.com/blog/the-6-dimensions-of-data-quality)

- What makes high-quality news? | Vanessa Otero posted on the topic | LinkedIn (https://linkedin.com/posts/vanessaotero_how-do-we-define-high-quality-news-heck-activity-7396321593439920128-7zQ1)

- Why Is Data Journalism Important? (https://datajournalism.com/read/handbook/one/introduction/why-is-data-journalism-important)

- How data journalists can create beautiful feature stories (https://shorthand.com/the-craft/how-data-journalists-create-beautiful-feature-stories)

- Discuss Common Challenges in Maintaining Data Quality

- New Global Research Points to Lack of Data Quality and Governance as Major Obstacles to AI Readiness (https://prnewswire.com/news-releases/new-global-research-points-to-lack-of-data-quality-and-governance-as-major-obstacles-to-ai-readiness-302251068.html)

- Data Priorities 2026: AI Adoption Exposes Gaps in Data Quality, Governance, and Literacy, Says Info-Tech Research Group in New Report (https://finance.yahoo.com/news/data-priorities-2026-ai-adoption-190600933.html)

- Data Quality Challenges: Enterprise Strategies in 2025 (https://alation.com/blog/data-quality-challenges-large-scale-data-environments)

- Data Quality Issues: 6 Solutions for Enterprises in 2026 (https://actian.com/data-quality-issues-6-solutions-for-enterprises)

- Top Data Quality Challenges and Strategies to Overcome Them (https://gleecus.com/blogs/data-quality-challenges-and-solutions)
