
10 Essential Data Schema Examples for Effective Database Design

Discover 10 essential data schema examples to enhance your database design and management.

Introduction

The landscape of database design is evolving rapidly, driven by the necessity for organizations to manage vast amounts of data efficiently and effectively. The emergence of data observability tools and advanced schema management techniques underscores the critical importance of well-structured data schemas. This article examines ten essential data schema examples that not only enhance database performance but also ensure data integrity and compliance. Organizations can leverage these schemas to optimize their data management strategies and navigate the complexities of modern information environments.

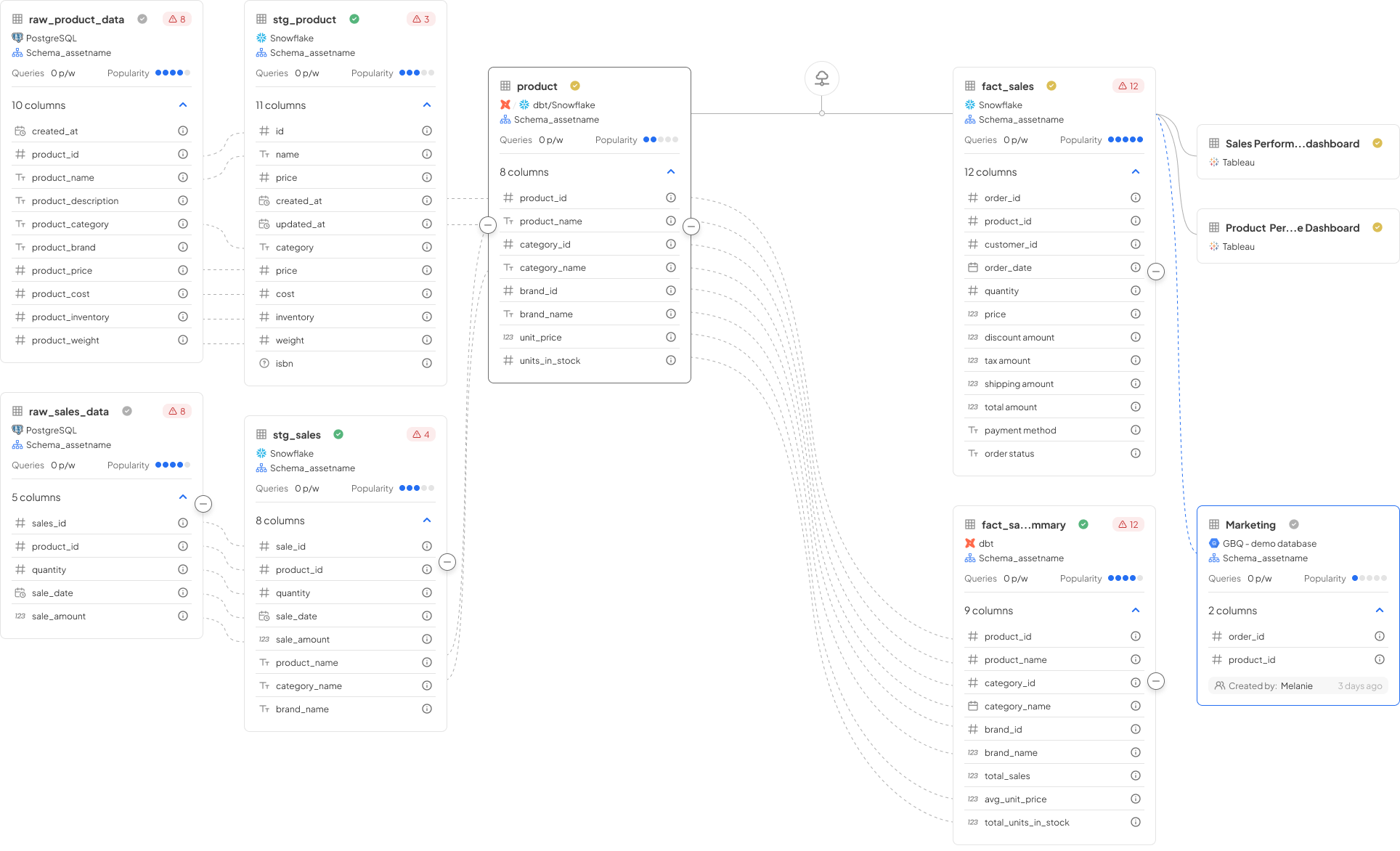

Decube: Elevate Database Design with Comprehensive Data Observability

Decube provides an observability platform that supports database design by helping ensure data integrity and reliability. Features such as real-time monitoring and machine learning-powered anomaly detection let engineers identify and resolve issues within database schemas proactively. This is essential for maintaining high data quality, which is fundamental to effective database management. By integrating observability into the design process, organizations can ensure their databases are not only functional but also optimized for performance and compliance. Real-time monitoring surfaces anomalies promptly, reducing the risk of quality issues that could lead to costly operational disruptions.

Relational Model: Structuring Data for Efficient Access and Management

The relational model organizes data into tables, known as relations, which consist of rows and columns. Each table corresponds to a specific entity, and relationships between tables are established through foreign keys: columns in one table that reference the primary key of another. This design streamlines retrieval and strengthens data integrity, because constraints reject invalid entries, such as an order that refers to a nonexistent customer. Organizations using relational databases have reported improvements of up to 30% in data integrity metrics, underscoring the role of foreign keys in maintaining accurate and reliable datasets.
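To make the role of foreign keys concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `customers` and `orders` tables and their columns are hypothetical names chosen for illustration, not part of any schema described in this article.

```python
import sqlite3


def build_relational_demo() -> sqlite3.Connection:
    """Create two related tables in an in-memory SQLite database."""
    conn = sqlite3.connect(":memory:")
    # SQLite only enforces foreign keys when this pragma is enabled.
    conn.execute("PRAGMA foreign_keys = ON")
    conn.execute(
        "CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT NOT NULL)"
    )
    conn.execute(
        """
        CREATE TABLE orders (
            id INTEGER PRIMARY KEY,
            customer_id INTEGER NOT NULL REFERENCES customers(id),
            total REAL NOT NULL CHECK (total >= 0)
        )
        """
    )
    conn.execute("INSERT INTO customers (id, name) VALUES (1, 'Ada')")
    conn.execute(
        "INSERT INTO orders (id, customer_id, total) VALUES (10, 1, 42.5)"
    )
    return conn


def rejects_orphan_order(conn: sqlite3.Connection) -> bool:
    """Return True if the database refuses an order for a missing customer."""
    try:
        conn.execute(
            "INSERT INTO orders (id, customer_id, total) VALUES (11, 999, 5.0)"
        )
    except sqlite3.IntegrityError:
        return True
    return False
```

Calling `rejects_orphan_order(build_relational_demo())` should return `True`: the constraint, not application code, is what keeps the orphaned row out.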

As organizations increasingly rely on scalable solutions, the relational model remains essential for long-term data management. It aligns with contemporary best practices that emphasize normalization, proper indexing, and the deliberate use of foreign keys to keep relationships between tables sound. By 2026, adopting these principles will be vital for organizations seeking to optimize their database performance and scalability.

Moreover, integrating Decube's quality monitoring features, such as machine learning-powered tests and intelligent alerts, further strengthens this framework by helping ensure that data remains trustworthy and reliable. Data contracts are equally important in this context: they foster collaboration among stakeholders, turn raw data into dependable assets, and reinforce the significance of governance and observability in modern data management.

Star Schema: Optimizing Data Warehousing for Performance and Simplicity

The star schema is a modeling method that organizes data into a central fact table surrounded by dimension tables. The fact table holds measurable events, such as sales, and each dimension table describes one aspect of those events, such as product or date. This design simplifies complex queries by minimizing the number of joins required, which improves query performance. The star schema is particularly efficient for analytical tasks, as it enables rapid retrieval and straightforward reporting. By implementing a star schema, organizations can optimize their warehousing processes and generate insights that guide business decisions.
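The fact-and-dimension layout can be sketched with Python's built-in `sqlite3` module. The tables `fact_sales`, `dim_product`, and `dim_date`, along with the sample rows, are hypothetical and exist only to show the shape of a typical query.

```python
import sqlite3


def build_star_schema() -> sqlite3.Connection:
    """Create one fact table and two dimension tables with sample rows."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE dim_product (
            product_id INTEGER PRIMARY KEY, name TEXT, category TEXT
        );
        CREATE TABLE dim_date (
            date_id INTEGER PRIMARY KEY, year INTEGER, month INTEGER
        );
        CREATE TABLE fact_sales (
            product_id INTEGER REFERENCES dim_product(product_id),
            date_id INTEGER REFERENCES dim_date(date_id),
            quantity INTEGER,
            revenue REAL
        );
        INSERT INTO dim_product VALUES
            (1, 'Laptop', 'Electronics'), (2, 'Desk', 'Furniture');
        INSERT INTO dim_date VALUES (20240101, 2024, 1), (20240201, 2024, 2);
        INSERT INTO fact_sales VALUES
            (1, 20240101, 2, 2000.0),
            (1, 20240201, 1, 1000.0),
            (2, 20240101, 3, 600.0);
        """
    )
    return conn


def revenue_by_category(conn: sqlite3.Connection) -> list:
    """Aggregate the fact table with a single join to one dimension."""
    return conn.execute(
        """
        SELECT p.category, SUM(f.revenue)
        FROM fact_sales AS f
        JOIN dim_product AS p ON p.product_id = f.product_id
        GROUP BY p.category
        ORDER BY p.category
        """
    ).fetchall()
```

Because the category is stored directly on the dimension table, the report needs only one join from the fact table.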

With Decube's automated crawling feature, organizations can keep their metadata continuously updated without manual intervention, which improves data observability and governance. This capability also lets engineers maintain precise and secure access control over their assets. For instance, a retail company using Decube's automated column-level lineage has reported improved collaboration among business users, who can swiftly identify issues within reports and dashboards. This combination of data cataloging and observability fosters trust in data quality and helps teams make decisions based on reliable insights.

Snowflake Schema: Enhancing Data Normalization and Query Efficiency

The snowflake schema extends the star schema by normalizing dimension tables into several interconnected sub-tables. This approach reduces redundancy and improves data integrity, which is particularly beneficial in complex data environments where maintaining accuracy and consistency is crucial.

Although the snowflake schema requires additional joins, which can slow some queries, it reduces storage and keeps dimension attributes consistent because each value is stored only once. Organizations that prioritize data normalization and integrity have successfully implemented the snowflake schema in their database designs.
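The trade-off can be seen in a small sketch, again using Python's built-in `sqlite3` module. Here a hypothetical product dimension is split so that category names live in their own `dim_category` table; all table names and rows are illustrative assumptions.

```python
import sqlite3


def build_snowflake_schema() -> sqlite3.Connection:
    """Create a fact table whose product dimension is normalized further."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(
        """
        CREATE TABLE dim_category (
            category_id INTEGER PRIMARY KEY, name TEXT
        );
        CREATE TABLE dim_product (
            product_id INTEGER PRIMARY KEY,
            name TEXT,
            category_id INTEGER REFERENCES dim_category(category_id)
        );
        CREATE TABLE fact_sales (
            product_id INTEGER REFERENCES dim_product(product_id),
            revenue REAL
        );
        INSERT INTO dim_category VALUES (1, 'Electronics'), (2, 'Furniture');
        INSERT INTO dim_product VALUES
            (1, 'Laptop', 1), (2, 'Phone', 1), (3, 'Desk', 2);
        INSERT INTO fact_sales VALUES (1, 2000.0), (2, 500.0), (3, 600.0);
        """
    )
    return conn


def snowflake_revenue_by_category(conn: sqlite3.Connection) -> list:
    """The same report as a star schema would give, at the cost of one more join."""
    return conn.execute(
        """
        SELECT c.name, SUM(f.revenue)
        FROM fact_sales AS f
        JOIN dim_product AS p ON p.product_id = f.product_id
        JOIN dim_category AS c ON c.category_id = p.category_id
        GROUP BY c.name
        ORDER BY c.name
        """
    ).fetchall()
```

Each category name is stored once, so renaming a category is a single-row update; the price is the second join in every query that groups by category.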

Furthermore, using data contracts within decentralized data architectures fosters collaboration among stakeholders and keeps quality and trust at the forefront. Decube's automated crawling feature complements the snowflake schema by streamlining metadata management. It supports data observability and governance through automatic source refreshes and controlled access with designated approval flows.

This synergy enables database architects to leverage the snowflake schema's capacity to support hierarchical relationships and detailed analysis, making it an invaluable tool for effectively managing complex datasets.

Hierarchical Model: Structuring Data in a Tree-Like Format for Clarity

The hierarchical model organizes data in a tree-like structure in which every record has exactly one parent, except the root, which has none. This design naturally represents one-to-many relationships, making it well suited to applications such as organizational charts, file systems, and inventory tracking systems. For example, in inventory management, items can be placed under parent nodes representing categories, which makes it easy to track quantities and locations.
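The single-parent rule can be illustrated with a small tree in plain Python. The `Node` class and the inventory names used with it are hypothetical, intended only as a sketch of the idea rather than a production data store.

```python
from typing import Iterator, List, Optional


class Node:
    """A record in a hierarchy: one parent (None for the root), many children."""

    def __init__(self, name: str, parent: Optional["Node"] = None) -> None:
        self.name = name
        self.parent = parent
        self.children: List["Node"] = []

    def add_child(self, name: str) -> "Node":
        """Attach a new child; the child's only parent is this node."""
        child = Node(name, parent=self)
        self.children.append(child)
        return child

    def path(self) -> str:
        """Follow parent links up to the root and return the full path."""
        parts = []
        node: Optional["Node"] = self
        while node is not None:
            parts.append(node.name)
            node = node.parent
        return "/".join(reversed(parts))

    def walk(self) -> Iterator["Node"]:
        """Yield this node and all descendants, depth first."""
        yield self
        for child in self.children:
            yield from child.walk()
```

For example, building `Inventory`, then `Electronics` under it, then `Laptops` under that, gives the leaf the path `Inventory/Electronics/Laptops`. Giving `Laptops` a second parent is not expressible in this structure, which is exactly the limitation of the hierarchical model.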

One of the key benefits of the hierarchical model is its simplicity and speed of access: the path from the root to any record is fixed, so retrieval is fast. However, the model has limitations. Adding new relationships or altering existing ones can be cumbersome, because a record cannot have more than one parent. Data management experts note that the tree-like structure ensures clear parent-child relationships, promoting integrity and consistency.

Decube's automated crawling feature addresses some of these limitations by automating metadata updates, so that metadata remains current without manual intervention. This capability supports data observability and governance, allowing organizations to manage their hierarchical data structures more effectively.

Applications benefiting from hierarchical information models include:

- Financial record management, where accounts serve as nodes with transactions as child nodes

- Geographical organization, where countries act as parent nodes with states and cities as subdivisions

These examples illustrate how hierarchical models can manage structured data while addressing specific organizational needs. The term is also used in statistics, where hierarchical (multilevel) models are a distinct technique that balances high variance against high bias in analysis by considering multiple levels of influence simultaneously.

Flat Model: Simplifying Data Storage with Single Table Structures

The flat model consists of a single table structure that organizes information in a straightforward manner. This design is easy to implement and manage, making it particularly suitable for small datasets or applications where complex relationships are not required. For instance, a CSV file for contacts exemplifies how flat file databases can efficiently arrange simple datasets, facilitating quick access and handling.
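A contacts file of this kind can be read with Python's built-in `csv` module. The sample data and the helper names below are hypothetical; the duplicate check is included because a flat file has no constraints of its own to prevent repeated rows.

```python
import csv
import io
from typing import Dict, List

# Hypothetical contents of a contacts.csv file; note the repeated last row.
CONTACTS_CSV = """name,email,city
Ada Lovelace,ada@example.com,London
Alan Turing,alan@example.com,London
Ada Lovelace,ada@example.com,London
"""


def load_contacts(text: str) -> List[Dict[str, str]]:
    """Parse CSV text into a list of row dictionaries keyed by the header."""
    return list(csv.DictReader(io.StringIO(text)))


def find_duplicates(rows: List[Dict[str, str]], key: str = "email") -> List[str]:
    """Return key values that appear more than once, in order of repetition."""
    seen = set()
    duplicates = []
    for row in rows:
        value = row[key]
        if value in seen:
            duplicates.append(value)
        else:
            seen.add(value)
    return duplicates
```

Here `find_duplicates(load_contacts(CONTACTS_CSV))` flags `ada@example.com`, the kind of redundancy that has to be caught by the application when the storage format cannot enforce uniqueness.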

While flat models offer simplicity and speed, they can lead to redundancy and inconsistencies if not managed with care. As noted by data engineers, "effective management practices are essential to reduce redundancy and ensure dependable access speeds." Organizations should consider the flat model where complexity is minimal and rapid access is the priority, for example JSON configuration files for application settings. Without diligent oversight, however, duplicate records may arise and jeopardize data integrity.

In summary, the flat model serves as an efficient solution for entities that prioritize ease of use and swift retrieval in their information management strategies.

Network Model: Facilitating Complex Relationships in Data Structures

The network model represents many-to-many relationships through a graph structure composed of nodes and links. It was developed to address the constraints of the hierarchical model, in which each record has a single parent, and therefore offers greater flexibility in representing data. The network model is particularly effective for applications with intricate relationships, such as social networks and organizational frameworks.

Implementing and managing the network model can be challenging, since using it efficiently requires a thorough understanding of the data structure, but it provides a robust framework for capturing complex relationships. As noted by Kandi Brian, the network model allows each record to have multiple parents, which is what makes many-to-many relationships possible. This capability is crucial for social media platforms and collaborative tools, where interactions extend beyond simple pairwise connections and involve multiple entities engaging in various ways.
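A record with multiple parents can be sketched in plain Python by storing links in both directions. The `NetworkStore` class and the group and user names are hypothetical, and this is only an in-memory illustration of the many-to-many idea, not a model of any particular network database product.

```python
from collections import defaultdict
from typing import Dict, List, Set


class NetworkStore:
    """Keep owner-to-member links in both directions for fast lookup."""

    def __init__(self) -> None:
        self._members_of: Dict[str, Set[str]] = defaultdict(set)
        self._owners_of: Dict[str, Set[str]] = defaultdict(set)

    def link(self, owner: str, member: str) -> None:
        """Record that `member` belongs to `owner`; either side may repeat."""
        self._members_of[owner].add(member)
        self._owners_of[member].add(owner)

    def parents(self, member: str) -> List[str]:
        """All owners of a member; more than one is allowed."""
        return sorted(self._owners_of.get(member, set()))

    def children(self, owner: str) -> List[str]:
        """All members attached to an owner."""
        return sorted(self._members_of.get(owner, set()))


def build_demo() -> NetworkStore:
    """Link hypothetical users to hypothetical groups."""
    store = NetworkStore()
    store.link("engineering", "alice")
    store.link("book-club", "alice")
    store.link("engineering", "bob")
    return store
```

In the demo, `alice` has two parents (`book-club` and `engineering`) while `engineering` has two children, a shape the tree-based hierarchical model cannot express.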

Furthermore, advancements in statistical methods for analyzing complex network structures further enhance the utility of the network model in real-world applications.

Schema Validation: Ensuring Data Integrity and Compliance in Design

Schema validation is the practice of checking that data conforms to a predefined structure and set of rules before it is processed or stored. It is vital for maintaining data integrity and ensuring compliance with regulatory standards. In 2026, adherence rates to validation practices have shown significant improvement, reflecting a growing recognition of its importance among organizations. By implementing robust schema validation, companies can mitigate data errors and inconsistencies and keep their databases reliable and credible.

Effective schema validation practices not only improve data quality but also play a pivotal role in broader data governance initiatives. For instance, organizations that have adopted schema enforcement tools report a notable reduction in inaccuracies and errors in downstream analysis. These tools detect schema violations in real time, enabling teams to address issues proactively and maintain high standards of data quality.
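As a minimal sketch of what such a check does, the following plain-Python validator compares a record against a declared schema and reports every violation. The `ORDER_SCHEMA` definition and its field names are hypothetical, and real enforcement tools typically support far richer rules than the type and presence checks shown here.

```python
from typing import Any, Dict, List, Tuple

# Hypothetical schema: field name -> (expected type, required?)
ORDER_SCHEMA: Dict[str, Tuple[type, bool]] = {
    "order_id": (int, True),
    "customer_email": (str, True),
    "total": (float, True),
    "note": (str, False),
}


def validate_record(
    record: Dict[str, Any], schema: Dict[str, Tuple[type, bool]]
) -> List[str]:
    """Return a list of human-readable violations; empty means valid."""
    errors = []
    for name, (expected_type, required) in schema.items():
        if name not in record:
            if required:
                errors.append(f"missing required field: {name}")
            continue
        value = record[name]
        if not isinstance(value, expected_type):
            errors.append(
                f"{name}: expected {expected_type.__name__}, "
                f"got {type(value).__name__}"
            )
    # Fields the schema does not know about are also reported.
    for name in record:
        if name not in schema:
            errors.append(f"unexpected field: {name}")
    return errors
```

A record is accepted only when the returned list is empty, so a pipeline can reject or quarantine bad rows before they reach storage instead of discovering them in downstream reports.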

Experts emphasize that schema validation is not merely a technical requirement but a business imperative. It helps ensure that data moving between systems adheres to established standards, which supports compliance with regulations such as GDPR and HIPAA. Organizations that use schema validation have also seen better collaboration among teams, because the schema provides a single source of truth for data standards and ultimately leads to more reliable decision-making.

For example, organizations employing schema enforcement layers have standardized their data practices and prevented low-quality data from reaching downstream systems. This proactive approach improves operational efficiency and instills confidence in the data used for critical business decisions. As institutions continue to navigate complex regulatory landscapes, the importance of schema validation for compliance will only grow.

Data Governance: Establishing Policies for Effective Schema Management

Data governance encompasses the policies and frameworks that dictate how data is overseen, protected, and used within an organization. By implementing robust governance policies, companies can ensure that their data schemas are designed and maintained in accordance with industry standards and regulatory requirements. Decube's automated crawling capability supports this process by managing metadata and refreshing data sources automatically, without manual updates. Additionally, secure access control lets organizations manage who can view or edit data, further strengthening governance.

This structured approach improves data quality and mitigates the risks associated with mismanagement, ultimately leading to better decision-making. A well-defined governance framework is essential for preserving the integrity of data assets and serves as a foundation for effective database management. For instance, organizations that prioritize governance often report significant improvements in data accuracy and compliance, illustrating the tangible benefits of a strategic governance approach.

User insights, such as those from Piyush P., highlight how Decube's automated column-level lineage provides an optimal blend of cataloging and observability, enabling business users to quickly identify issues in reports and dashboards. Industry experts emphasize that establishing clear policies is vital for fostering a culture of accountability and trust in information handling, which is crucial for navigating the complexities of modern information environments.

Data Cataloging: Organizing and Managing Data Assets for Better Access

Data cataloging involves systematically inventorying a company's data assets, which significantly improves access and management. By centralizing metadata in a catalog, organizations can simplify data discovery, enhance collaboration, and ensure compliance with governance policies. Effective cataloging practices help teams locate and use data more efficiently, ultimately driving informed decision-making and fostering a data-driven culture. As organizations generate increasing amounts of data, robust cataloging solutions become essential for maintaining data quality and accessibility.

For instance, organizations that leverage extensive data catalogs can reduce data discovery time by up to 60%, leading to annual savings of approximately $1.84 million. Additionally, institutions like the Children’s Hospital of Philadelphia have successfully implemented data catalogs, such as their branded catalog 'Gene', to provide clear lineage and governance signals. This ensures that teams can trace where data comes from and understand the applicable policies, thereby accessing AI-ready resources that reflect real-world complexities.

With Decube's data quality monitoring features - including preset field monitors, machine learning-powered tests that automatically detect quality thresholds, and smart alerts that reduce notification overload - organizations can strengthen their governance and observability. This evolution in data management highlights the critical role of data catalogs in promoting operational efficiency and compliance in today's data-centric landscape.

Conclusion

The exploration of essential data schema examples underscores the critical role of structured database design in effective information management. By utilizing various models - such as relational, star, snowflake, hierarchical, flat, and network schemas - organizations can customize their database structures to meet specific requirements, thereby enhancing data accessibility, integrity, and performance.

Key insights throughout this article highlight the necessity of integrating observability tools like Decube to strengthen database design. Features such as real-time monitoring, schema validation, and automated metadata management not only enhance data quality but also ensure compliance with governance standards. Each schema type presents unique advantages, ranging from the simplicity of flat models to the complexity-handling capabilities of network models. This illustrates that the appropriate choice of schema can lead to significant operational efficiencies.

In a data-driven environment, the imperative is clear: organizations must prioritize effective database design by selecting the schema that aligns with their operational needs. By embracing best practices and leveraging advanced tools like Decube, organizations will not only improve data management but also empower teams to make informed decisions based on reliable insights. The future of database management hinges on understanding and implementing these essential data schemas to successfully navigate the complexities of modern information landscapes.

Frequently Asked Questions

What is Decube and what does it offer for database design?

Decube is an observability platform that supports database design by helping ensure data integrity and reliability through features such as real-time monitoring and machine learning-powered anomaly detection.

How does Decube help in maintaining high data quality?

By integrating observability into the design process, Decube allows organizations to proactively identify and resolve issues within database schemas, minimizing the risk of quality issues that could lead to operational disruptions.

What is the relational model and how does it organize data?

The relational model organizes data into tables (relations) consisting of rows and columns. This facilitates efficient access and management, while foreign keys and other constraints protect data integrity.

What benefits do organizations experience by using the relational model?

Organizations have reported improvements of up to 30% in data integrity metrics by using the relational model, which is essential for long-term data management success.

What is a star schema and how does it optimize data warehousing?

A star schema is a modeling method that organizes data into a central fact table surrounded by dimension tables, simplifying complex queries and enhancing query performance, particularly for analytical tasks.

How does Decube's automated crawling feature contribute to data management?

Decube's automated crawling feature keeps metadata continuously updated without manual intervention, improving data observability and governance and allowing for precise access control over assets.

What role do data contracts play in database management?

Data contracts foster collaboration among stakeholders, turning raw data into reliable assets and reinforcing the significance of governance and observability in modern data management.

List of Sources

- Decube: Elevate Database Design with Comprehensive Data Observability

- Decube Secures $3.0M Seed (https://trysignalbase.com/news/funding/decube-secures-30m-seed)

- Decube - 2026 Company Profile & Team - Tracxn (https://tracxn.com/d/companies/decube/__ZkriPEzzpmdbo73QJs7DD47FgSjOyR1uIv45CtNCLIQ)

- Data Observability Market Report 2026 - Research and Markets (https://researchandmarkets.com/reports/6076291/data-observability-market-report?srsltid=AfmBOoqV_0fhDciI91PxJALuxPnq30Jq9j6DVMvdRoI7lk9Z1DURwT6I)

- Enterprise Data Observability Software Market | Global Market Analysis Report - 2036 (https://futuremarketinsights.com/reports/enterprise-data-observability-software-market)

- Data Quality Improvement Stats from ETL – 50+ Key Facts Every Data Leader Should Know in 2026 (https://integrate.io/blog/data-quality-improvement-stats-from-etl)

- Relational Model: Structuring Data for Efficient Access and Management

- Data in 2026: Interchangeable Models, Clouds, and Specialization (https://thenewstack.io/data-in-2026-interchangeable-models-clouds-and-specialization)

- Why 2026 Will Redefine Data Engineering as an AI-Native Discipline (https://cdomagazine.tech/opinion-analysis/why-2026-will-redefine-data-engineering-as-an-ai-native-discipline)

- Data Platform News (January 2026) (https://linkedin.com/pulse/data-platform-news-january-2026-pawel-potasinski-wag6f)

- Gartner Announces Top Predictions for Data and Analytics in 2026 (https://gartner.com/en/newsroom/press-releases/2026-03-11-gartner-announces-top-predictions-for-data-and-analytics-in-2026)

- Database Development with AI in 2026 - Brent Ozar Unlimited® (https://brentozar.com/archive/2026/01/database-development-with-ai-in-2026)

- Star Schema: Optimizing Data Warehousing for Performance and Simplicity

- How to Implement Star Schema Design (https://oneuptime.com/blog/post/2026-01-30-star-schema-design/view)

- Best Data Warehouse Tools for Analytics in 2026 (https://ovaledge.com/blog/data-warehouse-tools)

- Star Schema Data Modeling: Why It Still Matters (and How It Compares to Snowflake) (https://medium.com/@gema.correa/star-schema-data-modeling-why-it-still-matters-and-how-it-compares-to-snowflake-81790c420f73)

- Star schemas: An efficient way to put your data to work (https://fivetran.com/learn/star-schema)

- Updating Data Architecture for 2026 with Informatica, Dataiku, Qlik, and CData (https://dbta.com/Editorial/News-Flashes/Updating-Data-Architecture-for-2026-with-Informatica-Dataiku-Qlik-and-CData-173717.aspx)

- Snowflake Schema: Enhancing Data Normalization and Query Efficiency

- Snowflake Schema: Efficiently structuring complex data for modern data warehouses | Vienesse Consulting (https://vienesse-consulting.de/en/snowflake-schema-komplexe-daten-effizient-strukturieren-fuer-moderne-data-warehouses)

- Star Schema vs Snowflake Schema: Key Differences & Examples (https://fivetran.com/learn/star-schema-vs-snowflake)

- Star Schema vs Snowflake in 2025: The Final Verdict (https://medium.com/techsutra/star-schema-vs-snowflake-in-2025-the-final-verdict-ddbb5a0cdcd9)

- Star Schema vs Snowflake Schema (https://datamites.com/blog/star-schema-vs-snowflake-schema?srsltid=AfmBOooBeoSYNA72NQGbp_0tlfjeqsk-VmcCP0Qd2J4gTaWE_uo1B7YL)

- Hierarchical Model: Structuring Data in a Tree-Like Format for Clarity

- Hierarchical Models for Data and Policy, and a Walk-through Tutorial! (https://medium.com/data-policy/hierarchical-models-for-data-and-policy-and-a-walk-through-tutorial-2341fd1b1a48)

- Scaling the Heights of Multi-Tenant SaaS with Hierarchical Data Models (https://linkedin.com/pulse/scaling-heights-multi-tenant-saas-hierarchical-data-models-goyal-mthuc)

- What Is a Hierarchical Database Model? Benefits & Use Cases (https://kyvosinsights.com/glossary/hierarchical-database-model)

- Hierarchical data models: a modern approach to organizing EDW data (https://ursahealth.com/new-insights/hierarchical-data-models)

- AI Trends 2026: Hierarchical Systems and Automation | Gaurav Sen posted on the topic | LinkedIn (https://linkedin.com/posts/gkcs_ai-trends-in-2026-derived-from-research-activity-7420171473732046848-x7qg)

- Flat Model: Simplifying Data Storage with Single Table Structures

- Flat File Database: Benefits, Limits & Real Examples (https://clustox.com/blog/flat-file-database)

- The Jedi Way of Data Engineering (Quotes to Help You Succeed) (https://medium.com/art-of-data-engineering/the-jedi-way-of-data-engineering-f798fe2efb7b)

- Flat File Database: Definition, Examples, Advantages, and Limitations (https://estuary.dev/blog/flat-file-database)

- Network Model: Facilitating Complex Relationships in Data Structures

- Understanding the Network Database Model | General Resources | MariaDB Documentation (https://mariadb.com/docs/general-resources/database-theory/understanding-the-network-database-model)

- Statistical Modeling for Complex Networks (https://ui.adsabs.harvard.edu/abs/2022nsf....2210439Z/abstract)

- Finding Hidden Connections in Complex Networks With Modern Graph Algorithms (https://medium.com/data-science-collective/finding-hidden-connections-with-modern-graph-algorithms-in-complex-networks-e13b54516b99)

- Network database model: a graph structure for complex relationships | Kandi Brian posted on the topic | LinkedIn (https://linkedin.com/posts/alicia-crowder-kandi_the-network-database-model-is-a-data-model-activity-7336859436222689281-2zEB)

- New Network Model Captures the Complexity of Real-World Relationships | Newswise (https://newswise.com/articles/new-network-model-captures-the-complexity-of-real-world-relationships)

- Schema Validation: Ensuring Data Integrity and Compliance in Design

- The Power of Schema Validation: Why Data Validation is Your Best Friend (https://medium.com/@lauragmukherjee/the-power-of-schema-validation-why-data-validation-is-your-best-friend-cc14ed16f06b)

- April 1st, 2026 — Gravic Publishes ‘The Connection’ Article: Ensuring Enterprise Data Integrity Across Platforms: The Growing Importance of Cross Platform Validation and the Power of Shadowbase Compare Plus - Shadowbase (https://shadowbasesoftware.com/featured/ensuring-enterprise-data-integrity)

- MetaRouter Introduces Schema Enforcement to Tackle Data Quality and Consistency Challenges (https://metarouter.io/press-media-hub/metarouter-introduces-schema-enforcement-to-tackle-data-quality-and-consistency-challenges)

- MetaRouter Introduces Schema Enforcement to Tackle Data Quality and Consistency Challenges (https://prnewswire.com/news-releases/metarouter-introduces-schema-enforcement-to-tackle-data-quality-and-consistency-challenges-302386462.html)

- digna 2026.01 Expands Enterprise Data Validation Inside the Database (https://einpresswire.com/article/900186705/digna-2026-01-expands-enterprise-data-validation-inside-the-database)

- Data Governance: Establishing Policies for Effective Schema Management

- How to Build a Data Governance Framework That Actually Works in 2026 (https://workingexcellence.com/data-governance-framework-2026)

- Data governance in 2026: Benefits, business alignment, and essential need - DataGalaxy (https://datagalaxy.com/en/blog/data-governance-in-2026-benefits-business-alignment-and-essential-need)

- Top 12 Data Governance predictions for 2026 - hyperight.com (https://hyperight.com/top-12-data-governance-predictions-for-2026)

- Establishing Data Governance Frameworks to Enhance Cybersecurity – Brilliance Security Magazine (https://brilliancesecuritymagazine.com/cybersecurity/establishing-data-governance-frameworks-to-enhance-cybersecurity)

- Enterprise Data Governance: A Complete Modern Framework (https://databricks.com/blog/enterprise-data-governance-complete-modern-framework)

- Data Cataloging: Organizing and Managing Data Assets for Better Access

- What Is a Data Catalog? Importance, Benefits & Features | Alation (https://alation.com/blog/what-is-a-data-catalog)

- Understanding Data Cataloging: A Key to Efficient Data Management (https://actian.com/data-cataloging)

- Data Catalog in 2026 - Why It is a Must Have for Your Enterprise Data (https://sganalytics.com/blog/data-catalog-2026-for-enterprise-data)

- Data Catalogs in 2026: Definitions, Trends, and Best Practices for Modern Data Management (https://promethium.ai/guides/data-catalogs-2026-guide-modern-data-management)

- Data Catalog for AI: Capabilities, Uses & Tooling in 2026 (https://atlan.com/know/data-catalog-for-ai)
