Understanding Data Discovery Meaning: Definition and Key Insights

Discover the meaning of data discovery, its importance, and real-world applications.

Introduction

In an era characterized by information overload, mastering data discovery is essential for informed decision-making. This article examines the definition and importance of data discovery, exploring how systematic information exploration can transform raw data into actionable insights that drive organizational success.

As organizations navigate the complexities of data management, they must consider how to apply data discovery techniques not only to comply with regulations but also to gain a competitive edge in their industries. Those that fail to do so risk losing that edge.

Define Data Discovery: Core Concept and Importance

Data discovery is a systematic method for identifying, gathering, and analyzing data from various sources to uncover patterns, trends, and insights that guide decision-making. Organizations today face significant challenges in managing vast amounts of information, which makes a systematic approach necessary. By applying data discovery techniques, businesses can convert raw data into strategic insights, improving operational efficiency and strategic planning.

Data discovery also matters because it strengthens data governance and data quality. Efficient discovery processes support compliance with regulations such as GDPR and HIPAA and help ensure that sensitive information is handled securely. Automated platforms such as Decube can recognize and categorize personally identifiable information (PII), helping organizations maintain compliance and reduce the risks associated with data breaches.
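As a rough illustration of what automated PII categorization involves, the sketch below labels a column when most of its values match a known pattern. It is not Decube's implementation; the patterns, the `classify_column` and `scan_table` names, and the 0.8 threshold are all assumptions chosen for the example, and real PII detection needs far broader coverage and validation.

```python
import re

# Hypothetical patterns for illustration only; order matters because the
# first pattern that clears the threshold wins.
PII_PATTERNS = {
    "email": re.compile(r"^[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}$"),
    "us_ssn": re.compile(r"^\d{3}-\d{2}-\d{4}$"),
    "phone": re.compile(r"^\+?\d[\d\s().-]{7,}\d$"),
}


def classify_column(values, threshold=0.8):
    """Return the first PII label matched by at least `threshold` of the
    non-empty values, or None when no pattern qualifies."""
    non_empty = [str(v).strip() for v in values if v not in (None, "")]
    if not non_empty:
        return None
    for label, pattern in PII_PATTERNS.items():
        hits = sum(1 for v in non_empty if pattern.match(v))
        if hits / len(non_empty) >= threshold:
            return label
    return None


def scan_table(rows):
    """Map each column name in a list of dict rows to a PII label or None."""
    columns = {}
    for row in rows:
        for name, value in row.items():
            columns.setdefault(name, []).append(value)
    return {name: classify_column(vals) for name, vals in columns.items()}


# Made-up sample data.
sample_rows = [
    {"contact": "ana@example.com", "tax_id": "123-45-6789", "city": "Lisbon"},
    {"contact": "bo@example.org", "tax_id": "987-65-4321", "city": "Oslo"},
]
pii_report = scan_table(sample_rows)
```

Here `pii_report` marks `contact` and `tax_id` as sensitive and leaves `city` unlabeled. A label like this could then drive access controls or masking rules in a governance workflow.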

Consider how companies have used data discovery to achieve measurable results. Slevomat, a discount portal, saw a 23% rise in sales after adopting a metrics-based decision-making framework that optimized its data management processes. Similarly, The North Face uses consumer data from its mobile app to offer tailored shopping experiences, boosting customer satisfaction and loyalty.

Furthermore, data discovery plays a vital role in business decision-making. Organizations that adopt data-driven cultures are 19 times more likely to be profitable and six times more likely to retain customers. This underlines the value of incorporating data discovery into strategic planning to encourage innovation and adaptability to market changes. Ultimately, integrating data discovery into business strategy is essential for sustained growth and competitive advantage.

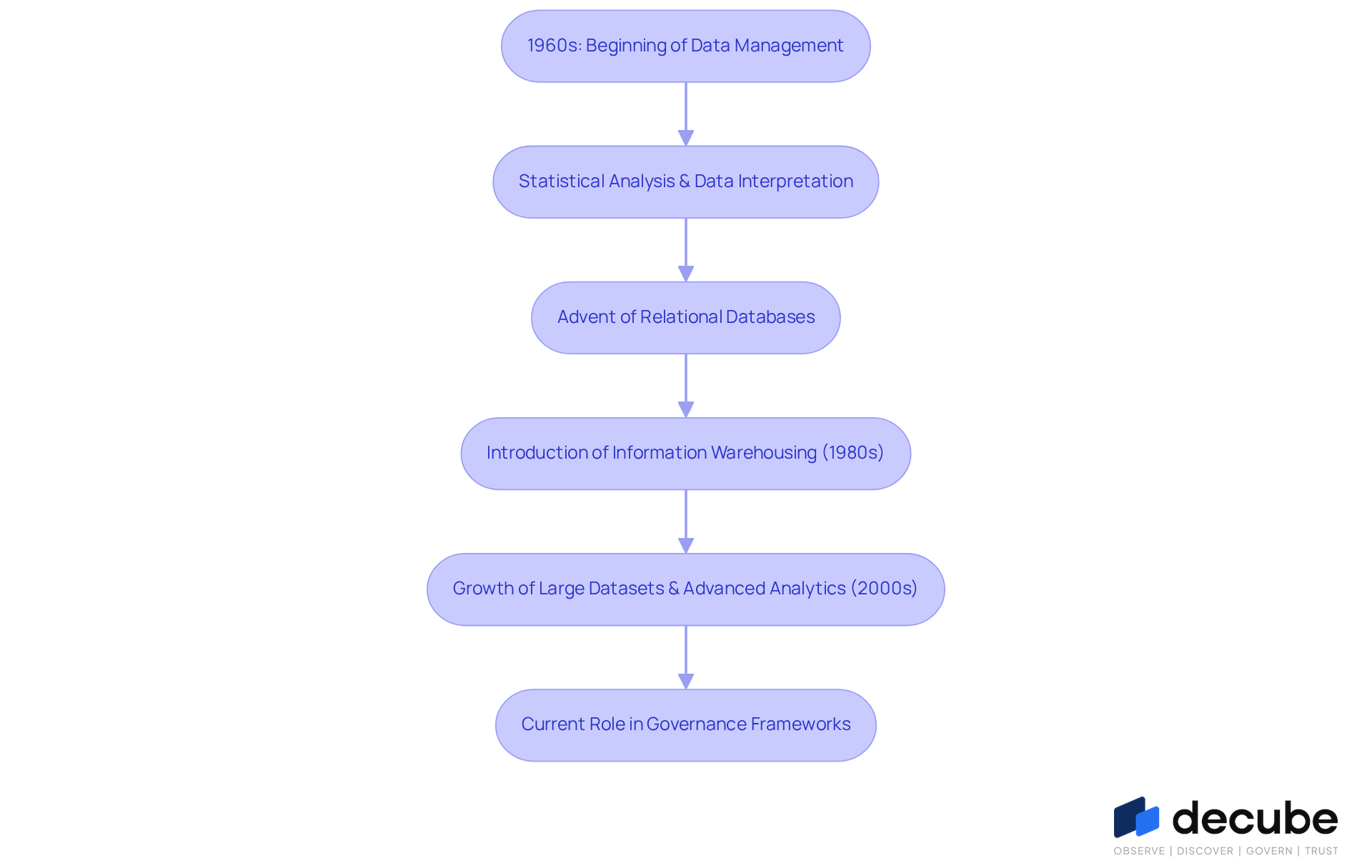

Explore the Evolution of Data Discovery: Historical Context

The evolution of data discovery reflects a response to the growing complexity of data management since the 1960s. Early data mining techniques focused on statistical analysis and data interpretation. As data environments became more intricate, the advent of relational databases marked a pivotal advance, improving the ability to store and query data efficiently. The introduction of data warehousing in the 1980s significantly increased the demand for effective insight extraction, as companies sought to draw business intelligence from vast datasets. By the 2000s, the rapid growth of large datasets, coupled with advanced analytics tools, had made data discovery a dynamic and interactive process in which users could examine data visually and derive insights in real time. Today, data discovery plays a vital role within governance frameworks, enabling organizations to manage compliance and uphold quality standards. This ongoing evolution underscores the need for organizations to embrace advanced data management strategies to thrive in today's data-driven landscape.

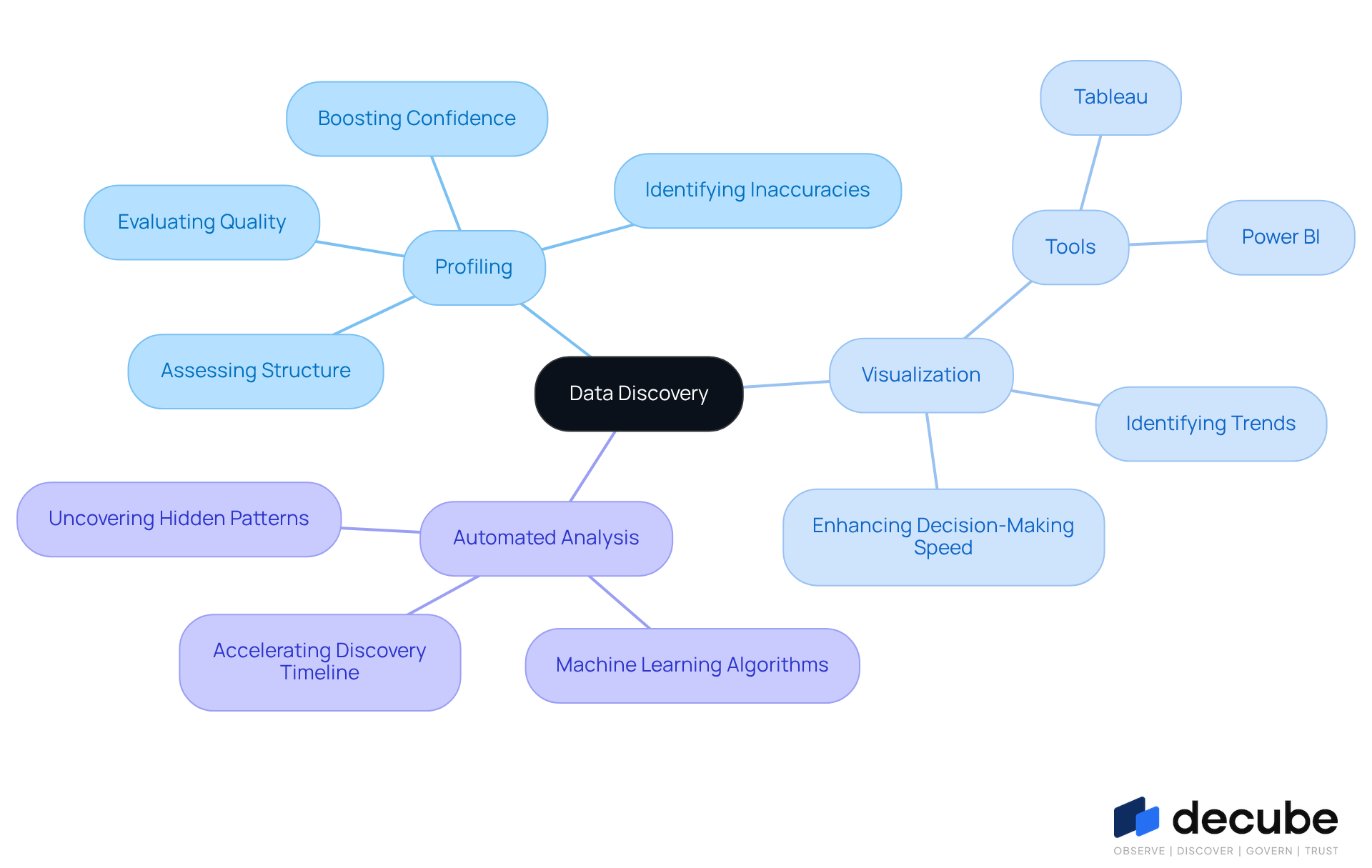

Identify Key Characteristics of Data Discovery: Processes and Methods

In an era where data is abundant, the ability to discover and analyze data effectively is paramount for organizational success. The key characteristics of effective data discovery include:

- Profiling

- Visualization

- Automated analysis

Each plays a crucial role in enhancing data-driven decision-making.

Data profiling is vital for evaluating the quality and structure of data, ensuring its reliability for later analysis. Profiling not only identifies inaccuracies but also boosts confidence in the data being used.
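To make profiling concrete, here is a minimal sketch using only the Python standard library. It summarizes completeness, cardinality, and value types per column; the `profile_column` and `profile_table` helpers and the sample `orders` rows are invented for illustration, and dedicated profiling tools report many more metrics.

```python
from collections import Counter


def profile_column(values):
    """Summarize completeness, cardinality, and value types for one column."""
    total = len(values)
    present = [v for v in values if v is not None and v != ""]
    missing = total - len(present)
    return {
        "rows": total,
        "missing": missing,
        "missing_ratio": missing / total if total else 0.0,
        "distinct": len(set(present)),
        "types": dict(Counter(type(v).__name__ for v in present)),
    }


def profile_table(rows):
    """Profile every column found in a list of dict rows."""
    columns = {}
    for row in rows:
        for name, value in row.items():
            columns.setdefault(name, []).append(value)
    return {name: profile_column(vals) for name, vals in columns.items()}


# Made-up sample data with typical quality problems.
orders = [
    {"order_id": 1, "amount": 19.99, "country": "DE"},
    {"order_id": 2, "amount": None, "country": "DE"},
    {"order_id": 3, "amount": "n/a", "country": ""},
    {"order_id": 4, "amount": 5.0, "country": "FR"},
]
report = profile_table(orders)
```

In this sample, the `amount` profile shows one missing value and a mix of `float` and `str` entries, which is the kind of inconsistency profiling is meant to surface before analysis begins.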

Visualization tools, such as Tableau and Power BI, enable users to examine data interactively, aiding in the discovery of trends and anomalies that might otherwise remain concealed. Research indicates that organizations using effective data visualization techniques can improve their decision-making speed by up to fivefold, which underscores the importance of these tools in the discovery process.

Automated analysis employs machine learning algorithms to uncover hidden patterns and insights, significantly shortening the time to discovery while requiring little manual intervention.

Furthermore, tracking data lineage is essential for understanding how data flows within an organization, ensuring transparency and compliance with regulatory standards. Organizations that combine these methods can enhance their operational efficiency and strategic outcomes and make evidence-based decisions with confidence.
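Lineage is commonly modeled as a directed graph of datasets. The sketch below assumes a hypothetical `LINEAGE` mapping from each dataset to its direct sources and shows how upstream dependencies and downstream impact could be derived from it; the dataset names are made up, and production lineage tools typically capture this graph automatically from query logs or pipeline metadata.

```python
from collections import deque

# Hypothetical lineage: each dataset maps to the datasets it is built from.
LINEAGE = {
    "revenue_dashboard": ["orders_clean", "customers_clean"],
    "orders_clean": ["orders_raw"],
    "customers_clean": ["crm_export"],
    "orders_raw": [],
    "crm_export": [],
}


def upstream(dataset, lineage):
    """Return every dataset that `dataset` depends on, directly or indirectly."""
    seen = set()
    queue = deque(lineage.get(dataset, []))
    while queue:
        current = queue.popleft()
        if current in seen:
            continue
        seen.add(current)
        queue.extend(lineage.get(current, []))
    return seen


def downstream(dataset, lineage):
    """Return every dataset that would be affected if `dataset` changed."""
    return {name for name in lineage if dataset in upstream(name, lineage)}
```

For example, `downstream("orders_raw", LINEAGE)` identifies `orders_clean` and `revenue_dashboard` as the assets to review when the raw orders feed changes, which is the kind of impact question a compliance or quality audit tends to ask.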

Illustrate Data Discovery Applications: Real-World Use Cases

In an era where data integrity is paramount, data discovery emerges as a critical tool across industries, especially in financial services and healthcare. In the financial sector, organizations use data discovery to detect fraudulent transactions by analyzing transaction patterns. For instance, credit card companies employ these tools to identify unusual spending behaviors that may indicate fraud, substantially improving the accuracy and speed of fraud detection.
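One simple way to picture "unusual spending" is as a statistical outlier relative to a cardholder's own history. The sketch below uses the modified z-score (based on the median and the median absolute deviation) with the conventional 3.5 cutoff. This is a stand-in technique chosen for illustration, not a description of how any card issuer actually detects fraud; the `flag_unusual` helper and the sample amounts are invented.

```python
from statistics import median


def flag_unusual(amounts, threshold=3.5):
    """Return the indices of amounts whose modified z-score exceeds `threshold`.

    The modified z-score is 0.6745 * |x - median| / MAD, where MAD is the
    median absolute deviation. It is less distorted by extreme values than
    a mean-and-standard-deviation score.
    """
    med = median(amounts)
    mad = median([abs(a - med) for a in amounts])
    if mad == 0:
        # No measurable spread, so no amount can be scored as an outlier.
        return []
    return [
        i
        for i, a in enumerate(amounts)
        if 0.6745 * abs(a - med) / mad > threshold
    ]


# Made-up purchase history for a single cardholder.
history = [12.5, 9.0, 15.2, 11.1, 13.4, 10.8, 480.0]
suspicious = flag_unusual(history)
```

With this history, only the 480.0 purchase is flagged. Real fraud systems combine many more signals (merchant, location, timing, device) and usually rely on trained models, but the underlying idea of comparing a transaction against an expected pattern is the same.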

In healthcare, data discovery is vital for compliance oversight, enabling organizations to monitor sensitive patient information and ensure adherence to regulations such as HIPAA. This capability matters because the healthcare sector faces increasing scrutiny over data privacy and security, and healthcare organizations often struggle to keep up with evolving regulations and the complexities of patient data management. By employing data discovery techniques, they can identify compliance risks proactively and address them before they escalate.

Data discovery tools such as Decube facilitate real-time monitoring of data flows, helping ensure that patient details are managed in accordance with regulatory standards. Decube's automated crawling feature enhances data observability by automatically refreshing metadata, simplifying the management of data quality and governance. Users have noted that Decube's intuitive design and its ability to integrate smoothly with existing data systems have notably improved their data management processes, enabling teams to monitor data quality and maintain trust.

These applications not only enhance operational efficiency but also foster innovation and competitive advantage in today's data-driven landscape, underscoring the importance of robust data governance frameworks. Ultimately, the strategic implementation of data discovery tools can redefine operational standards and elevate industry practices.

Conclusion

Organizations often struggle to use their data effectively because they lack a systematic approach, which is why understanding data discovery is essential. Data discovery helps organizations make informed decisions while improving operational efficiency and ensuring compliance with regulations. By integrating data discovery into their strategies, businesses can transform raw data into actionable insights that drive growth and innovation.

The article traces the evolution of data discovery from its historical roots to its current applications in various industries. Profiling, visualization, and automated analysis are emphasized as the essential processes that enhance data-driven decision-making. Real-world examples, including those from financial services and healthcare, illustrate how organizations use data discovery to improve operational efficiency, detect fraud, and ensure compliance with regulations.

Adopting robust data discovery practices is not just a strategic advantage; it is essential for thriving in a data-driven future. Organizations are encouraged to embrace these insights to unlock the full potential of their data assets and pave the way for sustained growth in an increasingly complex and data-rich environment.

Frequently Asked Questions

What is data discovery?

Data discovery is a systematic method for identifying, gathering, and analyzing information from various sources to uncover patterns, trends, and insights that guide decision-making.

Why is data discovery important for organizations?

Data discovery is important because it helps organizations manage vast amounts of information, convert raw data into strategic insights, improve operational efficiency, and enhance strategic planning.

How does data discovery enhance governance and quality?

Data discovery enhances governance and quality by improving information retrieval processes, which helps organizations comply with regulations like GDPR and HIPAA, ensuring sensitive information is handled securely.

What role do automated platforms play in data discovery?

Automated platforms, such as Decube, can recognize and categorize personally identifiable information (PII), assisting organizations in maintaining compliance and reducing risks associated with data breaches.

Can you provide examples of companies that have benefited from data discovery?

Yes, Slevomat, a discount portal, experienced a 23% increase in sales after optimizing their information management processes. The North Face uses consumer information from its mobile app to create tailored shopping experiences, enhancing customer satisfaction and loyalty.

How does data discovery impact business decision-making?

Data discovery significantly impacts business decision-making by fostering a data-driven culture, which makes organizations 19 times more likely to be profitable and six times more likely to retain customers.

What is the overall significance of integrating data discovery into business strategies?

Integrating data discovery into business strategies is essential for sustained growth and competitive advantage, as it encourages innovation and adaptability to market changes.

List of Sources

- Define Data Discovery: Core Concept and Importance

- 5 Stats That Show How Data-Driven Organizations Outperform Their Competition (https://keboola.com/blog/5-stats-that-show-how-data-driven-organizations-outperform-their-competition)

- Data discovery in 2026: definition, process and techniques (https://future-processing.com/blog/data-discovery-definition-process-techniques)

- Data-Driven Decision Making To Improve Business Outcomes (https://clootrack.com/blogs/data-driven-decision-making-improve-business-outcomes)

- Data Discovery Platforms: 8 Solutions to Know in 2026 | Dagster (https://dagster.io/learn/data-discovery-platform)

- Data Discovery, why it is important in Data Governance (https://ovaledge.com/blog/data-discovery-for-efficient-data-governance)

- Explore the Evolution of Data Discovery: Historical Context

- The Evolution of Data Discovery From Statistics to Data Security | Ground Labs (https://groundlabs.com/blog/the-evolution-of-data-discovery)

- 20 Data Science Quotes by Industry Experts (https://coresignal.com/blog/data-science-quotes)

- 19 Inspirational Quotes About Data | The Pipeline | ZoomInfo (https://pipeline.zoominfo.com/operations/19-inspirational-quotes-about-data)

- Data Discovery - Traditional Methods, Challenges & How AI Can Help | Collate Learning Center (https://getcollate.io/learning-center/data-discovery)

- Identify Key Characteristics of Data Discovery: Processes and Methods

- What Is Data Discovery? Process, Tools & Benefits (https://domo.com/glossary/data-discovery)

- What Is Data Discovery? Best Practices and How to Implement (https://snowflake.com/en/fundamentals/data-discovery)

- Data Discovery: Unveiling Insights for Informed Decision-Making (https://acceldata.io/blog/data-discovery)

- What is Data Discovery? Process, Methods & Why it Matters (https://fullstory.com/blog/what-is-data-discovery)

- The Importance of Data Visualization in Data Discovery (https://dbta.com/Editorial/Trends-and-Applications/The-Importance-of-Data-Visualization-in-Data-Discovery-109672.aspx)

- Illustrate Data Discovery Applications: Real-World Use Cases

- Big Data in Healthcare: Uses, Benefits, Challenges, and Real Examples (https://mghihp.edu/news-and-more/opinions/data-analytics/big-data-healthcare-uses-benefits-challenges-and-real-examples)

- 10 statistics for better fraud prevention in 2025 (https://alloy.com/blog/2025-financial-fraud-statistics)

- Fraud Detection & Prevention in Banking Market Report 2025-30: Size, Share, Trends (https://juniperresearch.com/research/fintech-payments/fraud-security/fraud-detection-prevention-banking-market-report)

- How AI is Transforming Healthcare Data Compliance (https://accountablehq.com/post/how-ai-is-transforming-healthcare-data-compliance)

- Fraud Detection And Prevention Market | Industry Report, 2030 (https://grandviewresearch.com/industry-analysis/fraud-detection-prevention-market)
